morluto

51 posts

This is what happens when you fund a company so heavily that it has nothing to do with the cash. No one wants anything from dedaluslabs

Cathy Di@itsCathyDi

build agents. share them with the world. get noticed by a YC startup. launching the @dedaluslabs campus ambassador program. > exclusive merch > free credits > top builders flown to sf > shape whats next for agents

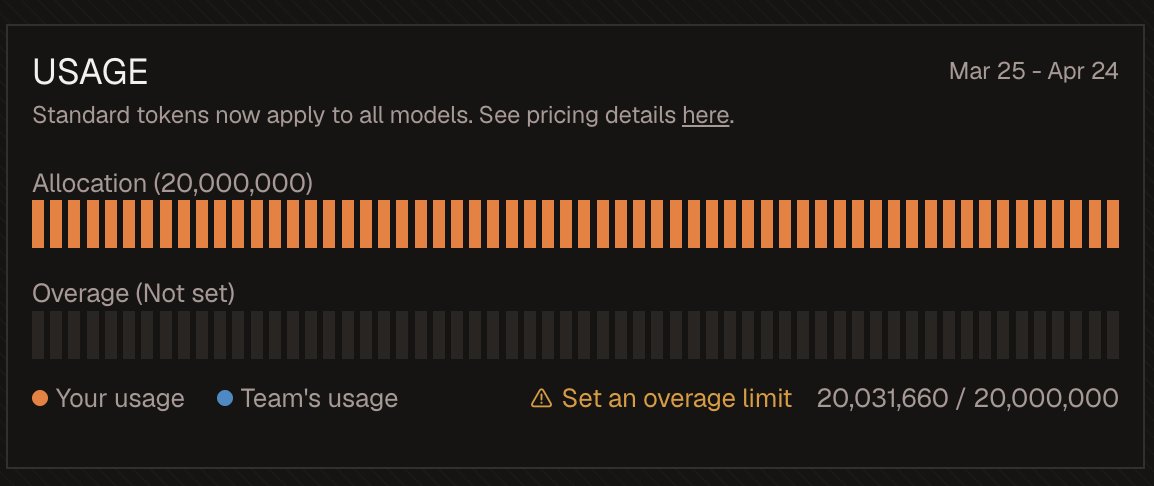

@novasarc01 at the end of the day you have to make money

alibaba isn't nearly the cash cow amazon is if u look at their books

@MTabarrok worked around this by giving it an OCR tool like GLM-vision

one line + a ZAI key

JEPAs are finally easy to train end-to-end without any tricks!

Excited to introduce LeWorldModel: a stable, end-to-end JEPA that learns world models directly from pixels, no heuristics.

15M params, 1 GPU, and full planning <1 second.

📑: le-wm.github.io

models need atomic primitives and better tools

reminds me of the recent experiment where they embedded a way to do computations inside the transformer weights

Fabricated Knowledge@fabknowledge

OMG AUTORESEARCH IS SO COOL > literally throws 51231231 moving average grids to predict future information of a time series, despite having covariates. I think autoresearch is awesome, but yeah like we gotta make better frameworks for the model being lazy pretty much.

there’s a whole archetype of ppl who are weird / don’t fit into corporate and they either are at community college and building some kind of crazy advanced mechatronic fursuit, and it’s 50/50 they get one of the fancy math prizes later or become a professional comedian

i feel one of the things about silicon valley is that it’s really good at making space for these ppl

@_TobiasLee when do you think was the moment x became good enough?

@mattmiesnieks current MLLMs lack fundamental visual primitives

relevant paper here, there's still quite a long way to go, esp when baselining against humans

arxiv.org/pdf/2601.06521
