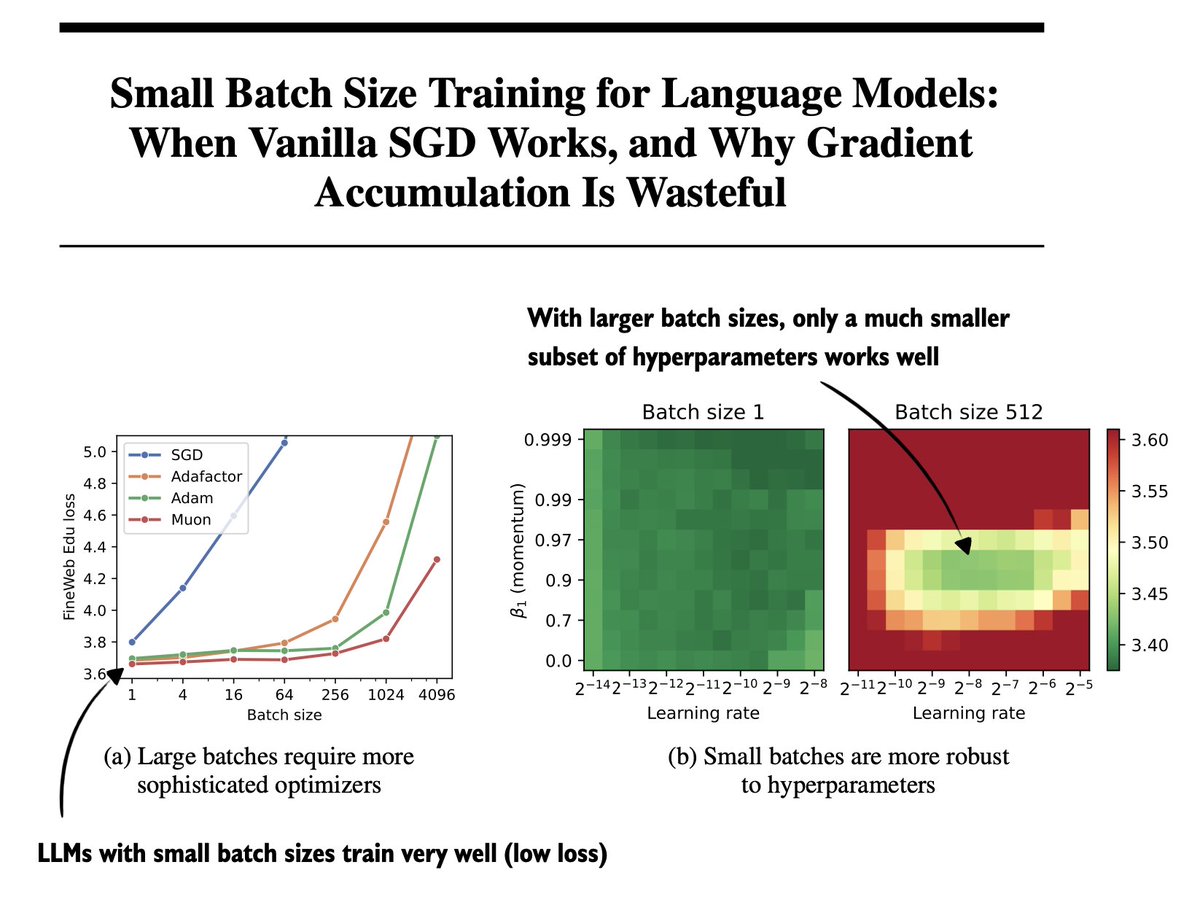

🚨 Did you know that small-batch vanilla SGD without momentum (i.e. the first optimizer you learn about in intro ML) is virtually as fast as AdamW for LLM pretraining on a per-FLOP basis? 📜 1/n

Martin Marek

@mrtnm

still writing code

@francoisfleuret @ylecun When will it be easy, or even cheap, to iterate on model architectures? I suspect that’s when this will pop wide open.

We additionally introduce a "confidence" prior that directly approximates a cold likelihood. This allows us to see cold posteriors from a new perspective: as approximating a valid prior combined with the categorical likelihood. 6/8