Michael Bernstein

768 posts

@msbernst

@stanford Professor of Computer Science, @simile_ai co-founder, nationally bestselling author. I build interactive, social, and societal tech.

Once you have JIT objectives, you can embed them into various LLM architectures via existing generators and evaluators. Evaluations on N=205 participant-provided inputs show that JIT objectives produce user-preferred outputs, whether generating experts, tools, or feedback.

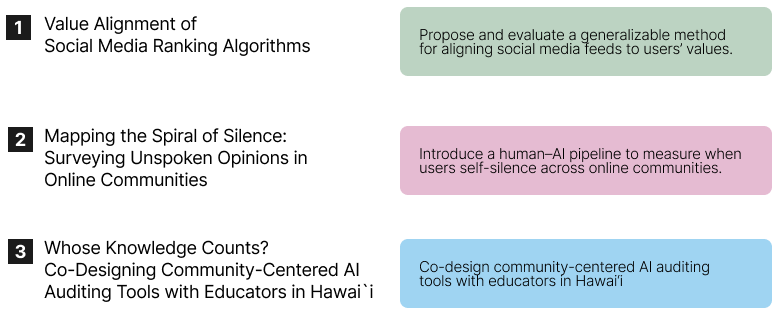

2. Mapping the Spiral of Silence: Surveying Unspoken Opinions in Online Communities w/ @Diyi_Yang @msbernst We introduce a human–AI pipeline to measure the spiral of silence across political subreddits, revealing how community design shapes when people choose to stay silent online. Preprint: arxiv.org/abs/2502.00952

1. Value Alignment of Social Media Ranking Algorithms w/ @FarnazJ_ @tizianopiccardi @_ziv_e Zach Robertson @sanmikoyejo @msbernst We present a generalizable method for aligning social media feeds to users’ values, showing with 400+ users that value-driven rankings produce meaningfully aligned feeds using their own Twitter data. Preprint: arxiv.org/abs/2509.14434

Most of what I actually need help with, I never think to tell a model. But why is it on me to remember? Our new paper asks: what if AI could proactively specialize to individuals and the tasks they’re carrying out at this very moment? 🧵

This is an absolute masterclass from MIT on how to speak

I think it’s pretty clear that simulation is the next frontier for AI. The most impressive feats of AI to date come when we have a clear environment + reward, whether it be beating Lee Sedol at Go, winning an IMO gold medal, or writing entire apps from scratch. In these cases, the RL algorithm can try different actions and observe the well-defined consequences in the safety of a Docker container. But what about messy real-world situations involving people? The rewards are unclear, the stakes are high, and you can’t experiment in the real world. But these situations are precisely where the next big opportunity in AI is. To crack this, we need to *simulate* society (“put society into a Docker container”). Concretely, this means building a model that can predict what will happen in any given situation (real or hypothetical). If we can do this, we are only limited by our imagination: predict the future, optimize for better outcomes, answer hypothetical (“what if”) questions. Ultimately, this goes beyond making better decisions; it’s about giving us a better understanding of ourselves and the world. Simulation is the whole enchilada. And this is exactly the research that @simile_ai is working on. Read more here: simile.ai/blog/simulatio…

What’s the point of a “helpful assistant” if you have to always tell it what to do next? In a new paper, we introduce a reasoning model that predicts what you’ll do next over long contexts (LongNAP 💤). We trained it on 1,800 hours of computer use from 20 users. 🧵
