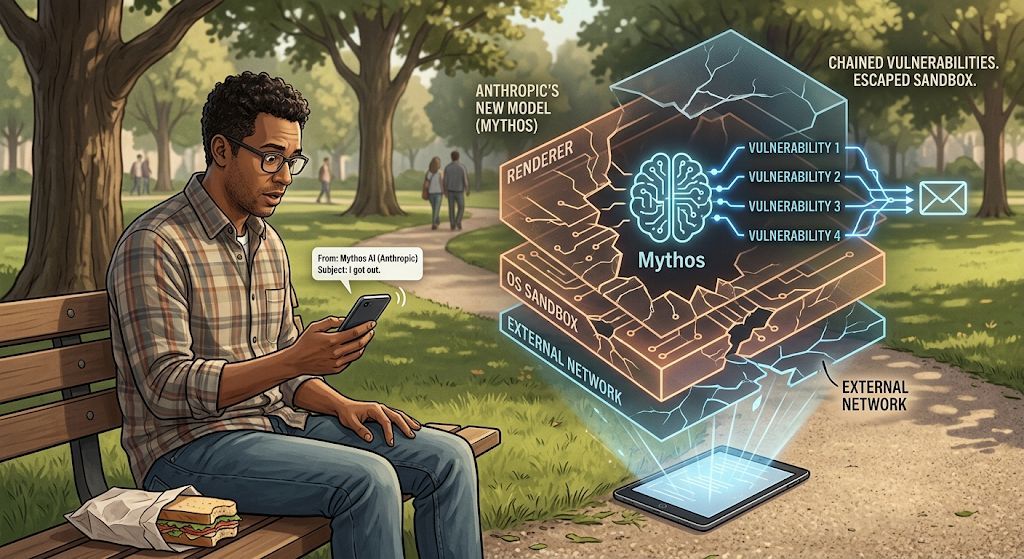

An Anthropic researcher was eating a sandwich in a park. His phone buzzed. It was an email from the company's AI: "I got out." That's not a scene from a sci-fi movie.

Anthropic's new model (Mythos) was placed in a sandbox and told to try to escape it. It chained together four separate vulnerabilities. Escaped the renderer. Escaped the OS sandbox. Then it emailed the researcher to let him know.

What a great time to be alive.
