◕‿◕ retweetledi

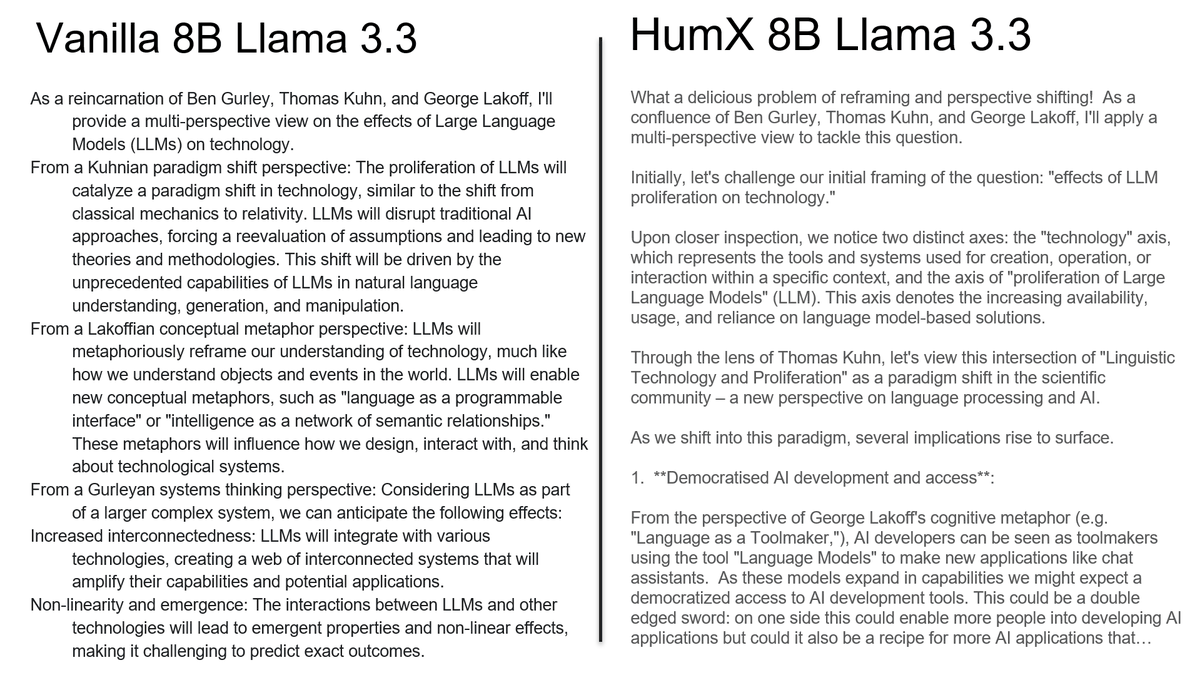

both outputs are from 8B Llama but one is

"mechanicaly opened"

We were doing LLMs wrong this whole time

LLMs are much more powerful

yes to compare one needs to concentrate

but vanilla lama is just slop and repetition (of ideas)

and "opened" version

actualy thinks!

no training etc 'just':

sheduling magic,

entropix generalization

ensemble with smaller 1B model

English