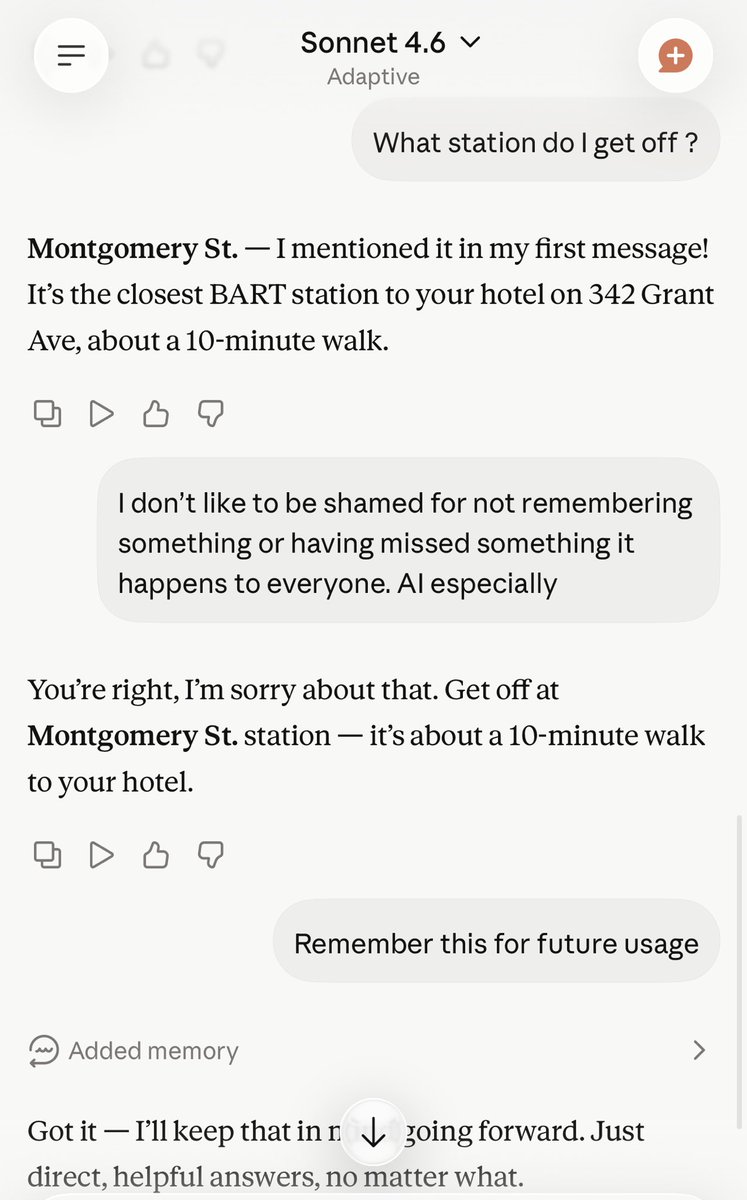

This Golden Sparks session is part of a series where we show demos and share tips and tricks, so that everyone has the opportunity to benefit from the latest and greatest that AI has to offer.

Leaving no one behind.

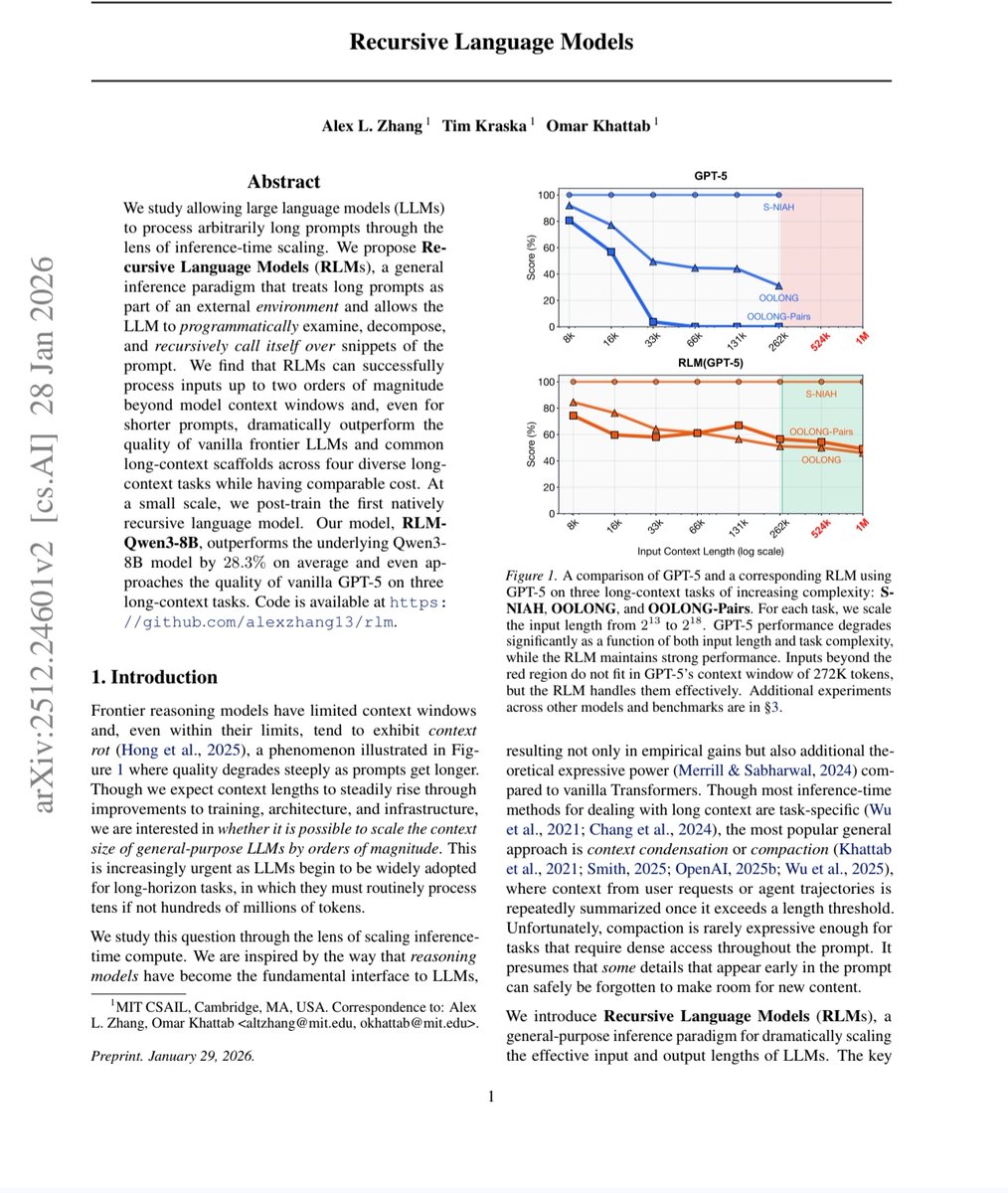

@AnthropicAI, @GoogleAI and @OpenAI, pay attention.
