
✨ We are excited to finally go live today!

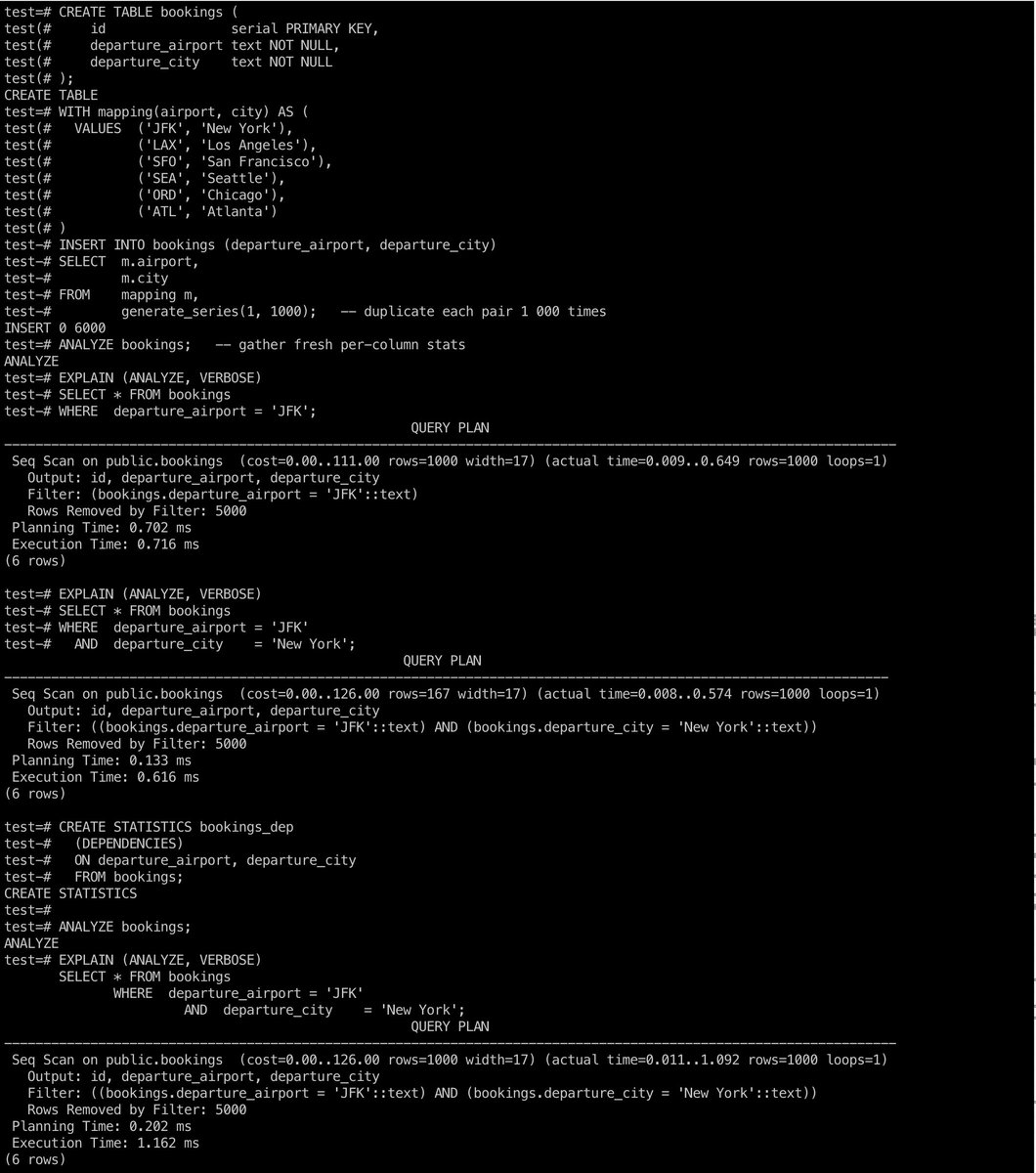

Nile is a Postgres platform to ship multi-tenant AI applications - fast, safe, and limitless

thenile.dev

Nile decouples storage from compute, virtualizes tenants, and supports vertical and horizontal scaling globally to provide:

1. Unlimited Postgres databases and unlimited virtual tenant databases

2. Customer-specific vector embeddings at 10x lower cost

3. Secure isolation for each customer's data and embeddings

4. Autoscale to millions of tenants and billions of embeddings

5. Place tenants on serverless or provisioned compute - globally

6. Tenant-level branching, backups, schema migration, and insights
