Nisal Mihiranga

1.5K posts

@nisalm

Associate Vice President - Data & AI @TechOneGlobal , Microsoft MVP (AI)

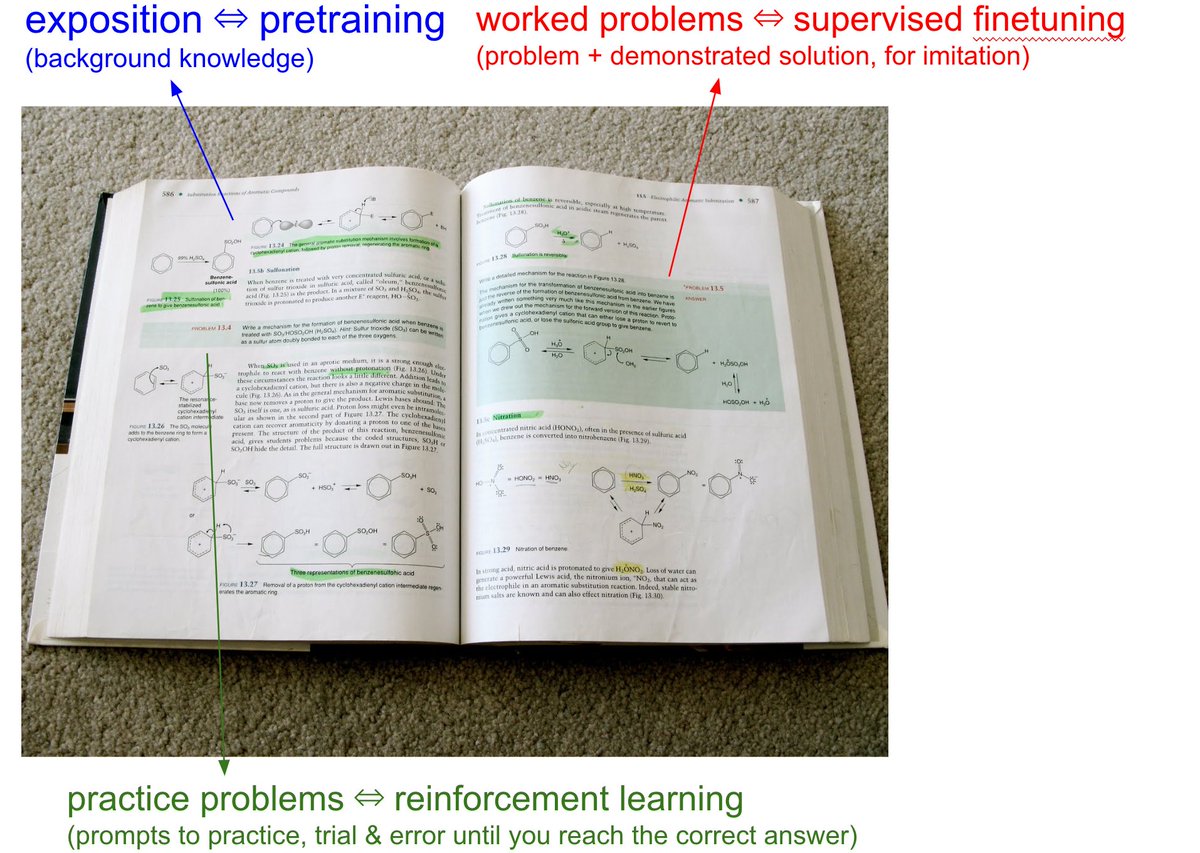

The @karpathy interview

0:00:00 – AGI is still a decade away
0:30:33 – LLM cognitive deficits
0:40:53 – RL is terrible
0:50:26 – How do humans learn?
1:07:13 – AGI will blend into 2% GDP growth
1:18:24 – ASI
1:33:38 – Evolution of intelligence & culture
1:43:43 – Why self driving took so long
1:57:08 – Future of education

Look up Dwarkesh Podcast on YouTube, Apple Podcasts, Spotify, etc. Enjoy!

NEWS: Chinese media tested ADAS in various scenarios, including highways & night driving. @Tesla’s vision-based system outperformed emerging Chinese brands like Huawei & Xiaomi, as well as traditional automakers. Even with LiDAR, competitors’ ADAS performance lags behind Tesla.

Rankings

Top Performers:
• Tesla Model 3 (RWD Refreshed) – 5/6
• Tesla Model X (2023 LR Refreshed) – 5/6
• Xpeng G6 – 3/6
• Wenjie M9 – 3/6
• Zhijle R7 – 3/6
• BYD Z9GT EV – 3/6

Mid-Tier:
• Aion RT (2025) – 2/6
• Platinum 3X – 2/6
• Avita 12 – 2/6
• Wenjie M7 – 2/5
• Avita 07 – 2/5

Low Performers:
• Ideal L6 – 1/6
• Xiaomi SU7 – 1/5
• Wenjie M8 – 1/5
• QinLDM – 1/5
• iCAR V23 – 1/5
• Xiaomi SU7 Ultra (2025) – 1/4
• BYD Seagull – 1/4
• NIO ES6 – 1/4

Failed All Tests (0 Passes):
• Zeekr 001 (2025 YOU Edition) – 0/6
• Baojun Enjoy PHEV – 0/6
• Lynk & Co – 0/6
• Han LEV – 0/5
• Zero runing C10 – 0/5
• PASSAT – 0/5
• GAC Honda P7 – 0/5
• Zeekr 7X (2025 100kWh) – 0/5
• Xpeng P7+ – 0/4
• Song Pro DM – 0/4
• Letao L60 – 0/4
• Star Era ET – 0/4
• Firefly – 0/4

Full 1.5 hour video in thread below:

Nvidia CEO Jensen Huang on Elon Musk and @xAI: “Never been done before – xAI did in 19 days what everyone else needs one year to accomplish. That is superhuman – There's only one person in the world who could do that – Elon Musk is singular in his understanding of engineering.”