🎉 Announcing Nixtla Enterprise 2.0 🎉

TL;DR: more models, domain expertise, reasoning capabilities, MCP interactions, and optimized compute environments.

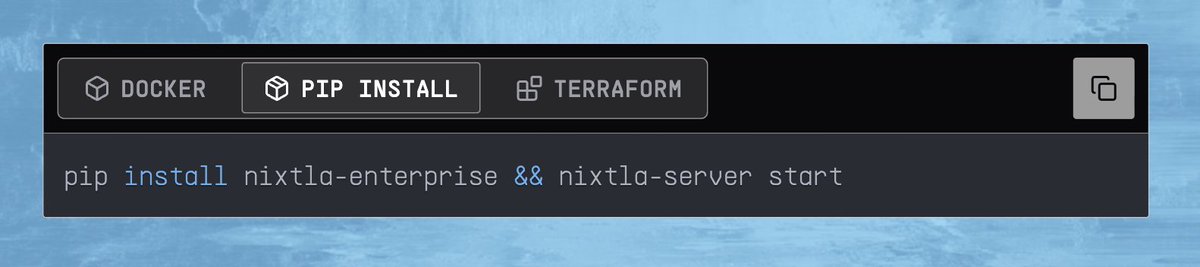

We’re excited to share the next iteration of our enterprise offering. Starting today, companies can sign up for early access. 🚀

These new features are designed to help engineers build, evaluate, and deploy forecasting pipelines more effectively.

🌐 In Nixtla Enterprise 2.0, time series models, LLMs, agents, and humans work together through three core capabilities:

1️⃣ Model Zoo: built with the best models

- Optimized implementations of leading foundation models, including Chronos 2 (AWS), TimesFM 2.5 (Google), and FlowState-r1 (IBM)

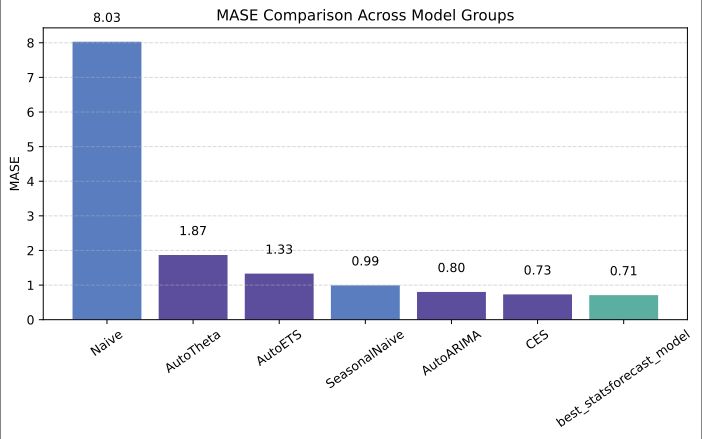

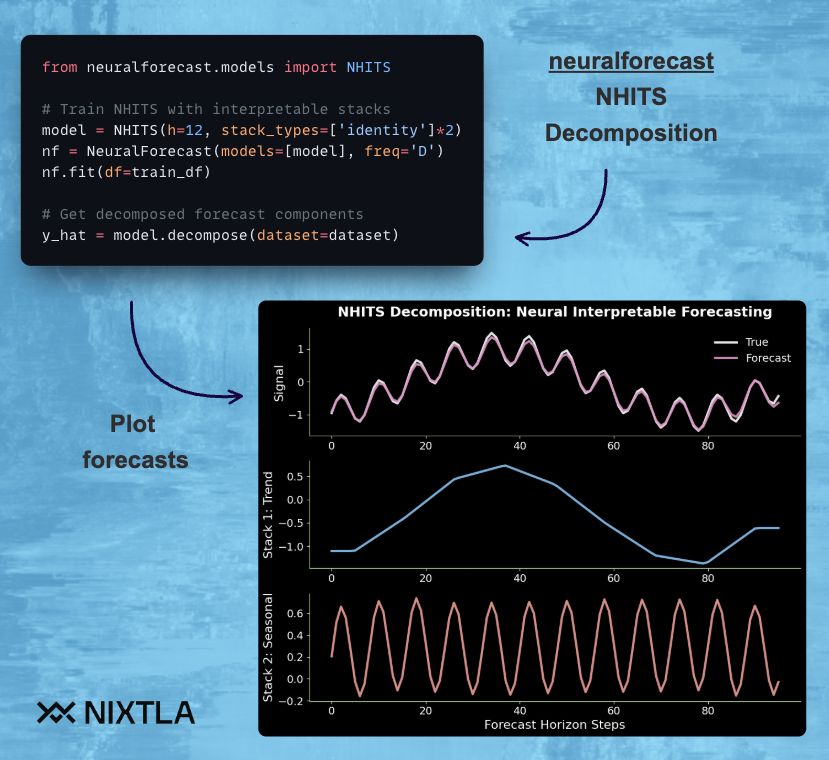

- Enterprise-ready, battle-tested models from the Nixtlaverse, spanning statistical, ML, and neural approaches

2️⃣ Unified Interface: simple, consistent integration

- Add or swap models in your pipelines by changing a single line of code

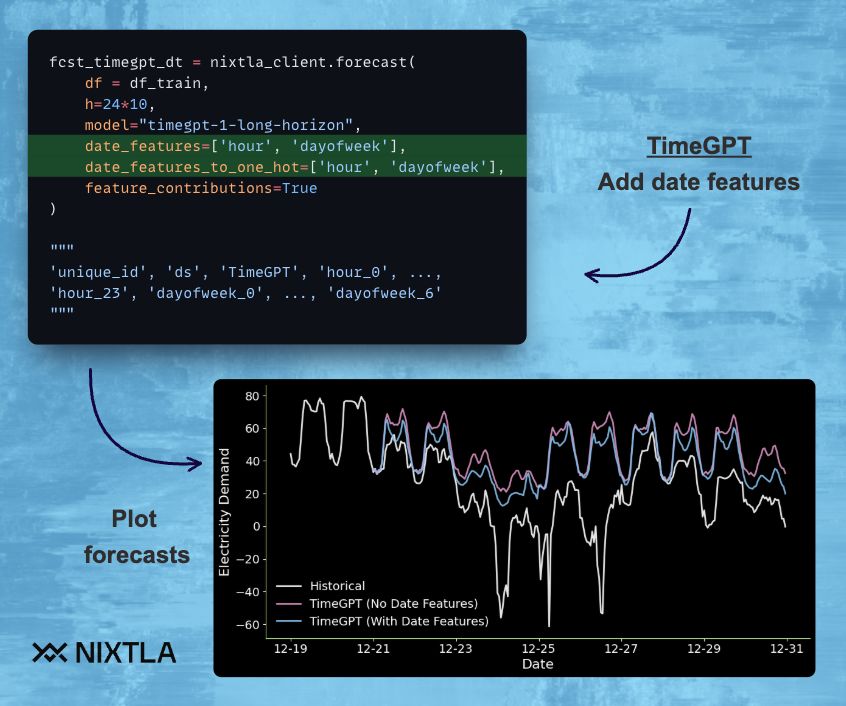
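The post doesn't show Nixtla's actual interface. The sketch below is a plain-Python illustration of the general idea behind "change a single line of code." Every model implements the same `forecast(y, h)` method, so a pipeline can switch models by editing only the line that constructs the model. `Forecaster`, `Naive`, `SeasonalNaive`, and `run_pipeline` are toy classes and functions written for this example. They are not part of Nixtla's API.

```python
from dataclasses import dataclass
from typing import Protocol, Sequence


class Forecaster(Protocol):
    """Hypothetical shared interface: every model exposes the same method."""

    def forecast(self, y: Sequence[float], h: int) -> list[float]: ...


@dataclass
class Naive:
    """Toy model: repeats the last observed value h times."""

    def forecast(self, y: Sequence[float], h: int) -> list[float]:
        return [y[-1]] * h


@dataclass
class SeasonalNaive:
    """Toy model: repeats the last full season of length `season_length`."""

    season_length: int

    def forecast(self, y: Sequence[float], h: int) -> list[float]:
        last_season = list(y[-self.season_length:])
        return [last_season[i % self.season_length] for i in range(h)]


def run_pipeline(model: Forecaster, y: Sequence[float], h: int) -> list[float]:
    # The pipeline depends only on the shared interface, not on a concrete model.
    return model.forecast(y, h)


history = [10.0, 12.0, 14.0, 11.0, 13.0, 15.0]

model = Naive()                          # swap this single line...
# model = SeasonalNaive(season_length=3)  # ...to change the model
print(run_pipeline(model, history, h=4))  # → [15.0, 15.0, 15.0, 15.0]
```

Because `run_pipeline` only relies on the common method, the evaluation and deployment code around it stays unchanged when the model changes.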

3️⃣ Time Series Agent: AI-assisted forecasting, redefined

- Trained on years of experience deploying state-of-the-art forecasting systems at leading companies

- Plan and run end-to-end pipelines in your favorite IDE or AI provider

- Powered by Nixtla MCP (Model Context Protocol), which provides domain knowledge and a fully integrated execution environment so agents can reason, generate code, run analyses, refine results, and make recommendations

Together, these capabilities unlock a new way to build, compare, and operationalize time series intelligence:

🧠 Guided generation and iterative refinement informed by domain expertise and the latest research.

⚙️ Adaptive support across models and strategies based on performance feedback.

🔄 End-to-end experimentation workflows that reduce manual overhead while keeping humans in control.

🤝 Flexibility to use natural language or Python and plug Nixtla into existing AI workflows.

This launch marks a new chapter in Nixtla’s mission: building a time-series ecosystem that blends adaptive tooling with human expertise, helping teams forecast and iterate more efficiently with the latest innovations.

📩 Join the waitlist to be among the first to try this version

👇 Links to the demos and blog post

#HappyForecasting

#timeseries #forecasting #AI #LLM
