
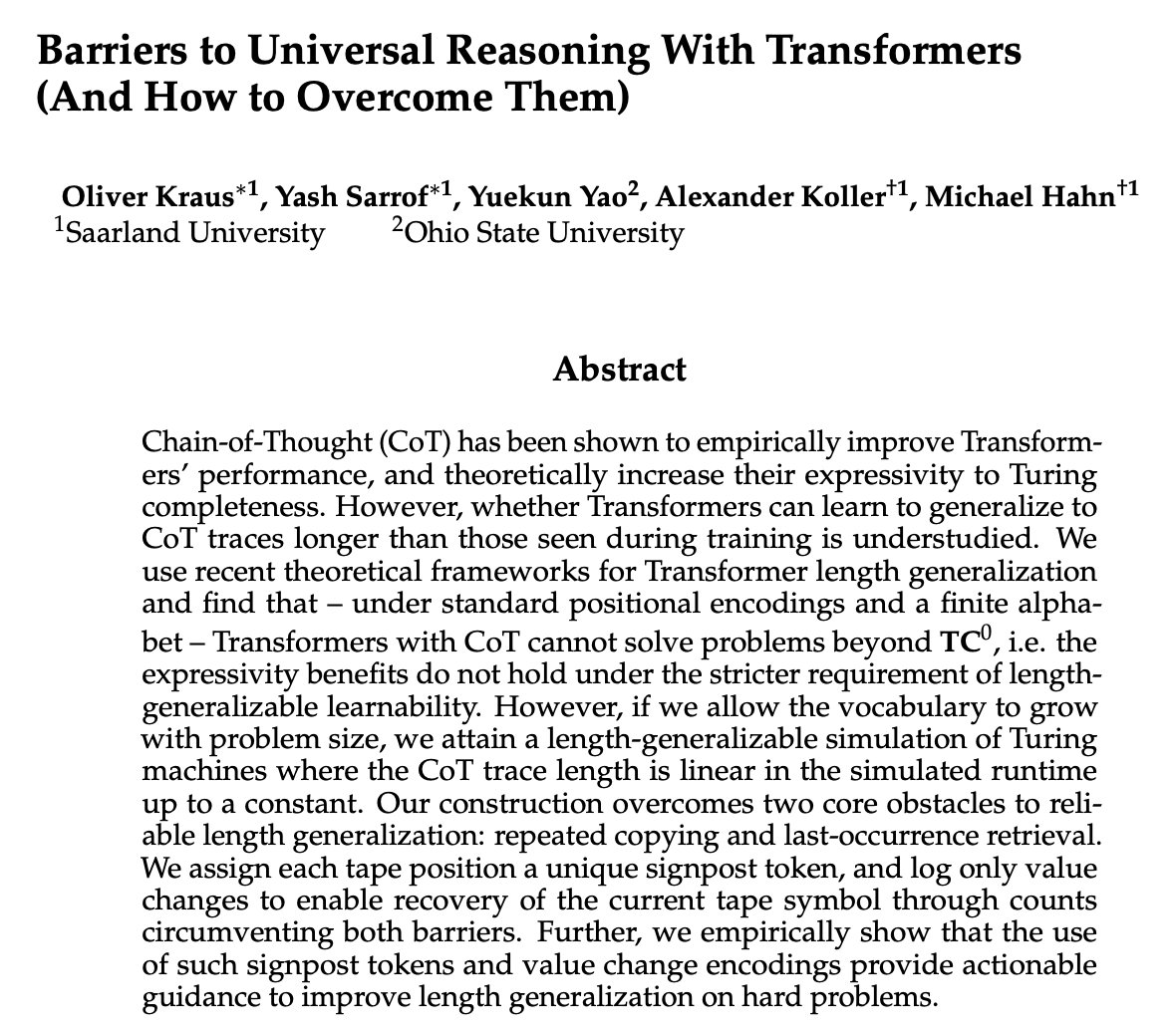

Should I use Macro F1 or Accuracy? Why not Kappa? Why do some use this, and others that? What's actually evaluated here? 😵‍💫

Happy to share the final version of this paper on multi-class classification evaluation:

direct.mit.edu/tacl/article/d…

#machinelearning #nlproc #ml
