Yuxiang Nie

@npyuxl

Ph.D. student at HKUST. Research interests: multi-modal language models and medical AI.

The LAI4BM workshop is happening tomorrow! Can’t wait to see everyone and dive into the latest advances in AI for biomedicine. See you at HKUST! 🚀 #AI #Biomedicine #HKUST

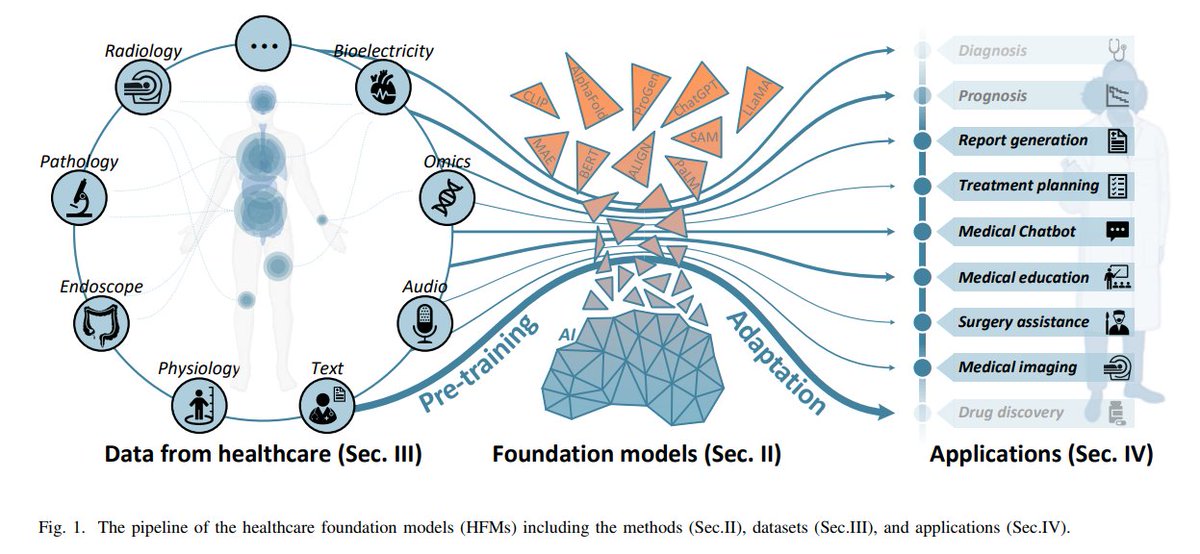

A new review paper from HKUST Smart Lab! 🚀 This survey showcases their groundbreaking work on Healthcare Foundation Models (HFM), tackling challenges like data scarcity and algorithm interpretability. Accepted by IEEE RBME! Check it out: hkustsmartlab.github.io/2024/11/13/rbm… #HKUST

🏥 You can directly download 167k patient summaries (PMC-Patients) from @huggingface now!

- 📚 Extracted from case reports
- 🗒️ Summary = admission + labs + diagnosis + treatment + discharge + follow-up notes
- 👥 Annotated with 293k similar patients and 3.1M relevant articles