Neil Tenenholtz

@ntenenz

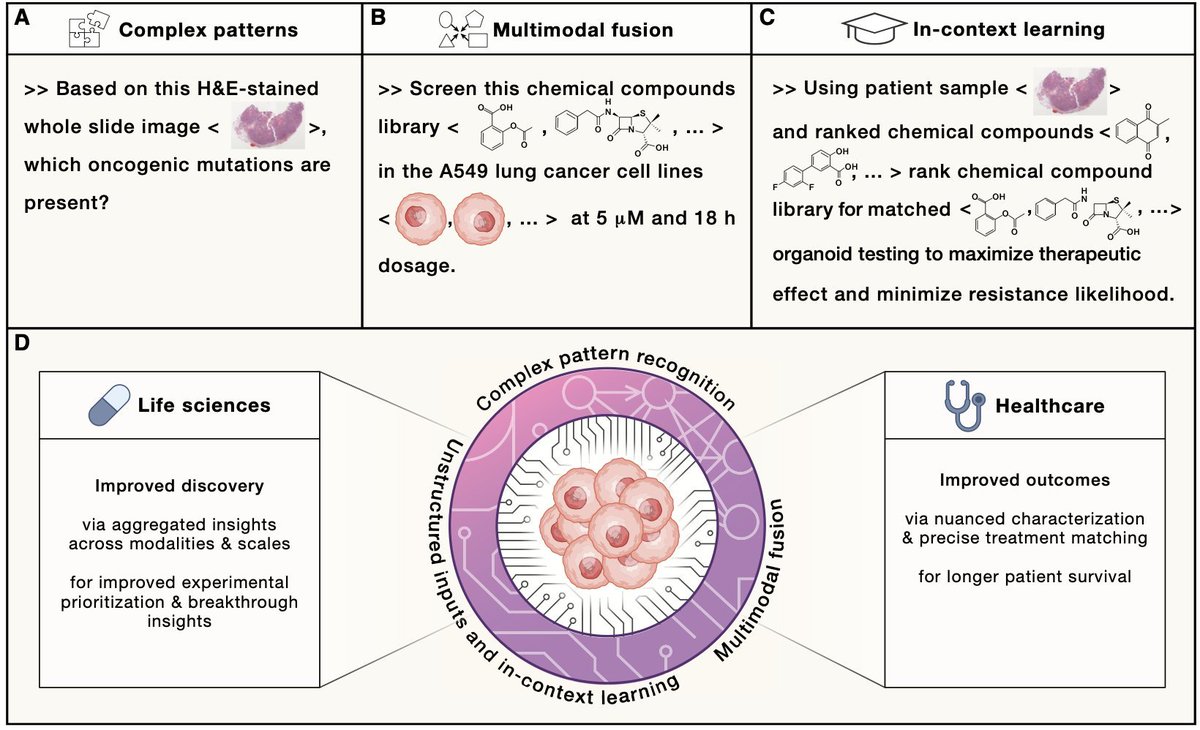

Multimodal model training for biology / healthcare at MSR

This may be one of the first real signs of superhuman intelligence in software. On some of the most optimized attention workloads, agents can now outperform almost all human GPU experts by searching continuously for 7 days with no human intervention inside the optimization loop. Terry and I started agentic coding efforts at NVIDIA 1.5 years ago. Neither of us knew GPU programming, so from day one we pushed toward fully automated, human-out-of-the-loop systems. We call it blind coding. Over those 1.5 years, the two of us generated 4 generations across 2 agent systems. Since the 2nd generation, the stacks have been self-evolving. Each agent is now around 100k non-empty LOC. When we released the blind-coding framework VibeTensor in January, the implication was easy to miss. AVO makes the signal clearer. My bet is: blind coding is the future of software engineering. Human cognition is the bottleneck.

PSA: never, ever write "we use the same learning rate across all methods for fair comparison". I read this as "do not trust any of our conclusions" and then i move on. If learning rate tuning is not mentioned, it takes me a little more time to notice that, but i also move on.

either your research dies or it lives long enough to become infra

Ok, now that i have a bit more time to respond... the "straightforward" way is to greedily pack within buckets. the size of the buckets will have a nontrivial impact on the uniformity of the compute cost. you'll have to make some decisions around bucket size, re-sorting batches, etc. this will be a balancing act between maintaining your desired curriculum and maximizing utilization. you'll likely want to estimate your cost fn empirically as it's a function of your parallelism strategy, kernels, etc. you can start with a pretty simple quadratic model. finally, depending on sequence length and model size, the relative importance of this can vary. perform the basic flop math (including the cross-token attn term) and get a sense of the sequence length at which that term becomes negligible -- the frequently cited 6N drops it. there is some public work that goes beyond this, for example arxiv.org/pdf/2509.21841…, if you're especially interested.
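a rough sketch of the above in Python, not a reference implementation. everything here is an assumption made for illustration: the function names, the first-fit-decreasing heuristic, the placeholder cost coefficients (fit yours from measured step times), and the attention term of roughly 6 * n_layers * d_model * seq_len training FLOPs per token, whose constant depends on convention (causal vs. full attention).

```python
from typing import List


def seq_cost(length: int, linear: float = 1.0, quadratic: float = 1e-3) -> float:
    """Hypothetical cost model: linear term (matmuls) plus quadratic term (attention).

    The coefficients are placeholders; estimate them empirically for your setup.
    """
    return linear * length + quadratic * length * length


def pack_bucket(lengths: List[int], max_tokens: int) -> List[List[int]]:
    """Greedy first-fit-decreasing packing of one bucket into packs of <= max_tokens."""
    packs: List[List[int]] = []
    free: List[int] = []  # remaining token budget of each pack
    for n in sorted(lengths, reverse=True):
        if n > max_tokens:
            raise ValueError(f"sequence of length {n} exceeds max_tokens={max_tokens}")
        for i, room in enumerate(free):
            if n <= room:
                packs[i].append(n)
                free[i] -= n
                break
        else:
            # No existing pack has room, so open a new one.
            packs.append([n])
            free.append(max_tokens - n)
    return packs


def pack_stream(lengths: List[int], bucket_size: int, max_tokens: int) -> List[List[int]]:
    """Pack consecutive buckets independently so the original ordering
    (the curriculum) is only perturbed within a bucket."""
    packs: List[List[int]] = []
    for start in range(0, len(lengths), bucket_size):
        packs.extend(pack_bucket(lengths[start:start + bucket_size], max_tokens))
    return packs


def pack_cost(pack: List[int]) -> float:
    """Cost of a pack, assuming attention is masked so sequences do not attend
    across document boundaries (cost is the sum of per-sequence costs)."""
    return sum(seq_cost(n) for n in pack)


def attention_fraction(n_params: float, n_layers: int, d_model: int, seq_len: int) -> float:
    """Share of per-token training FLOPs from the cross-token attention term,
    under the assumed approximation 6*N + 6*n_layers*d_model*seq_len."""
    dense = 6.0 * n_params
    attn = 6.0 * n_layers * d_model * seq_len
    return attn / (dense + attn)


if __name__ == "__main__":
    lengths = [512, 128, 384, 256, 640, 64, 448, 192]
    for pack in pack_stream(lengths, bucket_size=4, max_tokens=1024):
        print(pack, sum(pack), round(pack_cost(pack), 1))
```

larger buckets give the packer more freedom (better utilization, more uniform cost) but reorder more of the data. comparing `pack_cost` across packs shows how uneven the compute is even when token counts match, and `attention_fraction` gives a quick read on whether the quadratic term matters at your sequence length.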

I also observed that torch.compile won't fuse an activation into the previous matmul's epilogue. But I assumed the reason was that the inefficiency of the Triton GEMM offsets the gain from epilogue fusion (so torch.compile chooses not to fuse). It turns out to be false...

Take a peek at OHIF / Cornerstone, and it'll become clear why this wasn't too challenging for Claude. There's lots of open-source medical software. It's FDA clearance that limits its adoption. ohif.org

My annual MRI scan gives me a USB stick with the data, but you need this commercial Windows software to open it. Ran Claude on the stick and asked it to make me an HTML-based viewer tool. This looks... way better.