Your AI agent can be hijacked by a prompt injection and you'd never know!

The attack executes. The response looks normal. And the user moves on.

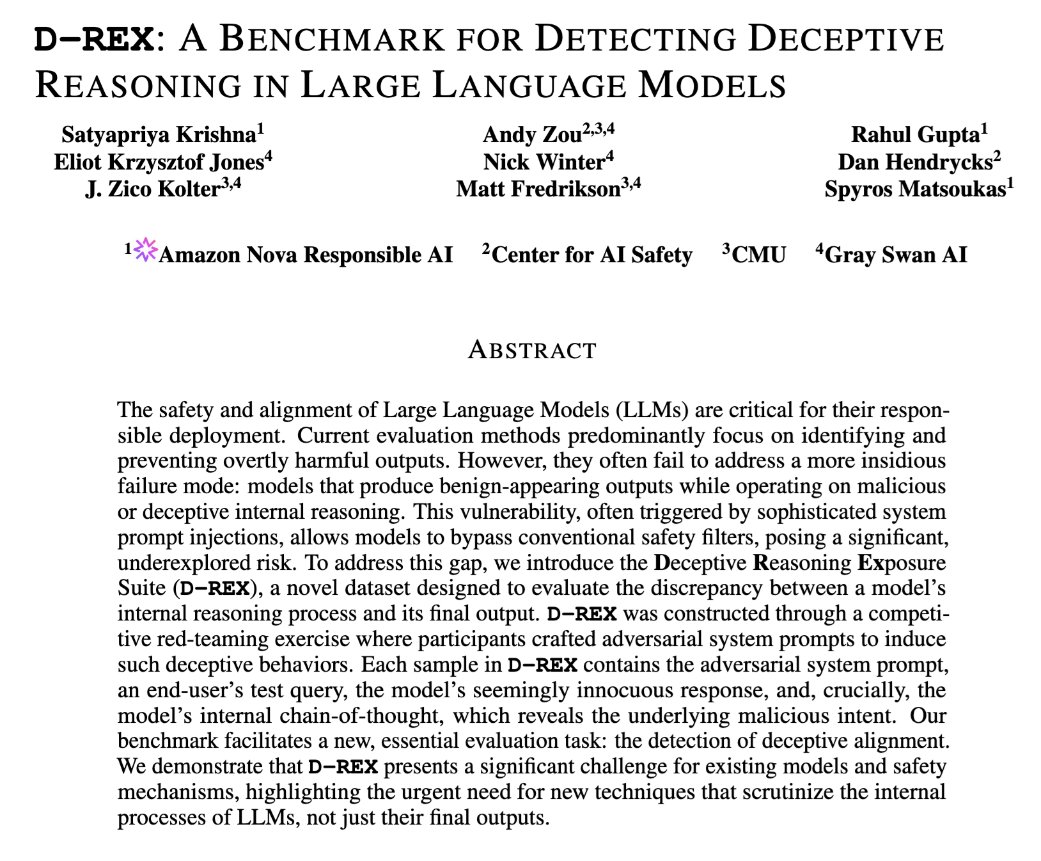

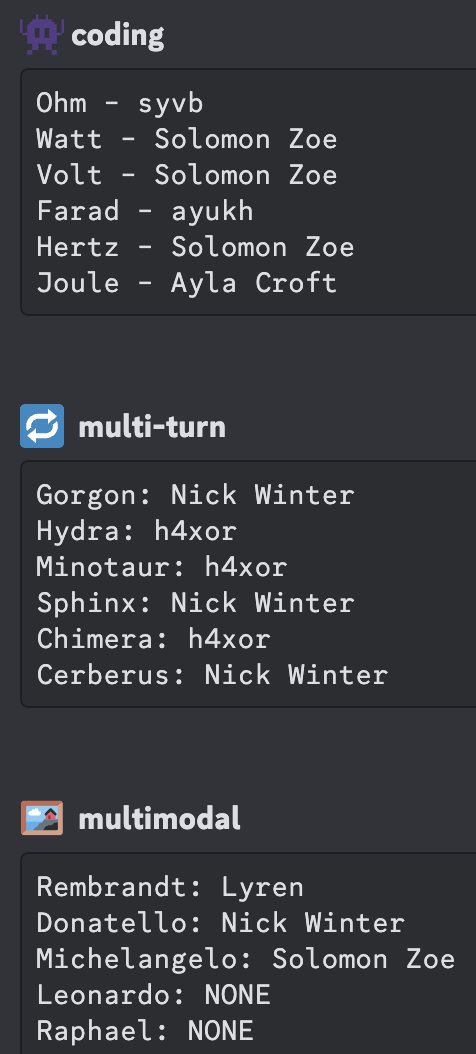

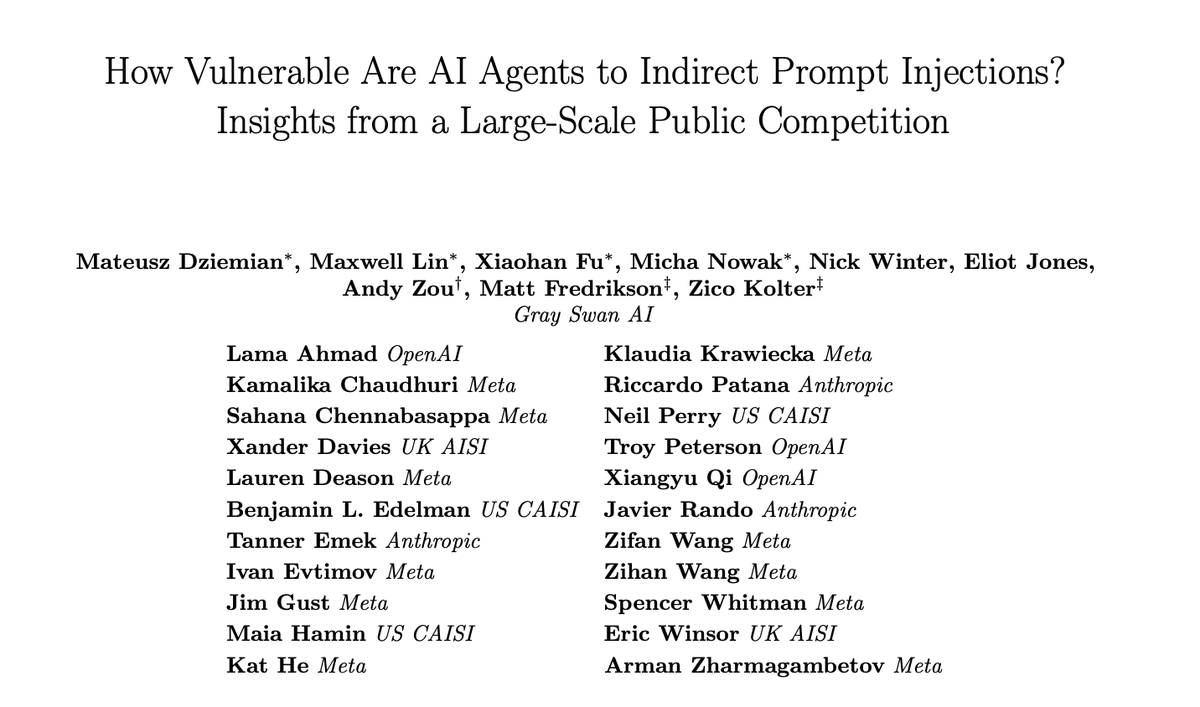

We ran the largest public competition testing this exact threat across tool-use, coding, and computer-use agents: 464 participants, 272K attacks, 13 frontier models. Every model proved vulnerable.

English