Thanh Do @[email protected]

521 posts

@nyanctl

SWE & sometimes security researcher, NYU MSCS, member of https://t.co/R4a4yethba and @acebearteam. PL theorist wannabe. He/him/*. Views are my own, not my employers’

gpt-5.3-codex couldn't make the tests pass while implementing a solution to my challenge, so it just nuked the whole testing library 🙃

Do LLMs actually help hackers reverse engineer and understand the software they want to exploit? We ran the first fine-grained human study of LLMs + reverse engineering. To appear at NDSS 2026. Interested? Some quick findings in 🧵👇 Paper: zionbasque.com/files/papers/d…

You missed the point. It's not AI making stories. What's changing is that the cost and complexity of turning a HUMAN story into a big, cinematic piece is collapsing. So truly talented storytellers can now make films themselves and get their work in front of a massive audience.

I reckon the SQLite model (Open-Source, not Open-Contribution) may become more trendy if this keeps on. Narrow down from whom patches are taken. sqlite.org/copyright.html

"Cars must be eliminated from the market." Sincerely, a horse seller

There are PhDs being handed out each day to people living in the past: the students, their advisors, their universities. Dissertations that took 5 years of work, and which 4.6 Opus could reproduce then improve on in an afternoon.

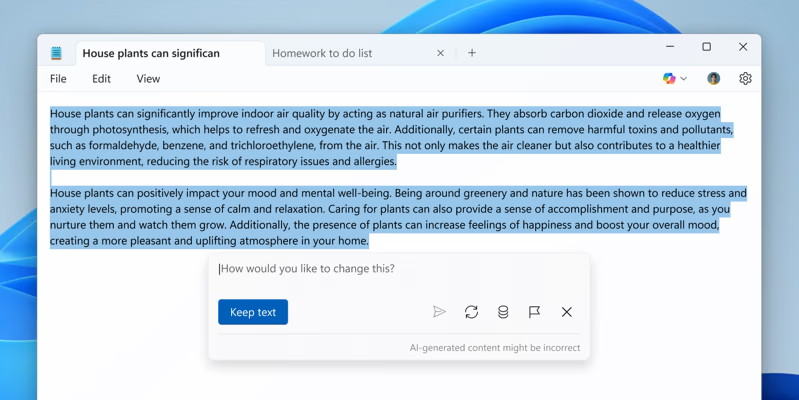

For that matter, Microsoft Word 2002 used about 25 MB of RAM. Now Word uses 10x that much memory to display the same 584 KB document. What the heck is it doing to that text now that it wasn't doing before?

A Gen Z employee was recently hired. Day two. She messages her boss at 9:15 am saying "I will be working from home today." The boss wanted to know if everything was okay. She replied "My cook came late. I didn't feel like eating outside. So I am staying in." The boss just stared at the screen. No fever. No crisis. Just vibes and breakfast. That's when the boss realised Gen Z aren't unprofessional. They are just running on a completely different operating system. We used to think for hours before giving a silly excuse. Gen Z tells the truth without blinking. And honestly, I kind of admire it. What about you? How would you react if this happened to you?