onnxruntime

282 posts

@onnxruntime

Cross-platform training and inferencing accelerator for machine learning models.

Joined September 2018

42 Following · 1.4K Followers

ONNX Runtime & DirectML now support Phi-3 mini models across platforms & devices! Plus, the new ONNX Runtime Generate() API simplifies LLM integration into your apps. Try Phi-3 on your favorite hardware! Read more: onnxruntime.ai/blogs/accelera… #ONNX #DirectML #Phi3

Run PyTorch models in the browser, on mobile and desktop, with #onnxruntime, in your language and development environment of choice 🚀 onnxruntime.ai/blogs/pytorch-…

onnxruntime retweeted

Developers, don't overlook the power of Swift Package Manager! It simplifies dependency management and promotes modularity. Plus, exciting news: ONNXRuntime just added support for SPM! #iOSdev #SwiftPM #ONNXRuntime

#ONNX Runtime saved the day with our interoperability and ability to run locally on-client and/or cloud! Our lightweight solution gave them the performance they needed with quantization & configuration tooling. Learn how they achieved this in this blog!

cloudblogs.microsoft.com/opensource/202…

📢 This new blog by @tryolabs is awesome! Learn how to fine-tune an NLP model and accelerate it with #ONNXRuntime!

Tryolabs @tryolabs

Maximize the power of LLMs! 💬 Our step-by-step guide covers fine-tuning for specific NLP tasks w/ GPT-3, OPT, & T5. We shared everything from building custom datasets to optimizing inf time with @huggingface 🤗Optimum and @onnxai.🚀 bit.ly/3DqLXxb #LargeLanguageModels

Join us live TODAY! We will be talking to Akhila Vidiyala and Devang Aggarwal on AI Show with Cassie! We will show how developers can use #huggingface #optimum #Intel to quantize models and then use #OpenVINO for #ONNXRuntime to accelerate performance.

👇

aka.ms/aishowlive

onnxruntime retweeted

We are seeking your input to shape the ONNX roadmap! Proposals are being collected until January 24, 2023 and will be discussed in February.

Submit your ideas at forms.microsoft.com/pages/response…

onnxruntime retweeted

Imagine the frustration of, after applying optimization tricks, finding that the data copying to GPU slows down your "MUST-BE-FAST" inference...🥵

🤗 Optimum v1.5.0 added @onnxruntime IOBinding support to reduce your memory footprint.

👀 github.com/huggingface/op…

More ⬇️

onnxruntime retweeted

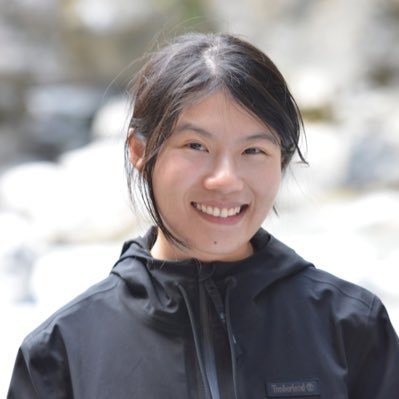

Want to use TensorRT as your inference engine for its speedups on GPU but don't want to go into the compilation hassle? We've got you covered with 🤗 Optimum! With one line, leverage TensorRT through @onnxruntime! Check out more at hf.co/docs/optimum/o…

📣The new version of #ONNXRuntime v1.13.0 was just released!!!

Check out the release note and video from the engineering team to learn more about what was in this release!

📝github.com/microsoft/onnx…

📽️youtu.be/vo9vlR-TRK4

onnxruntime retweeted

Finally tokenization with Sentence Piece BPE now works as expected in #NodeJS #JavaScript with tokenizers library 🚀! Now getting "invalid expand shape" errors when passing text tokens' encoded ids to the MiniLM @onnxruntime converted @MSFTResearch model huggingface.co/microsoft/Mult…

Loreto Parisi @loretoparisi

Sentence piece vocabulary and merge files generated, some minor issues occurring, hopefully @huggingface can help 🙏github.com/huggingface/to…

onnxruntime retweeted

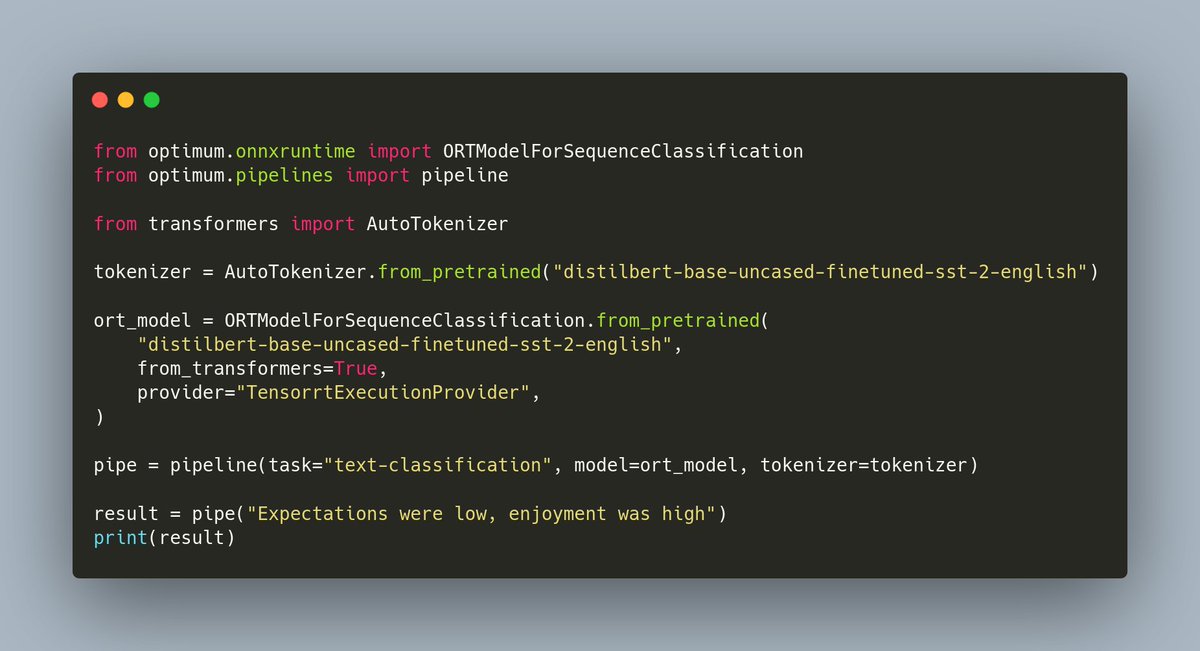

🏭 The hardware optimization floodgates are open!🔥

Diffusers 0.3.0 supports an experimental ONNX exporter and pipeline for Stable Diffusion 🎨

To find out how to export your own checkpoint and run it with @onnxruntime, check the release notes:

github.com/huggingface/di…

onnxruntime retweeted

@jfversluis What about a video on ONNX runtime?

Here is the official documentation devblogs.microsoft.com/xamarin/machin…

And MAUI example:

github.com/microsoft/onnx…

onnxruntime retweeted

The natural language processing library Apache OpenNLP is now integrated with ONNX Runtime! Get the details and a tutorial explaining its use on the blog: msft.it/6013jfemt #OpenSource

In this article, a community member used #ONNXRuntime to try out the GPT-2 model, generating English sentences from Ruby:

dev.to/kojix2/text-ge…

onnxruntime retweeted

Come join us for the hands-on lab (September 28, 1-3pm) to learn about accelerating your ML models via ONNX Runtime frameworks on Intel CPUs and GPUs. Some surprise goodies as well! #IntelON #iamintel #intelarc @IntelGraphics @IntelSoftware @gfxlisa

intel.com/content/www/us…

San Jose, CA 🇺🇸