S V

101 posts

@open_parens

Love all science, particularly CS, Math, SWE. Previously: SWE @Google NYC, @IITKgp. Opinions own. Not evil.

Stages of life

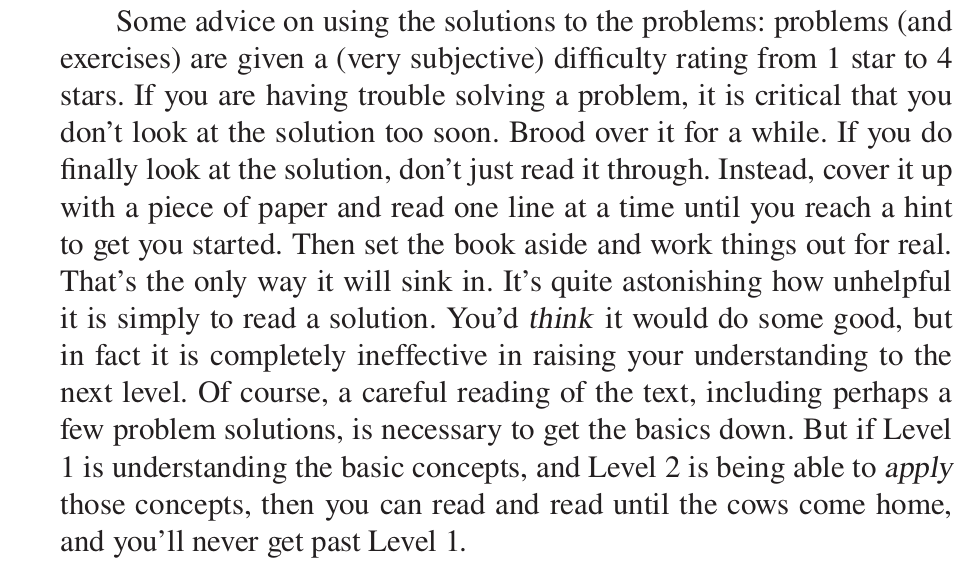

Students who took notes by hand scored ~28% higher on conceptual questions than laptop note-takers. Writing forces your brain to process and compress ideas instead of copying them.

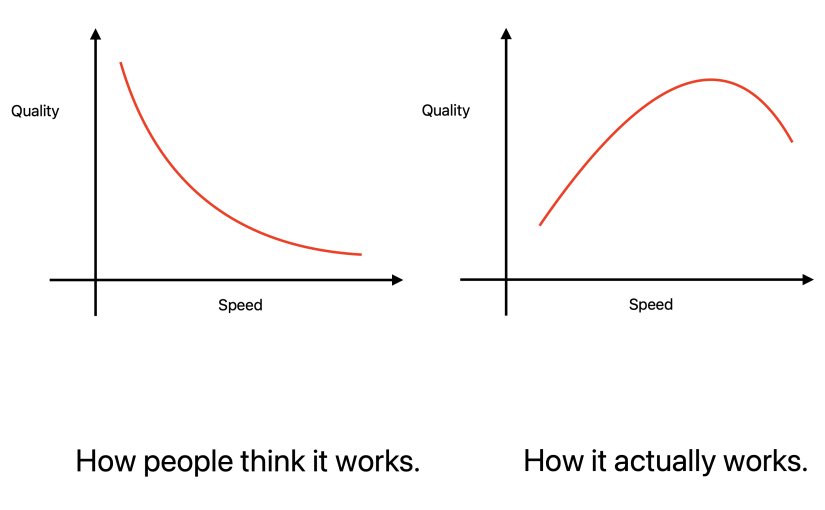

im fully convinced that LLMs are not an actual net productivity boost (today). they remove the barrier to get started, but they create increasingly complex software which does not appear to be maintainable. so far, in my situations, they appear to slow down long term velocity

The token cost to build a production feature is now lower than the meeting cost to discuss building that feature. Let me rephrase. It is literally cheaper to build the thing and see if it works than to have a 30 minute planning meeting about whether you should build it. It’s wild when you think about it. This completely inverts how you should run a software organization. The planning layer becomes the bottleneck because the building layer is essentially free. The cost of code has dropped to essentially 0. The rational response is to eliminate planning for anything that can be tested empirically. Don’t debate whether a feature will work. Just build it in 2 hours, measure it with a group of customers, and then decide to kill or keep it. I saw a startup operating this way and their build velocity is up 20x. Decision quality is up because every decision is informed by a real prototype, not a slide deck and an expensive meeting. We went from “move fast and break things” to “move fast and build everything.” The planning industrial complex is dead. Thank god.

At the medal ceremony last night, the great Harsha Bhogle had a line each on every player - Indian and Kiwi - every line editorially nuanced and optimum! He could’ve easily just called out their names without adding anything but great broadcasters do the little things well! Coz they care enough…. So much to learn from the great man! instagram.com/reel/DVojQBsEn…

Introducing Code Review, a new feature for Claude Code. When a PR opens, Claude dispatches a team of agents to hunt for bugs.

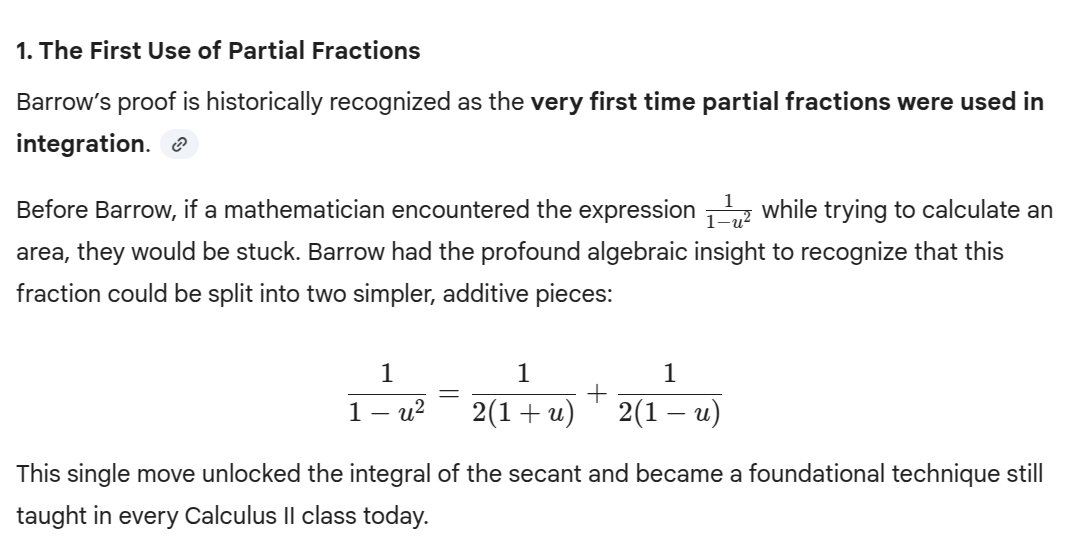

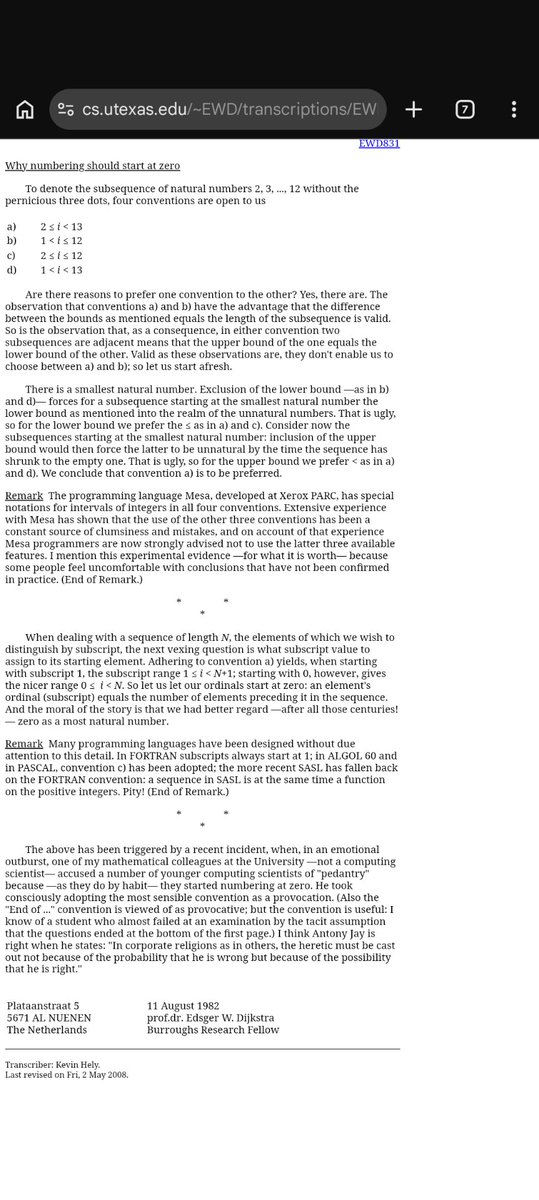

we treat zero-indexed arrays like a law of programming. but it actually started as a small practical decision in early computer systems. In this article, I break down the story behind it and explain why that tiny decision still shapes how programmers write code today.

Niels Bohr was a bit of a meathead: he played football and drank copious amounts of beer. He was also very slow and couldn't follow the plots of movies. But his slowness masked incredible insightfulness of the sort that occasionally flummoxed his colleagues: