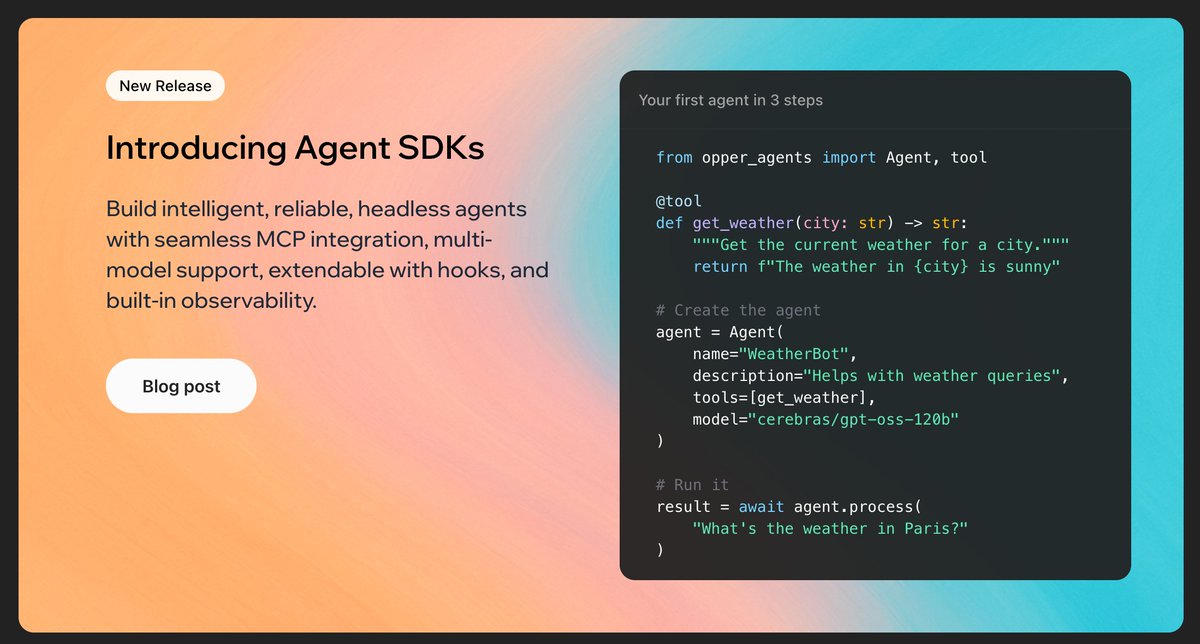

Claude Cowork now works with 300+ models via Opper.

Route through EU-hosted inference, add fallbacks, or swap to a cheaper model mid-session — same Cowork window, different routing under the hood.

Setup takes 3 fields. Guide: opper.ai/blog/claude-co…
