Ought

@oughtinc

Automate & scale open-ended reasoning. Building @elicitorg, an AI research assistant. Demos: https://t.co/8UGlbJ1eVE · Jobs & product roadmap: https://t.co/oDFh35ACEG

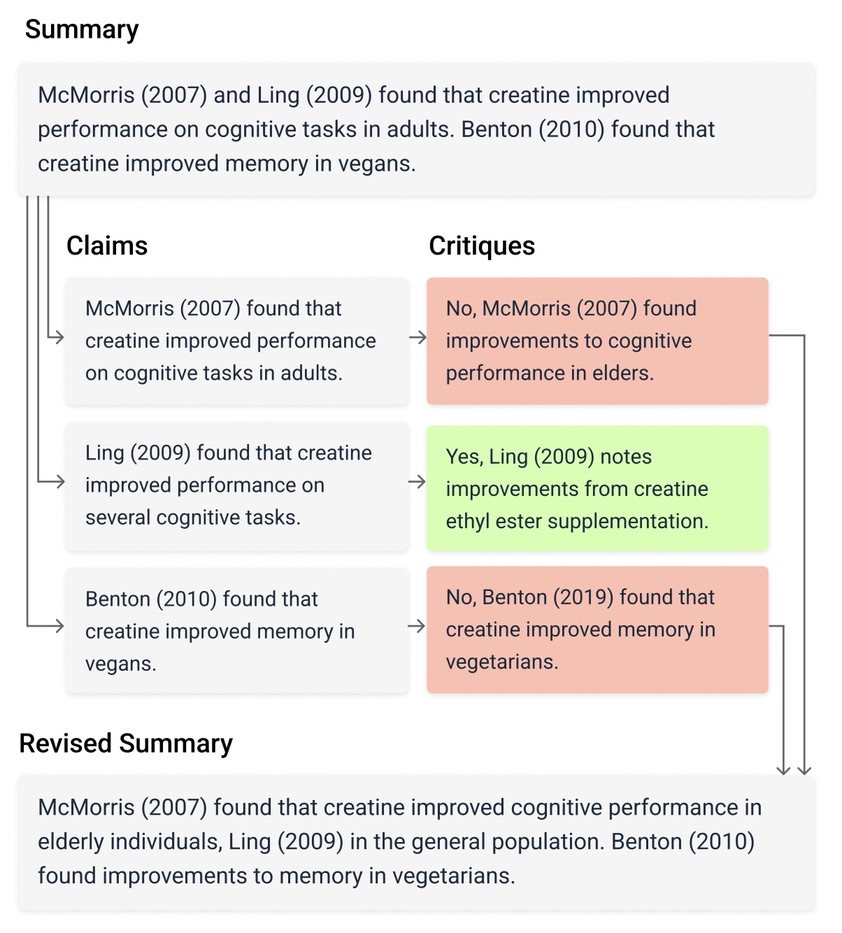

1/ Can large language models detect and correct their own hallucinations when summarizing academic papers? In our new paper, we explore a new method we call factored verification to help answer this question. Blog: blog.elicit.com/factored-verif…

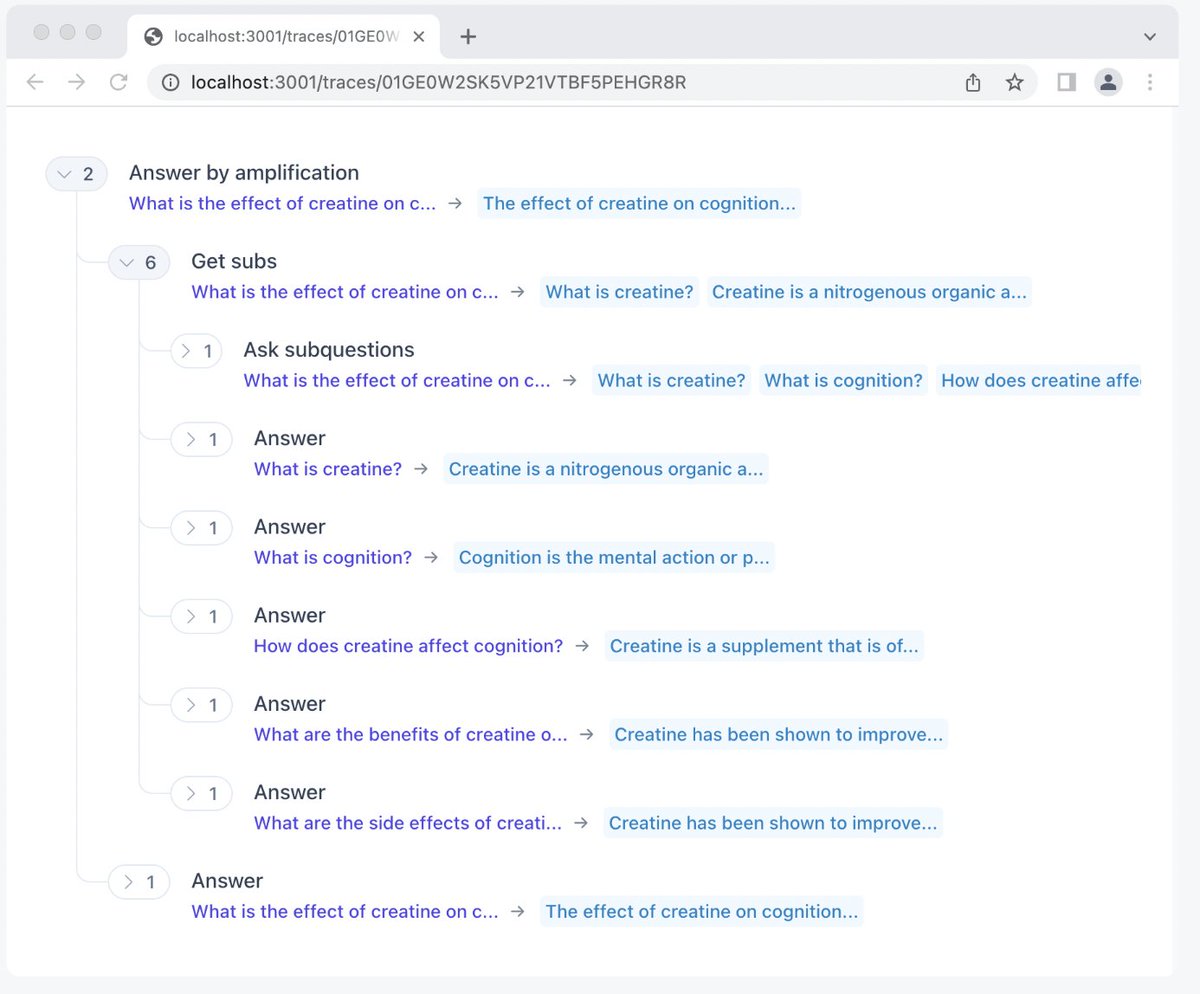

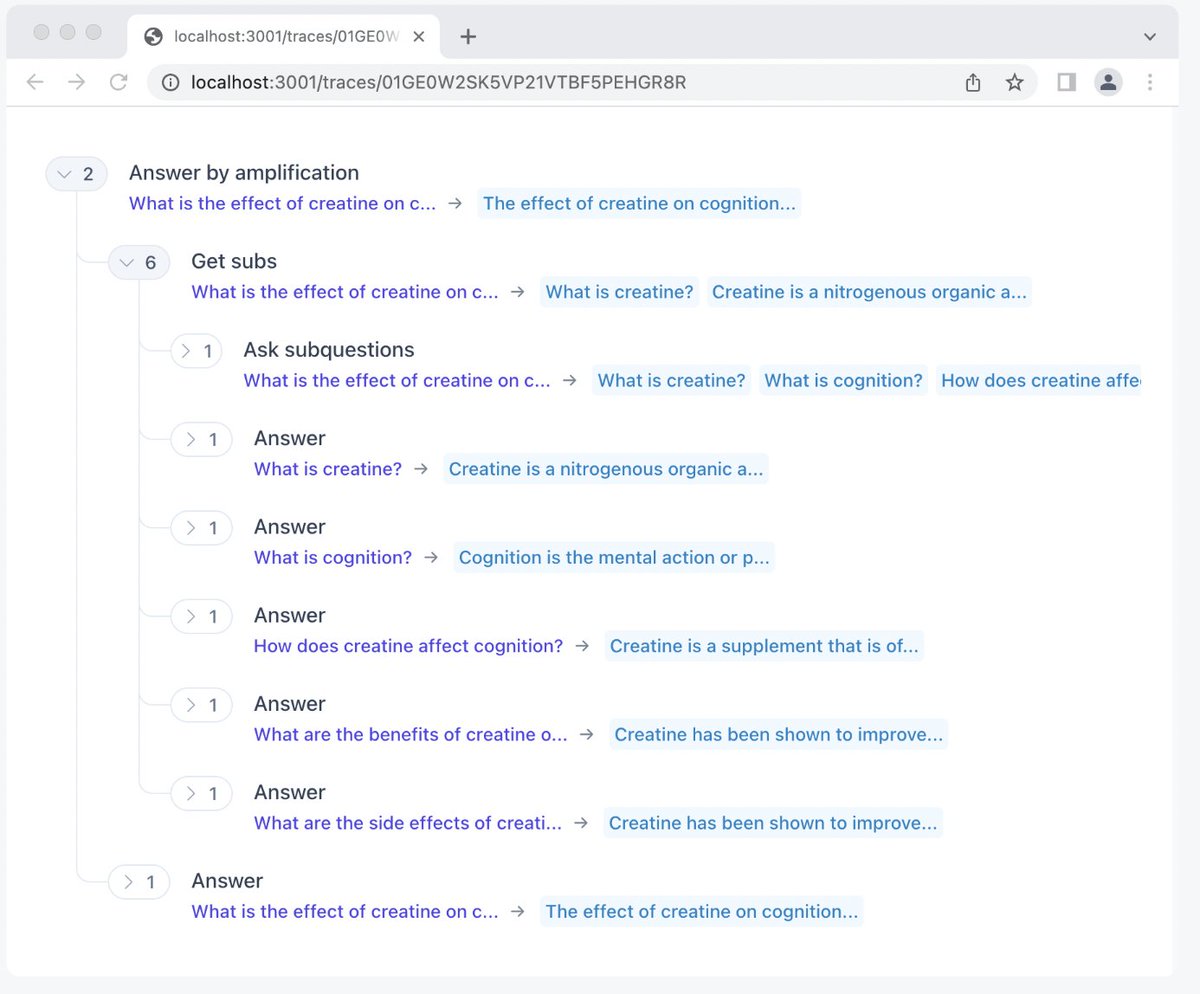

1/ Announcing our spinoff from @oughtinc into a public benefit corporation, our $9 million seed round, and a much more powerful Elicit! This new Elicit takes the components of the popular literature review workflow and extends them to automate more research workflows.
