Gaurav Ramesh

2.1K posts

@outofdesk

Reflections and writings @ https://t.co/f5J9701yAb

Sunnyvale, CA. Joined October 2008

598 Following, 205 Followers

Pinned Tweet

My thoughts on "Your harness, your memory" from the LangChain Blog

The post argues that memory is a critical part of the harness, so anyone selling a harness without memory baked into it is creating a lock-in that's not visible just yet. It works today because memory is not yet widely understood and most current interactions with LLMs are stateless. But as agent personality, personalization and long-term memory become more important, people and organizations need to own their memory, own their harness.

The dominant players, Anthropic, Google, OpenAI, will want to own the memory/harness - that's what you get from features like Claude Managed Agents. Not only is the model a black box, but so is the entire persisted state that makes a model useful.

It reminds me of the same problem at the semantic layer: most cloud data warehouses and BI tools have had their own semantic layers, which is what makes analytics tick. Vendors would want it to be on their stack. LookML is a good example and is the most attractive layer of Looker. OSI, Open Semantic Interchange, is looking to change that, so you can take the semantic layer with you to any warehouse/BI tool vendor you wanna use. At least that's the promise.

But memory and agent harness are a tighter form of lock-in than the semantic layer. The semantic layer was largely static, defined by humans and updated occasionally. Memory is deeply dynamic in nature. It's seeded by you, but takes on a life of its own over time.

Deep Agents is LangChain's answer - an open-source agent harness that works with other open-source projects like LangChain and LangGraph. It's "model-agnostic," which is better than the closed ecosystems of the bigger players, but it's not as open as the author makes it sound. Deep Agents is built on the Lang*(Chain/Graph) stack, which, although open-source, is all owned by the same company. It's not a truly interoperable solution - it's an emergent moat, where the lock-in forms organically rather than by plan, as agent performance is increasingly tied to whoever controls the "frameworks, runtimes, and harnesses", the distinction Harrison himself draws among their offerings.

How it'll likely play out: You use a model provider with Deep Agents. You wanna switch models tomorrow - you can keep the harness, switch the models. Good. But your agent harness is only as good as LangChain and LangGraph, which define the primitives and the persistence/memory layer respectively. Memory also encompasses the logs generated from agent behavior, making it dependent on LangSmith, LangChain's commercial observability product. Over time, the harness works - or works better - only with the Lang ecosystem, which creates harness lock-in. You can switch model providers, but can't switch your harness ecosystem. How the agent summarizes and compacts information, what it remembers or discards - all of it is at the mercy of the Lang stack.

Although it always comes with the promise of self-hosting and customization for your needs, most organizations will not or cannot do it. This has always been true for critical infrastructure, but is especially true in the LLM ecosystem given the novelty, the limited understanding most organizations have of how agents work under the hood, and the speed at which this space is evolving.

Managed solutions are likely the end-game.

So the play here seems to be to start from open-source - rather than closed-source like the dominant players - to gain market share, and convert that into structural lock-in, after significant customer adoption.

Gaurav Ramesh retweeted

When I think about my writing as something that I'd like my kids to read when they grow up, suddenly the stakes become much higher. The topics I write about, what I want to say, how I write it, how much time I spend on each - the whole mindset changes.

Surprisingly, it also helps shift focus from thinking/worrying about what works on the Internet to what, if anything meaningful, I have to say.

As I interact more with Claude, I'm learning a few phrases in English that are good, novel to me, sound deep, but have an AI smell. "Worth sitting with" is one of those. "That insight is worth sitting with" .. "That tension is worth sitting with."

I hadn't encountered them much before LLMs, so their prevalence in my conversations now probably speaks to the kind of topics I read/learn about now, those that have gotten easier to do with LLMs - philosophy, sociology, anthropology, neuroscience, biology, psychology, and research papers.

@itsolelehmann Obsidian just makes it easy to work with markdown files.

At the end of the day, you need files over app, as @kepano calls it, and @obsdmd is an embodiment of that philosophy.

@stevemagness When you say most adults can't sprint, what do you mean exactly? I'm sure they are trying to run as fast as they can, even if they are relatively slow, but what exactly makes it a sprint? Genuinely want to know..

Most adults can't sprint. They lose the capacity to.

Their body limits them to striding it because after years or decades of never doing it, the risk is too high.

One of the best things for your health and longevity is to maintain the capacity to sprint.

x.com/PicturesFoIder…

non aesthetic things @PicturesFoIder

Shelly-Ann Fraser-Pryce, 8x Olympic medalist, obliterating other parents at a school event.

@vasantshetty81 The mass layoffs are because of AI, yes, but not because of the capabilities of the models, or that AI can do what those people could.

It's more to make room in the budget for spending on AI.

Oracle in India laid off 12,000 people in one go, and I was just thinking, what’s happening?

Is it all because of AI? Mostly, yes!

If you look at the rate at which AI is progressing and making strides every few weeks, its capabilities are definitely becoming 2x or 3x every three months. It’s compounding at a rapid pace. And that just shows that a lot of business processes, even legacy ones, are going to be affected by AI in one way or another.

More likely, we will see even complex workflows in Fortune 500 companies being handled by AI. There is a high chance that almost every tech job will be impacted.

The only way to handle this is for professionals in tech to quickly ramp up, develop new skills, and use their domain knowledge to become very different individuals. Agency may not always be cultivated easily, but when circumstances force change, people do adapt.

This feels like an inflection point.

People who believed their domain knowledge made them irreplaceable should realize that AI is going to come after them as well. It is better to prepare, harness AI, and ride this wave as long as it lasts.

Also, in a few years, businesses that never had any tech exposure may come under the tech radar big time. It is now much easier to build for every kind of business, even those with smaller TAMs. No matter the size, something can be built, and efficiencies can be gained.

This means a lot more businesses will become digital first, AI first, and that is where the opportunity lies.

People who are affected should think about switching domains if needed, learning new skills, and unlearning things that built their careers so far. If not, they should consider alternate paths where human effort will still be relevant for the next 10 to 15 years.

Otherwise, there is no way out.

What I see is that large companies that employed thousands in India will continue to shrink their workforce. This is not going to reverse. It will only continue.

Better to be prepared for it.

@Gregorein How else in the future might one found a company backed by YC that optimizes websites?

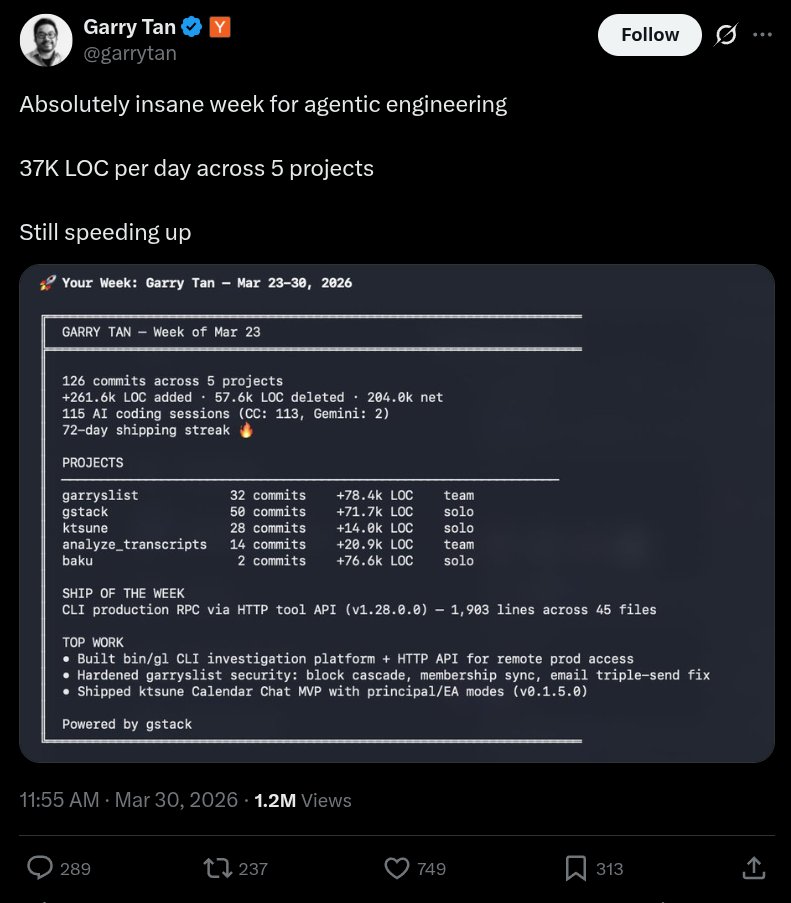

so... I audited Garry's website after he bragged about 37K LOC/day and a 72-day shipping streak.

here's what 78,400 lines of AI slop code actually looks like in production.

a single homepage load of garryslist.org downloads 6.42 MB across 169 requests.

for a newsletter-blog-thingy.

1/9🧵

Garry Tan @garrytan

Absolutely insane week for agentic engineering. 37K LOC per day across 5 projects. Still speeding up.

@sawyerhood Isn't this what Cloudflare did with Vinext? blog.cloudflare.com/vinext/

i'm seeing a lot of fake news around this:

- no you cannot legally redistribute this

- no you cannot modify the source and redistribute it (that isn't how copyright works)

Chaofan Shou @Fried_rice

Claude code source code has been leaked via a map file in their npm registry! Code: …a8527898604c1bbb12468b1581d95e.r2.dev/src.zip

@ruchirkanakia If by successful you mean earning in dollars to send money back to India?

@akothari @KotakBankLtd I can confirm it's the same with @ICICIBank, unfortunately! Been going back and forth with them for the last 3-4 months to secure a loan, and eventually gave up.

PSA: If you’re an Indian living overseas, think twice before opening a @KotakBankLtd account. If you already have one, it may be worth considering alternatives.

Speaking from my own experience over the past two years, even basic account changes have required excessive physical paperwork. Each round seems to uncover “one missing document” that was never mentioned earlier.

Moving funds or closing the account has been equally difficult, often attributed to “RBI regulations.” Escalations typically bring in more managers, but not much progress beyond initial apologies.

I’ve been a customer for a decade and have long respected @udaykotak as an entrepreneur, which makes this especially disappointing. I hope the bank can course correct and return to a higher standard of customer experience.

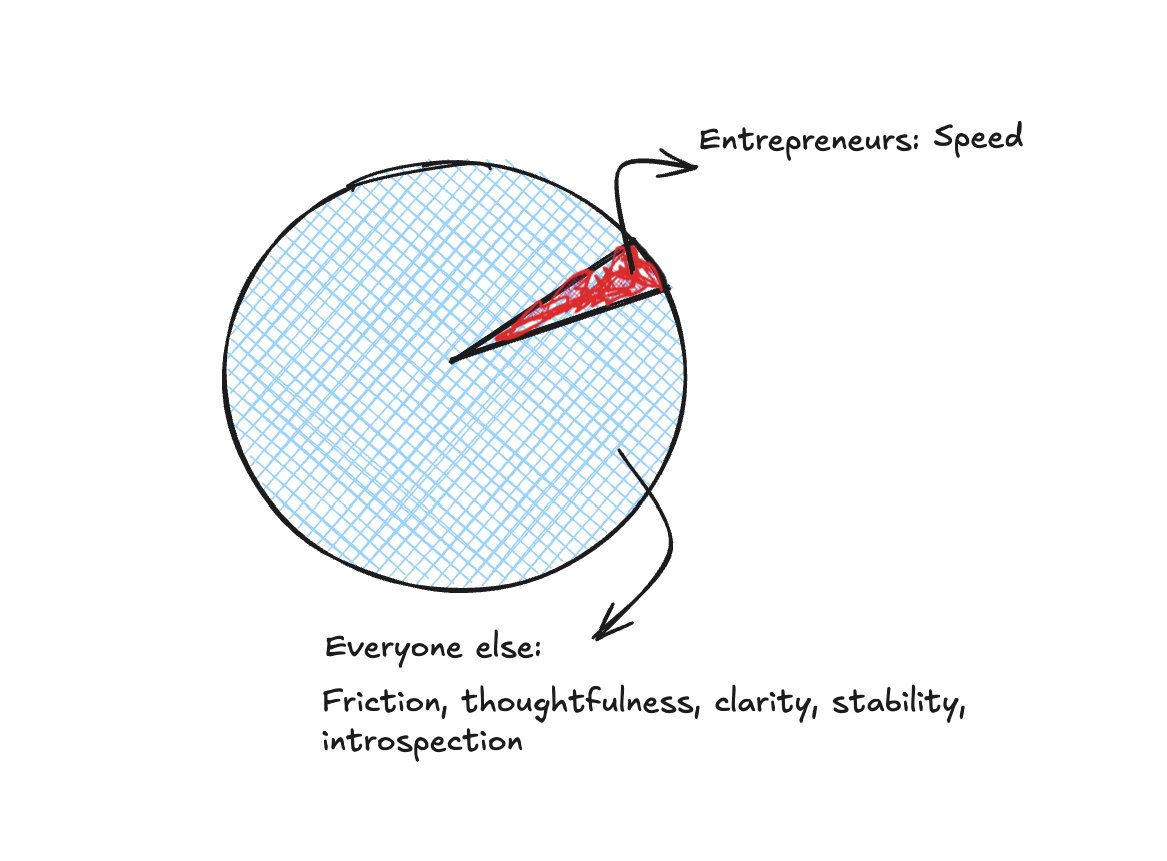

I love visuals for thinking and communication too. I wrote about it here outofdesk.blog/thinking-outsi….

But I don't buy the usual arguments for why.

"Thinking is faster than writing, so use low-latency mediums." Okay, but the friction IS the point. Slow is where the learning happens.

"We weren't evolutionarily built to read or write." So what? We weren't built to fly planes either.

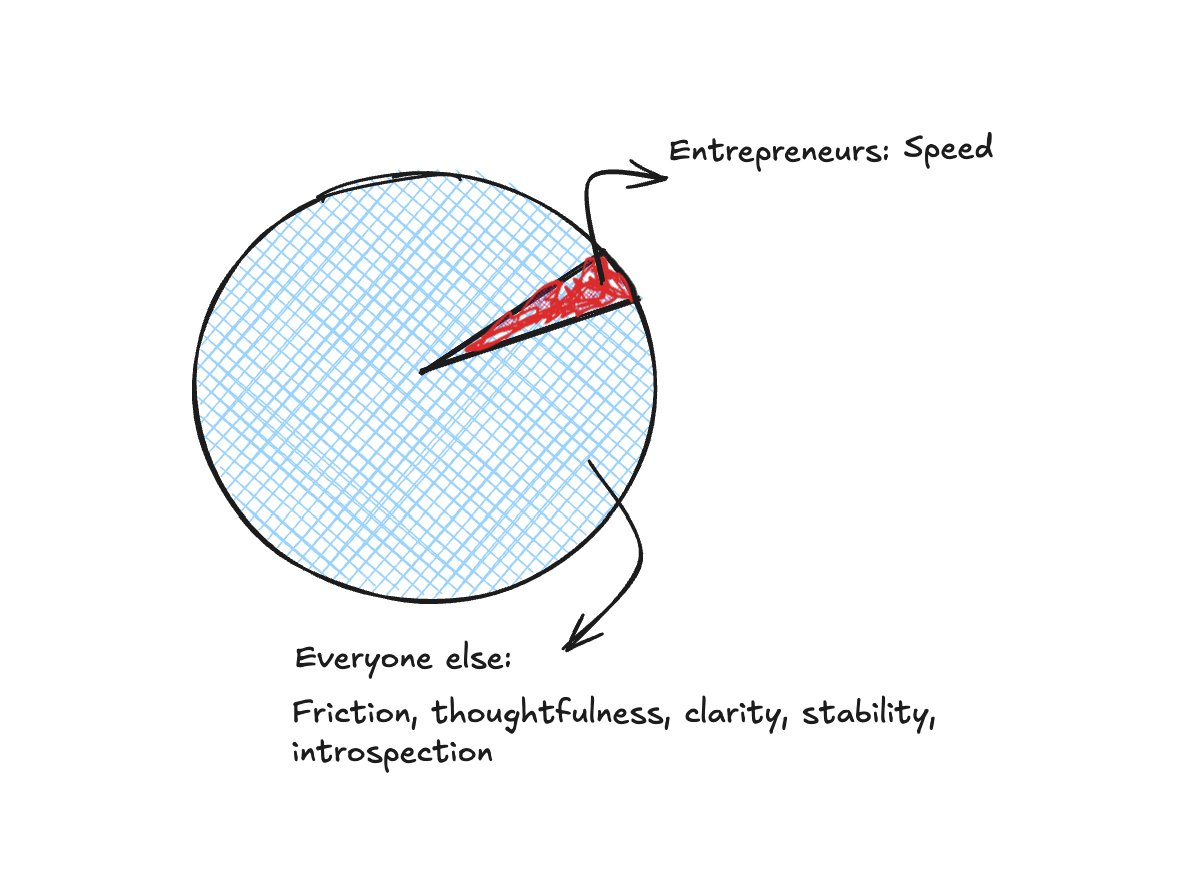

Another day, another essay about "speed as a virtue." The gist is essentially this: X is slower. Y is faster. Hence, Y is better.

I'm increasingly seeing two camps emerge in the age of AI (along this one dimension of speed): one that favors friction, thoughtfulness, clarity, and stability, arguing they are "features, not bugs", and another that favors speed above all else.

Entrepreneurs and salespeople fall in the latter bucket.

Grant Lee @thisisgrantlee

Another day, another essay about "speed as a virtue." The gist is essentially this: X is slower. Y is faster. Hence, Y is better.

I'm increasingly seeing two camps emerge in the age of AI (along this one dimension of speed): one that favors friction, thoughtfulness, clarity, and stability, arguing they are "features, not bugs", and another that favors speed above all else.

Entrepreneurs fall in the latter bucket.

x.com/thisisgrantlee…

In one of the @dwarkesh_sp podcasts (I think it was with Sutton), he mentioned he used Gemini Deep Research to learn all about the history of RL. Although it was an ad, and he was paid to say that, if it's true, he'd do much better with Claude deep research!

I thought Gemini was good until I tried Claude. Haven't gone back since!
