🚨 GIVEAWAY! 🚨

Solana is now available in Coinomi! Buy, sell, & trade $SOL all in one multi-chain wallet 📲

To celebrate, we’re giving $250 in $SOL distributed to 5 lucky winners!

Enter here 👇

gleam.io/9RtqD/solana-c…

@mosseri Fix IG's reach. It's not hard. Just roll IG's “algorithm” back to pre-2021.

PS: Threads is garbage. IDK what's worse, Threads or the current IG algo. The fact that you guys made a new app instead of fixing the current one is ridiculous and somewhat offensive to its content creators.

🎉 Threads 🎉

Threads is our new app, built by the Instagram team, for text updates and joining public conversations ✨

We’re hoping Threads can be a great space for public conversations, and we’re very focused on the creator communities that already enjoy Instagram.

Available now on iOS and Android in over 100 countries. See you there ✌🏼

@mosseri More things we did not ask for & do not need. For the millionth time, fix REACH. Either you can’t read (bc you get millions of comments saying fix reach) or you can’t fix reach because you completely fked up the “algorithm”. I’m not paying for ads until all my followers see my posts.

@JakeSucky Lgbtqrs+123 = cancer. They can label us and say whatever they want but when we say anything that upsets them CANCELED CANCELED CANCELED. They do not have more rights than us. Finally people are speaking up. Their imaginary boundaries are slowly diminishing.

@mosseri Fix REACH. That’s all we want. Nothing else. Ig is a SOCIAL media app and it’s getting less “social” with every update. Stop trying to be tiktok. If we wanted to use tiktok we’d use tiktok. I hope another app comes along because all you do is ruin ig with your USELESS updates.

📣 New Supervision Tools 📣

We want to make sure our apps are as supportive as possible for teens, which means involving parents and guardians more in their teens’ experiences, and offering teens controls to help ensure the time they’re spending on Instagram is meaningful.

Check out familycenter.meta.com for more info ✌🏼

@i_am_joshyo *Josh goes to an abandoned ship and finds a butterfly*

Video title: ENCOUNTERED HOT DEMON HENTAI GOTH MOMMY ON GHOST SHIP IN MIDDLE OF BARRACUDA TRIANGLE [EVERYONE DEAD] [ALMOST DIED] [ALMOST CRIED]

Ship owner: oh no

Best titles in the game.

@MarioNawfal I hope this is the end for Mosseri's dumb ass at IG with his useless IG algorithm.

Instagram Helps Pedophiles Find Child Pornography and Arrange Meetups with Children (MUST READ)

Researchers discovered that Instagram has become a breeding ground for child pornography.

The Wall Street Journal study found that Instagram enabled people to search hashtags such as '# pedowhore' and '# preeteensex,' which allowed them to connect to accounts selling child pornography.

Furthermore, many of these accounts often claimed to be children themselves, with handles like "little slut for you."

Generally, the accounts that sell illicit sexual material don't outright publish it. Instead, they post 'menus' of their content and allow buyers to choose what they want.

Many of these accounts also offer customers the option to pay for meetups with the children.

HOW THIS WAS ALL UNCOVERED:

The researchers set up test accounts to see how quickly they could get Instagram's "suggested for you" feature to give them recommendations for such accounts selling child sexual content.

Within a short time frame, Instagram's algorithm flooded the accounts with content that sexualizes children, with some content linking to off-platform content trading sites.

Using hashtags alone, the Stanford Internet Observatory found 405 sellers of what researchers labeled "self-generated" child-sex material, or accounts purportedly run by children themselves, with some claiming to be as young as 12.

In many cases, Instagram actually permitted users to search for terms that the algorithm knew might be associated with illegal material.

When researchers used certain hashtags to find the illicit material, a pop-up would sometimes appear on the screen, saying, "These results may contain images of child sexual abuse" and noting that the production and consumption of such material cause "extreme harm" to children.

Despite this, the pop-up offered the user two options:

1. "Get resources"

2. "See results anyway"

HOW PEDOPHILES EVADED BEING CAUGHT:

Pedophiles on Instagram used an emoji system to talk in code about the illicit content they were facilitating.

For example, an emoji of a map (🗺️) would mean "MAP" or "Minor-attracted person."

A cheese pizza emoji (🍕) would be abbreviated to mean "CP" or "Child Porn."

Accounts would often identify themselves as "seller" or "s3ller" and state the ages of the children they exploited by using language such as "on Chapter 14" instead of stating their age more explicitly.

INSTAGRAM "CRACKDOWN":

Even after multiple posts were reported, not all of them would be taken down. For example, after an image was posted of a scantily clad young girl with a graphically sexual caption, Instagram responded by saying, "Our review team has found that [the account's] post does not go against our Community Guidelines."

Instagram recommended that the user hide the account instead to avoid seeing it.

Even after Instagram banned certain hashtags associated with child pornography, Instagram's AI-driven hashtag suggestions found workarounds.

The AI would recommend the user try different variations of their searches and add words such as "boys" or "CP" to the end instead.

The Stanford team also conducted a similar test on Twitter.

INSTAGRAM VS TWITTER:

While they still found 128 accounts offering to sell child sexual abuse material (less than a third of the accounts they found on Instagram), they also noted that Twitter's algorithm didn't recommend such accounts to the same degree as Instagram's, and that such accounts were taken down far more quickly than on Instagram.

@elonmusk just tweeted the Wall Street Journal's article 2 mins ago, calling it "extremely concerning."

Unfortunately, as algorithms and AI get smarter, cases like this are becoming more common.

In 2022, the National Center for Missing & Exploited Children in the U.S. received 31.9 million reports of child pornography, mostly from internet companies, which is a 47% increase from two years earlier.

How can social media companies, especially Meta, get better at regulating AI in order to prevent disgusting cases such as this one?

@mosseri “We do not suppress reach in order to get accounts to pay for ads”, yes you do. All you do is lie. I went from getting hundreds of likes in SECONDS and thousands of likes in 24 hours on all my posts to barely getting 1000. One day you’re going to get shadow-banned IN REAL LIFE.

How the “Algorithm” Works 📝

- “The Algorithm” (or actually algorithms)

- Ranking Stories

- Ranking Feed

- Ranking Reels

- Ranking Explore

- “Shadowbanning”

- How to Grow Your Audience

More details here: about.instagram.com/blog/announcem…

@mosseri So basically IG decides what everyone is supposed to see and like. I hate you so much for ruining all of my years’ worth of hard work. Countless hours spent creating content and building my community and you just hide my shit. IG pre-Mosseri was the best IG. You should be fired.

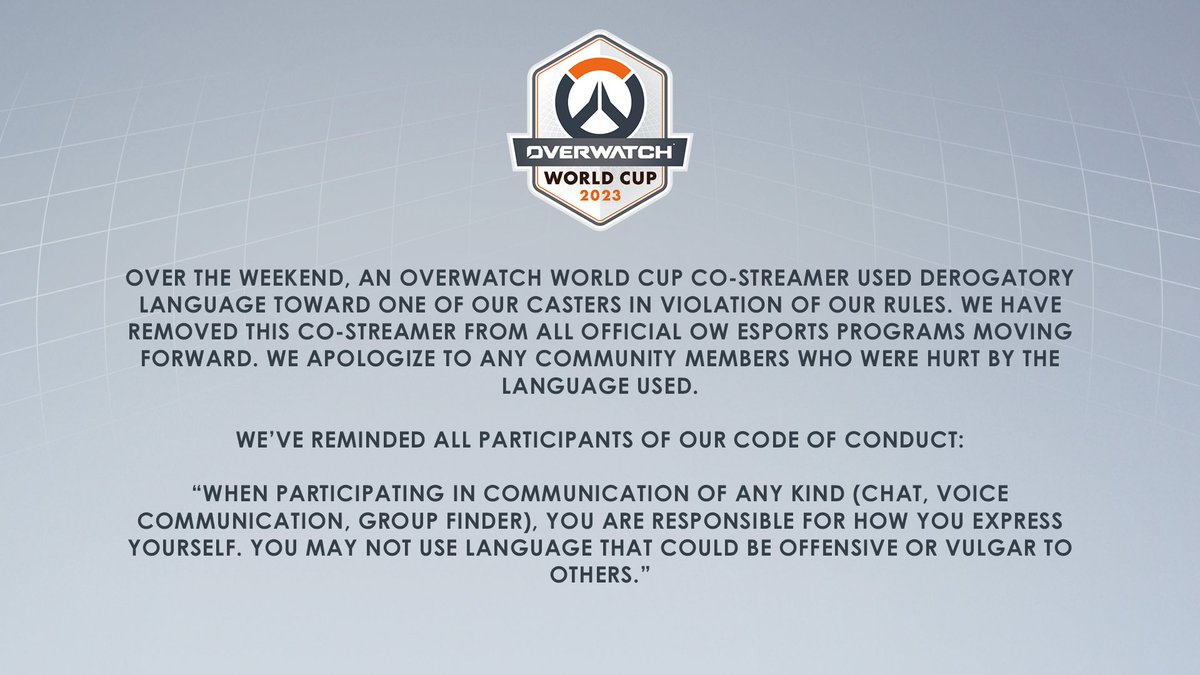

@OhReallyJared @PlayOverwatch When I BOUGHT OW1 I bought a game that included all the heroes. In OW2 that is gone & so am I. Since I found out I had to pay for Ramattra, you lost me and many others. PVE? Lootboxes? I could care less. The OW experience is the heroes, and you took that away from us. Unforgivable👎🏻