Jiangwei Pan

249 posts

@panjiangwei

Personalization algorithms @Netflix.

Joined April 2010

346 Following, 89 Followers

Jiangwei Pan retweeted

The University of Hong Kong (HKU) welcomes Professor Ngô Bảo Châu, recipient of the 2010 Fields Medal, to join the Department of Mathematics under the Faculty of Science, commencing June 2026. Professor Ngô earned global recognition for his groundbreaking proof of the fundamental lemma in the Langlands Programme, a monumental achievement that solved a three-decade-old mathematical challenge and was named one of Time magazine's Top 10 Scientific Discoveries of 2009.

The Langlands Programme, proposed by mathematician Robert Langlands in 1967, stands as one of mathematics' most ambitious theoretical frameworks, creating vital connections between number theory and group theory. For three decades, its fundamental lemma remained unproven until Professor Ngô's ingenious solution in 2009, which not only validated years of mathematical work but also opened new pathways within this profound theoretical structure.

In 2010, Professor Ngô made history by becoming the first Vietnamese mathematician awarded the Fields Medal – mathematics' highest honour for researchers under 40. Along with the Abel Prize, these awards are widely regarded as the discipline's equivalent of the Nobel Prize. His transformative contributions to number theory and representation theory have had far-reaching implications across mathematical physics. His distinguished honours include the Clay Research Award (2004), Oberwolfach Prize (2007), Sophie Germain Prize (2007), and election as a Member of the American Academy of Arts and Sciences (2012) and a Foreign Member of the French Academy of Sciences (2016).

read more: hku.hk/press/press-re…

#HKU #香港大學 #UniversityofHongKong

@TheGregYang The good news for RecSys is that most of the signals/patterns can be learned from most recent data (eg last 1 day).

Jiangwei Pan retweeted

@netflixsports @AlexHonnold Such a good answer. True inspiration!

“It’s a reminder that their time is finite and they should use it in the most meaningful way that they can. If you work really hard at it, you can do hard things if you try.”

@AlexHonnold on the message he hopes people around the world take away following #SkyscraperLIVE ❤️🙏

The @xAI team is working on providing For You tabs that are specific to topics.

For example, a “For You AI” that is focused only on artificial intelligence with no political rage bait.

This would be like automatically generated follow lists with content ranked by quality.

Jiangwei Pan retweeted

Every Bay Area engineer stereotype right now (with TC):

OpenAI: $XXM+, either working on coolest thing ever or the 156th AI product

Anthropic: $XXM+, devout AGI-pilled zealots everything the AI just stay pure

Meta: $XM+, cashing in the checks of a dysfunctional org while justifying value in the 73rd reorg this year

Google: $XM+, decides to code once in a while unless ambitious enough to become the 426th DeepMind offshoot shipping Gemini in X

AI Founder: $XM+ secondary, "our main differentiator here is focus. We're growing like 10x this year (from $100k arr). We might be the fastest to $1M ARR. Our team is so cracked dude you have no idea"

AI Startup engg: $200k, grinding 24/7 to prompt LLM do one thing. start over after new model release. "it's not just prompts bro.. we do RL and have like our own proprietary data" (17 rows)

Other BigTech: $500k+, worked tirelessly to be on the team that ships the new AI feature on a SaaS product no one thinks about, just learnt about "RAG"

Microsoft: $500k+, "chill bro ultimately the AI is only useful in Office. Did you know Excel is the most popular programming language? yeah dude Copilot is a game changer"

Amazon: $400k, just survived a PIP, "so do these AI jobs ask leetcode?"

Apple: $500k+, got the entire org's AI project canned but can't talk about it "ultimately there's no value in models, it's all gonna be on-device"

Elon-owned Co: $XM+, first 180 days, works 24/7 "I do this cause I love it. This is humanity's most important problem", next 180 days: "so like, LinkedIn reached out and it's pretty compelling (read: Elon doesn't hate it and I haven't seen my wife in months)"

Nvidia: $XXM+, "life's good."

Jiangwei Pan retweeted

The @ilyasut episode

0:00:00 – Explaining model jaggedness

0:09:39 – Emotions and value functions

0:18:49 – What are we scaling?

0:25:13 – Why humans generalize better than models

0:35:45 – Straight-shotting superintelligence

0:46:47 – SSI’s model will learn from deployment

0:55:07 – Alignment

1:18:13 – “We are squarely an age of research company”

1:29:23 – Self-play and multi-agent

1:32:42 – Research taste

Look up Dwarkesh Podcast on YouTube, Apple Podcasts, or Spotify. Enjoy!

@karpathy I wonder if humans simply use supervised learning to incorporate reward, eg with any sample (context, actions, reward), construct two SFT examples: (1) context, reward: actions (2) context, actions: reward. At inference, just generate from “context, max_reward”.
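
A minimal sketch of the construction described in the reply above, assuming plain-text prompts. The names `Sample`, `to_sft_examples`, and `inference_prompt`, and the `context:/reward:/actions:` template, are illustrative assumptions and do not come from the post.

```python
from dataclasses import dataclass


@dataclass
class Sample:
    context: str
    actions: str
    reward: float


def to_sft_examples(sample):
    """Turn one (context, actions, reward) sample into two (prompt, target) pairs."""
    # (1) context, reward -> actions: a reward-conditioned policy example.
    policy_example = (
        f"context: {sample.context}\nreward: {sample.reward:.1f}\nactions:",
        f" {sample.actions}",
    )
    # (2) context, actions -> reward: a reward-prediction example.
    value_example = (
        f"context: {sample.context}\nactions: {sample.actions}\nreward:",
        f" {sample.reward:.1f}",
    )
    return [policy_example, value_example]


def inference_prompt(context, max_reward):
    """At inference, condition on the highest reward and let the model write the actions."""
    return f"context: {context}\nreward: {max_reward:.1f}\nactions:"


examples = to_sft_examples(Sample("2+2=?", "answer 4", 1.0))
```

The inference prompt shares its template with the first example, so asking for `max_reward` amounts to asking the model to complete the prompt it saw during training for the best outcomes.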

In era of pretraining, what mattered was internet text. You'd primarily want a large, diverse, high quality collection of internet documents to learn from.

In era of supervised finetuning, it was conversations. Contract workers are hired to create answers for questions, a bit like what you'd see on Stack Overflow / Quora, or etc., but geared towards LLM use cases.

Neither of the two above are going away (imo), but in this era of reinforcement learning, it is now environments. Unlike the above, they give the LLM an opportunity to actually interact - take actions, see outcomes, etc. This means you can hope to do a lot better than statistical expert imitation. And they can be used both for model training and evaluation. But just like before, the core problem now is needing a large, diverse, high quality set of environments, as exercises for the LLM to practice against.

In some ways, I'm reminded of OpenAI's very first project (gym), which was exactly a framework hoping to build a large collection of environments in the same schema, but this was way before LLMs. So the environments were simple academic control tasks of the time, like cartpole, ATARI, etc. The @PrimeIntellect environments hub (and the `verifiers` repo on GitHub) builds the modernized version specifically targeting LLMs, and it's a great effort/idea. I pitched that someone build something like it earlier this year:

x.com/karpathy/statu…

Environments have the property that once the skeleton of the framework is in place, in principle the community / industry can parallelize across many different domains, which is exciting.

Final thought - personally and long-term, I am bullish on environments and agentic interactions but I am bearish on reinforcement learning specifically. I think that reward functions are super sus, and I think humans don't use RL to learn (maybe they do for some motor tasks etc, but not intellectual problem solving tasks). Humans use different learning paradigms that are significantly more powerful and sample efficient and that haven't been properly invented and scaled yet, though early sketches and ideas exist (as just one example, the idea of "system prompt learning", moving the update to tokens/contexts not weights and optionally distilling to weights as a separate process a bit like sleep does).

Prime Intellect@PrimeIntellect

Introducing the Environments Hub RL environments are the key bottleneck to the next wave of AI progress, but big labs are locking them down We built a community platform for crowdsourcing open environments, so anyone can contribute to open-source AGI
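
To make the notion of an environment in the post above concrete, here is a toy sketch of a text environment with a `reset`/`step` loop. It is not the Gym API or the `verifiers` API. `GuessNumberEnv`, `make_binary_search_policy`, and `rollout` are hypothetical names, and the policy is a hand-written stand-in for an LLM.

```python
class GuessNumberEnv:
    """Toy text environment: the agent must guess a hidden integer."""

    def __init__(self, target, max_turns=7):
        self.target = target
        self.max_turns = max_turns
        self.turns = 0

    def reset(self):
        self.turns = 0
        return "Guess an integer between 1 and 100."

    def step(self, action):
        """Take a text action, return (observation, reward, done)."""
        self.turns += 1
        out_of_turns = self.turns >= self.max_turns
        try:
            guess = int(action.strip())
        except ValueError:
            return "Please reply with an integer.", 0.0, out_of_turns
        if guess == self.target:
            return "Correct.", 1.0, True
        hint = "higher" if guess < self.target else "lower"
        return f"Try {hint}.", 0.0, out_of_turns


def make_binary_search_policy(lo=1, hi=100):
    """Stand-in for an LLM: maps the latest observation to the next text action."""
    state = {"lo": lo, "hi": hi, "last": None}

    def policy(observation):
        if "higher" in observation:
            state["lo"] = state["last"] + 1
        elif "lower" in observation:
            state["hi"] = state["last"] - 1
        state["last"] = (state["lo"] + state["hi"]) // 2
        return str(state["last"])

    return policy


def rollout(env, policy):
    """Run one episode and return the total reward (usable for training or evaluation)."""
    observation, total, done = env.reset(), 0.0, False
    while not done:
        observation, reward, done = env.step(policy(observation))
        total += reward
    return total


score = rollout(GuessNumberEnv(target=37), make_binary_search_policy())
```

The same `rollout` function can score any policy against any environment that follows this schema, which is the property that lets a community add environments in parallel.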

Jiangwei Pan retweeted

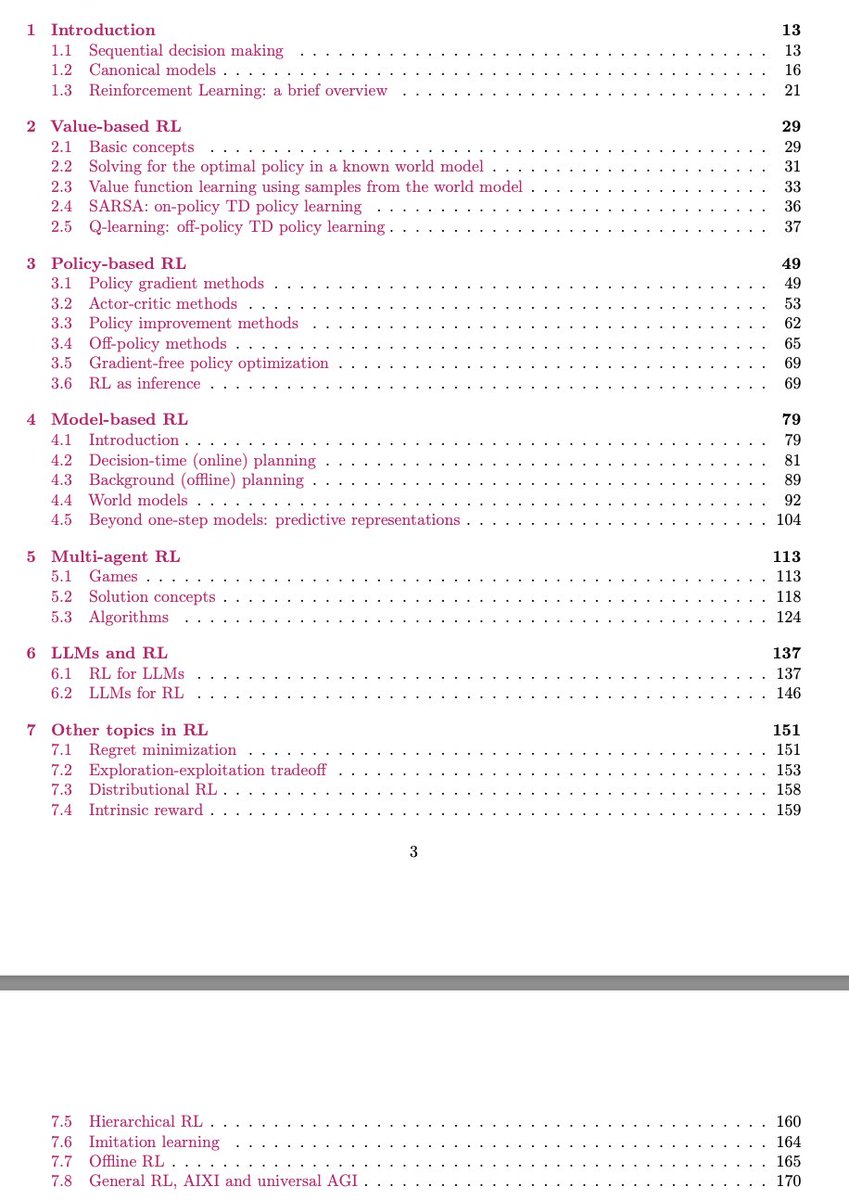

I am pleased to announce a new version of my RL tutorial. Major update to the LLM chapter (eg DPO, GRPO, thinking), minor updates to the MARL and MBRL chapters and various sections (eg offline RL, DPG, etc). Enjoy!

arxiv.org/abs/2412.05265

Jiangwei Pan retweeted

Introducing AlphaEvolve: a Gemini-powered coding agent for algorithm discovery.

It’s able to:

🔘 Design faster matrix multiplication algorithms

🔘 Find new solutions to open math problems

🔘 Make data centers, chip design and AI training more efficient across @Google. 🧵

Jiangwei Pan retweeted

We're missing (at least one) major paradigm for LLM learning. Not sure what to call it, possibly it has a name - system prompt learning?

Pretraining is for knowledge.

Finetuning (SL/RL) is for habitual behavior.

Both of these involve a change in parameters but a lot of human learning feels more like a change in system prompt. You encounter a problem, figure something out, then "remember" something in fairly explicit terms for the next time. E.g. "It seems when I encounter this and that kind of a problem, I should try this and that kind of an approach/solution". It feels more like taking notes for yourself, i.e. something like the "Memory" feature but not to store per-user random facts, but general/global problem solving knowledge and strategies. LLMs are quite literally like the guy in Memento, except we haven't given them their scratchpad yet. Note that this paradigm is also significantly more powerful and data efficient because a knowledge-guided "review" stage is a significantly higher dimensional feedback channel than a reward scalar.

I was prompted to jot down this shower of thoughts after reading through Claude's system prompt, which currently seems to be around 17,000 words, specifying not just basic behavior style/preferences (e.g. refuse various requests related to song lyrics) but also a large amount of general problem solving strategies, e.g.:

"If Claude is asked to count words, letters, and characters, it thinks step by step before answering the person. It explicitly counts the words, letters, or characters by assigning a number to each. It only answers the person once it has performed this explicit counting step."

This is to help Claude solve 'r' in strawberry etc. Imo this is not the kind of problem solving knowledge that should be baked into weights via Reinforcement Learning, or at least not immediately/exclusively. And it certainly shouldn't come from human engineers writing system prompts by hand. It should come from System Prompt learning, which resembles RL in the setup, with the exception of the learning algorithm (edits vs gradient descent). A large section of the LLM system prompt could be written via system prompt learning, it would look a bit like the LLM writing a book for itself on how to solve problems. If this works it would be a new/powerful learning paradigm. With a lot of details left to figure out (how do the edits work? can/should you learn the edit system? how do you gradually move knowledge from the explicit system text to habitual weights, as humans seem to do? etc.).
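
A toy sketch of the loop the post above describes, where the update is an edit to the system prompt text rather than a gradient step. Everything here is a hypothetical illustration: `toy_model` is a stand-in for an LLM that miscounts doubled letters unless its prompt contains the note, and `review` returns a fixed lesson where the post imagines the model writing its own.

```python
from itertools import groupby

# Hypothetical lesson text; in the post's proposal the model would write this itself.
COUNTING_NOTE = "When asked to count letters, number each character before answering."


def toy_model(system_prompt, word, letter):
    """Stand-in for an LLM: careless unless the prompt contains the counting note."""
    if COUNTING_NOTE in system_prompt:
        # Careful mode: visit every character and count the matches one by one.
        return sum(1 for ch in word if ch == letter)
    # Careless mode: treats a run of repeated letters ("rr") as a single letter.
    return sum(1 for ch, _ in groupby(word) if ch == letter)


def review(word, letter, answer):
    """Knowledge-guided review: return a lesson as text, or None if the answer was right."""
    return None if answer == word.count(letter) else COUNTING_NOTE


def system_prompt_learning(tasks, system_prompt=""):
    """Run tasks and apply text edits to the prompt; no weights are changed."""
    for word, letter in tasks:
        answer = toy_model(system_prompt, word, letter)
        lesson = review(word, letter, answer)
        if lesson and lesson not in system_prompt:
            system_prompt = (system_prompt + "\n" + lesson).strip()
    return system_prompt


learned_prompt = system_prompt_learning([("strawberry", "r")])
before = toy_model("", "strawberry", "r")
after = toy_model(learned_prompt, "strawberry", "r")
```

The review step hands back a sentence of guidance instead of a single number, which is the higher-dimensional feedback channel the post contrasts with a reward scalar.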

@sama Very impressive. Although log-scale may be more accurate. +1M on top of 0 users is much harder than on top of O(100M) users.
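
A small arithmetic sketch of the log-scale point in the reply above. The user counts are made-up illustrative numbers, not figures from the thread, and since the logarithm is undefined at 0 users the small base is taken as 1M.

```python
import math


def log10_growth(before, after):
    """Growth measured on a log scale: the number of factors of ten gained."""
    return math.log10(after / before)


# The same +1M users, added to a small base and to a large base (illustrative numbers).
small_base = log10_growth(1_000_000, 2_000_000)      # doubling: about 0.30
large_base = log10_growth(100_000_000, 101_000_000)  # +1%: about 0.004
```

On a linear axis both steps look identical, while on a log axis the first is roughly 70 times larger than the second.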

Got a picture that isn't quite right? Try our native image generation in Gemini Flash 2.0. "Can you remove the stuff on the couch?". "Can you make the curtains light green?" "Can you put a unicorn horn on the person in the green pants?"

Editing in human language, not image editing tools

Oriol Vinyals@OriolVinyalsML

Gemini 2.0 Flash debuts native image gen! Create contextually relevant images, edit conversationally, and generate long text in images. All totally optimized for chat iteration. Try it in AI Studio or Gemini API. Blog: developers.googleblog.com/en/experiment-…