Parav


To manage growing demand for Claude, we're adjusting our 5-hour session limits for free/Pro/Max subs during peak hours. Your weekly limits remain unchanged. On weekdays between 5am–11am PT / 1pm–7pm GMT, you'll move through your 5-hour session limits faster than before.

We’re releasing OmniReset, a framework for training robot policies using large-scale RL and diverse resets for contact-rich, dexterous manipulation. OmniReset pushes the frontier of robustness and dexterity, without any reward engineering or demonstrations. Try the policies yourself in our interactive simulator! weirdlabuw.github.io/omnireset/ (1/N 🧵)

This entire cyberpunk world was built by a single creator. 100 million Gaussian splats, with nearly every surface and structure generated in Marble. We’re entering an era where individuals can build entire worlds.

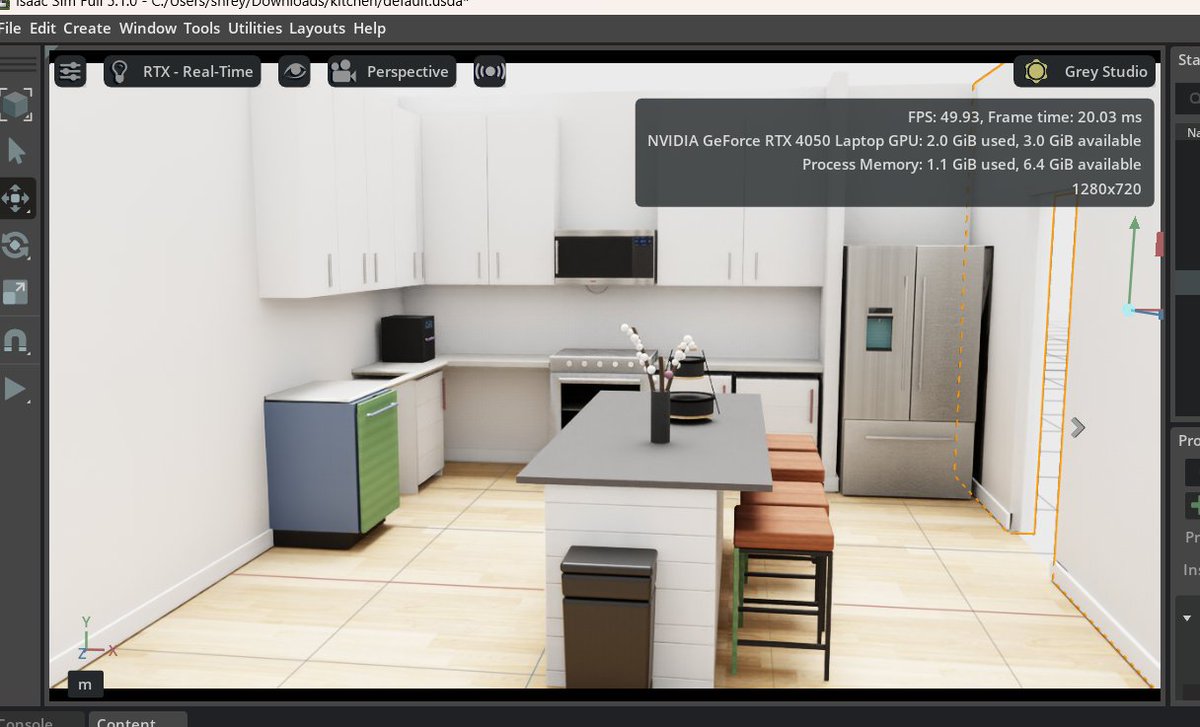

we’re opening this up to testers. it now supports both isaac and mujoco. if you’re interested in automating sim creation, reach out

Our recent findings on World Action Models (WAMs): the core advantage of WAMs is not test-time “imagination” of futures, but the training-time supervision from future video prediction. We propose Fast-WAM, which makes inference simple, fast, and policy-centric.

Why does manipulation lag so far behind locomotion? New post on one piece we don't talk about enough: the gearbox.
The Gap
You've probably seen those dancing humanoid robots from Chinese New Year. Locomotion isn't entirely solved, but clearly it's on a trajectory. But we haven't seen anything close for manipulation. 𝗪𝗵𝘆?
When sim-to-real transfer fails, the instinct is to blame the algorithm. Train bigger networks. Crank up domain randomization. Those approaches have made real progress; we don't deny that. But we started wondering: are we treating the symptom or the disease?
The Hardware Bottleneck
Fingers are too small for powerful motors. So most hands use massive gearboxes (200:1, 288:1) to get enough torque. But those gearboxes break everything manipulation needs:
• Stiction and backlash are complex to simulate. Policies trained on smooth physics hallucinate when they hit that reality.
• Reflected inertia scales as N². At large gear ratios, the finger hits with sledgehammer momentum.
• Friction blocks force information. The hand becomes blind.
And they're the first thing to break.
What we are trying to build
At Origami, we cut the gear ratio from 288:1 to 15:1 using axial flux motors and thermal optimization. The transmission becomes more transparent: backdrivable, low friction, forces propagate to motor current. Early signs are encouraging. Still running quantitative benchmarks.
Why Interactive?
I love how Science Center uses interactive devices to explain complex ideas. I want to borrow this concept and help people understand the hard problems in robotics visually. The post has demos where you can toggle friction, slide gear ratios, and watch the sim-to-real gap widen in real time.
What's inside:
• Interactive demos (friction curves, N² scaling, contact patterns)
• Comparison table: 14 robot hands by sim-to-real gap and force transparency
• The math behind why low-ratio matters
Read it here: origami-robotics.com/blog/dexterity…
We're not claiming we've solved dexterity. The deadlock has many pieces. But we think this one's foundational. Curious what you think.
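
To put a rough number on the N² point in the post above, here is a minimal sketch of the standard reflected-inertia relation, J_out = N² · J_rotor, applied to the two gear ratios the post mentions (288:1 and 15:1). The rotor inertia is a hypothetical normalized value, and the comparison assumes the same rotor on both sides, which a real switch to axial flux motors would not preserve. Treat it as an illustration of the scaling, not as the post's own math.

```python
def reflected_inertia(rotor_inertia: float, gear_ratio: float) -> float:
    """Rotor inertia as felt at the gearbox output: J_out = N^2 * J_rotor."""
    return gear_ratio ** 2 * rotor_inertia


# Hypothetical, normalized rotor inertia (assumption: same rotor in both cases).
ROTOR_INERTIA = 1.0

high_ratio = reflected_inertia(ROTOR_INERTIA, 288)  # 288:1 gearbox
low_ratio = reflected_inertia(ROTOR_INERTIA, 15)    # 15:1 gearbox
reduction = high_ratio / low_ratio                  # equals (288 / 15) ** 2

print(f"288:1 reflected inertia: {high_ratio:.0f} x rotor inertia")
print(f"15:1 reflected inertia:  {low_ratio:.0f} x rotor inertia")
print(f"Reduction factor: about {reduction:.0f}x")
```

Under that equal-rotor assumption, dropping the ratio from 288:1 to 15:1 cuts the reflected inertia by a factor of (288/15)², a few hundred times, which is the intuition behind the "sledgehammer momentum" line.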

I realized something else AI has changed about coding: you don't get stuck anymore. Programming used to be punctuated by episodes of extreme frustration, when a tricky bug ground things to a halt. That doesn't happen anymore.

Researchers trained a humanoid robot to play tennis using only 5 hours of motion capture data. The robot can now sustain multi-shot rallies with human players, hitting balls traveling >15 m/s with a ~90% success rate. AlphaGo for every sport is coming.