Peppe Silletti

287 posts

@peppesilletti

Product Engineer for AI-native startups (open to part-time opportunities as IC or Coach) | Podcast Host @ “Product Engineers - Create Fiercely”

Joined December 2024

99 Following · 25 Followers

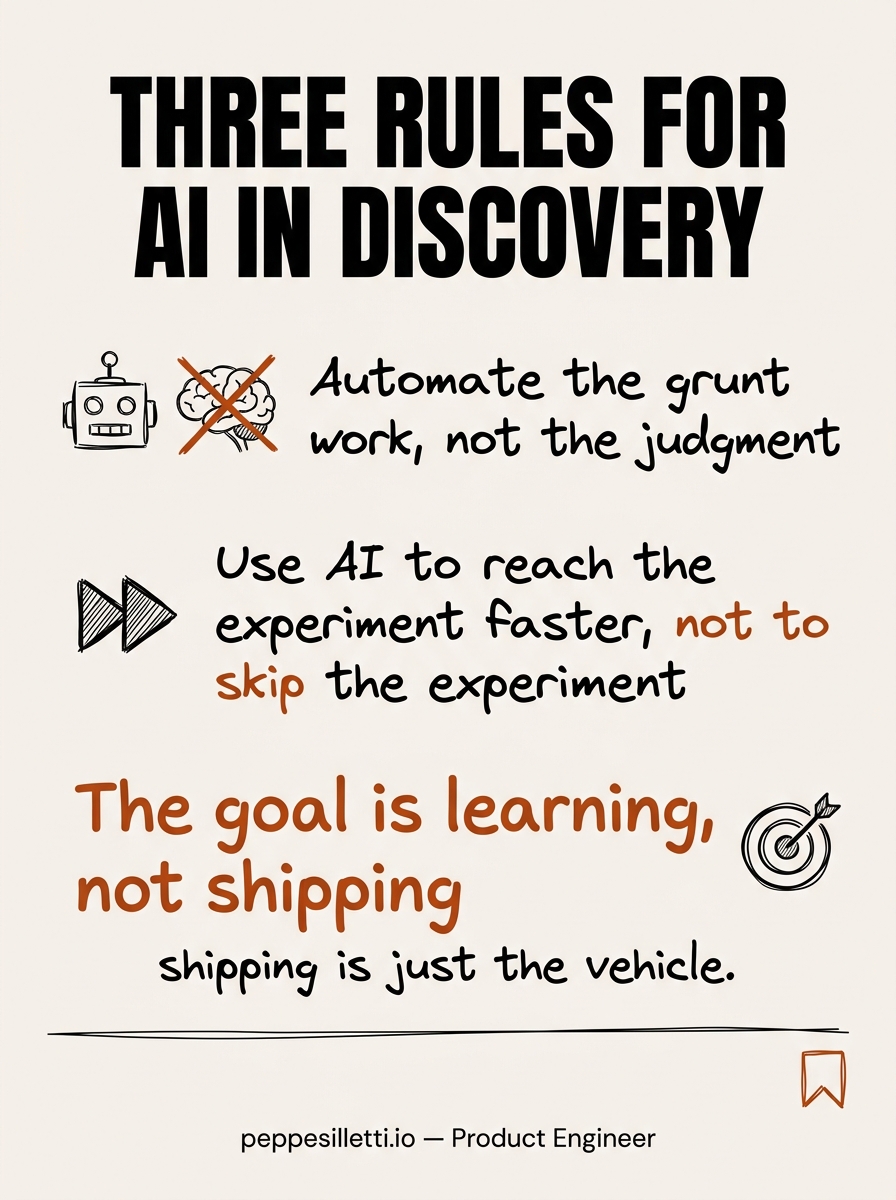

In 2020, the bottleneck was building fast enough. In 2026, it's knowing what to build next.

Most teams upgraded their speed but kept their old process for deciding where to point it. The ones pulling ahead took a different approach: they started treating learning as the goal.

Speed just became the vehicle.

Interviewing people during product discovery is one of my favourite things. Hearing their stories, their struggles, their wins. Trying to read between the lines of what they actually mean.

I feel a bit like Sherlock Holmes and a bit like a therapist, getting someone to open up about stuff they didn't even know bothered them. Then, connecting the dots they can't see yet.

I dunno, there's something deeply satisfying about digging into people's minds and finding an opportunity to build something genuinely useful for someone.

"We already know what users want" (based on one conversation with a friend who's not even in the target market)

"Let's just build it and see" (translation: let's spend 3 months building something we could've invalidated in 3 days)

"The data will tell us" (there is no data, there is no instrumentation, there are only vibes)

"We did a survey once" (47 responses, 43 of which were from the team's LinkedIn connections)

"Our PM talked to someone" (one call, four months ago, notes lost in a Notion page nobody can find)

"We'll do user research in v2" (v2 does not exist and never will)

"The competitor does it this way" (so now we're building someone else's product instead of our own)

"I used to be a user so I know" (you used to be a user of a completely different product five years ago)

"We have product-market fit" (we have 12 users and 3 of them are investors)

Startup discovery bingo. You know the game.

@mattpocockuk I keep thinking that we should treat AI agents like humans. This is such a human thing... to me, context works like our short-term memory. If we overload it with information, we start to lose focus and become less productive.

Doing some experiments today with Opus 4.6's 1M context window.

Trying to push coding sessions deep into what I would consider the 'dumb zone' of SOTA models: >100K tokens.

The drop-off in quality is really noticeable. Dumber decisions, worse code, worse instruction-following.

Don't treat a 1M context window any differently.

It's still 100K of smart, and 900K of dumb.
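To make the "100K of smart" rule concrete, here is a minimal Python sketch of a session budget check. Everything in it is an assumption: the `Session` class is hypothetical (not part of any SDK), tokens are estimated at roughly 4 characters each instead of being counted with a real tokenizer, and the 100K ceiling is the heuristic from the post, not a documented model limit.

```python
from dataclasses import dataclass, field

# Assumption: roughly 4 characters per token for English text.
# Swap in the model's real tokenizer for accurate counts.
CHARS_PER_TOKEN = 4

# Heuristic "smart zone" ceiling from the post; not a documented model limit.
SMART_ZONE_TOKENS = 100_000


def estimate_tokens(text: str) -> int:
    """Rough token estimate: characters divided by CHARS_PER_TOKEN, rounded up."""
    return (len(text) + CHARS_PER_TOKEN - 1) // CHARS_PER_TOKEN


@dataclass
class Session:
    """Hypothetical coding-session tracker, not part of any SDK."""

    advertised_window: int = 1_000_000  # what the model's spec sheet promises
    messages: list[str] = field(default_factory=list)

    def add(self, message: str) -> None:
        self.messages.append(message)

    def used_tokens(self) -> int:
        return sum(estimate_tokens(m) for m in self.messages)

    def effective_budget(self) -> int:
        # A bigger advertised window doesn't raise the ceiling:
        # the budget is whichever limit is hit first.
        return min(self.advertised_window, SMART_ZONE_TOKENS)

    def should_start_fresh(self) -> bool:
        # Past the budget, treat the session as being in the "dumb zone".
        return self.used_tokens() > self.effective_budget()


if __name__ == "__main__":
    session = Session()
    session.add("x" * 400_000)  # ~100K estimated tokens: right at the edge
    print(session.used_tokens(), session.should_start_fresh())
    session.add("more context")  # a little more tips it over
    print(session.used_tokens(), session.should_start_fresh())
```

The effective budget is the smaller of the two numbers, so a 1M window buys nothing extra past the smart zone, while a smaller window still caps the session earlier.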

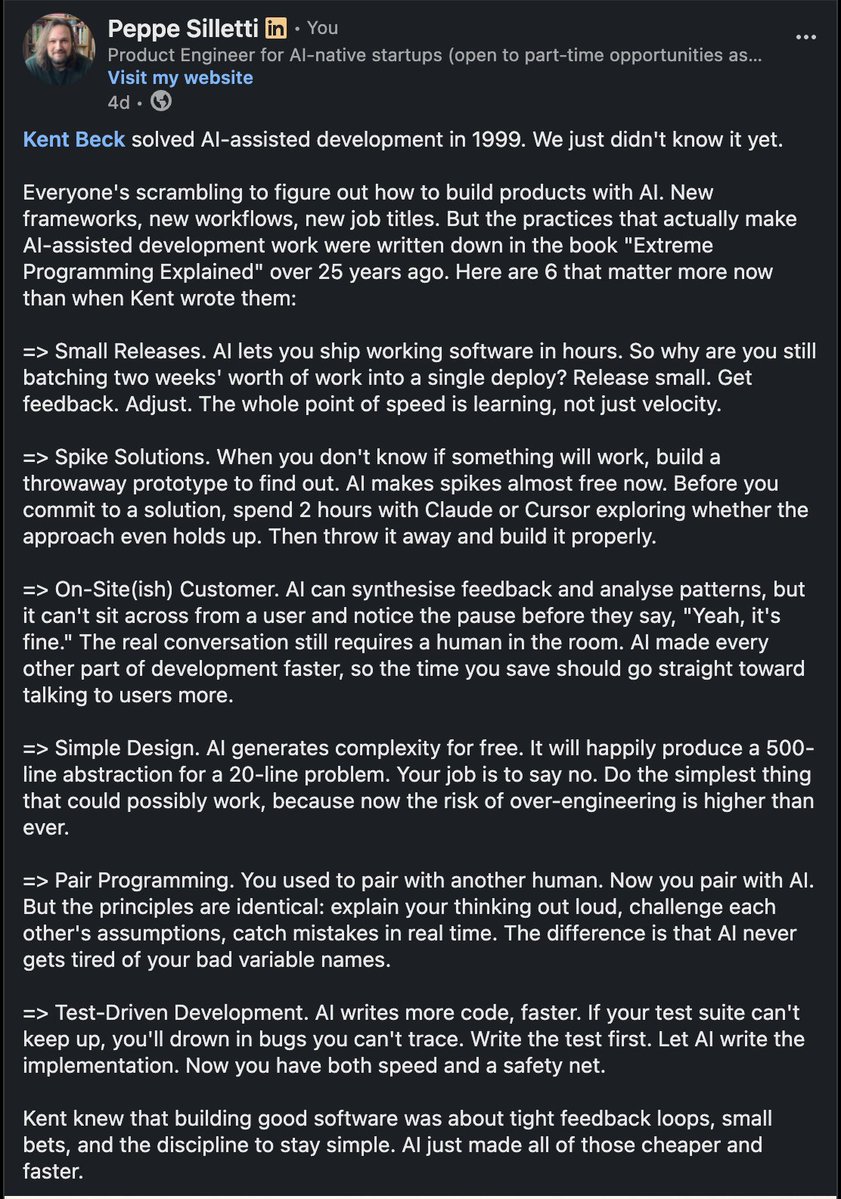

@KentBeck solved AI-assisted development in 1999. We just didn't know it yet.

Everyone's scrambling to figure out how to build products with AI. New frameworks, new workflows, new job titles. But the practices that actually make AI-assisted development work were written down in the book "Extreme Programming Explained" over 25 years ago. Here are 6 that matter more now than when Kent wrote them:

1. Small Releases. AI lets you ship working software in hours. So why are you still batching two weeks' worth of work into a single deploy? Release small. Get feedback. Adjust. The whole point of speed is learning, not just velocity.

2. Spike Solutions. When you don't know if something will work, build a throwaway prototype to find out. AI makes spikes almost free now. Before you commit to a solution, spend 2 hours with Claude or Cursor exploring whether the approach even holds up. Then throw it away and build it properly.

3. On-Site(ish) Customer. AI can synthesise feedback and analyse patterns, but it can't sit across from a user and notice the pause before they say, "Yeah, it's fine." The real conversation still requires a human in the room. AI made every other part of development faster, so the time you save should go straight toward talking to users more.

4. Simple Design. AI generates complexity for free. It will happily produce a 500-line abstraction for a 20-line problem. Your job is to say no. Do the simplest thing that could possibly work, because now the risk of over-engineering is higher than ever.

5. Pair Programming. You used to pair with another human. Now you pair with AI. But the principles are identical: explain your thinking out loud, challenge each other's assumptions, catch mistakes in real time. The difference is that AI never gets tired of your bad variable names.

6. Test-Driven Development. AI writes more code, faster. If your test suite can't keep up, you'll drown in bugs you can't trace. Write the test first. Let AI write the implementation. Now you have both speed and a safety net.

Kent knew that building good software was about tight feedback loops, small bets, and the discipline to stay simple. AI just made all of those cheaper and faster.
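A minimal sketch of point 6 in Python, assuming a small hypothetical `slugify` helper as the feature under development. The test is written first and pins down the behaviour; the implementation is the simplest thing that makes it pass (point 4). Which half you hand to Claude or Cursor is a judgment call; here the assumption is that the human owns the test and the AI drafts the implementation.

```python
import re


# Step 1, written first by the human: the test defines "done".
# `slugify` is a hypothetical example feature, not from any library.
def test_slugify() -> None:
    assert slugify("Hello World") == "hello-world"
    assert slugify("  Extreme   Programming! ") == "extreme-programming"
    assert slugify("") == ""


# Step 2, the part you might let an AI assistant draft: the simplest
# implementation that makes the test above pass, and nothing more.
def slugify(title: str) -> str:
    # Lowercase, collapse every run of non-alphanumeric characters into a
    # single hyphen, then trim any leading or trailing hyphens.
    return re.sub(r"[^a-z0-9]+", "-", title.lower()).strip("-")


if __name__ == "__main__":
    test_slugify()
    print("all tests pass")
```

If the AI's draft grows into a 500-line abstraction, the test still tells you whether the 20-line version was enough.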

@Sc_Meerkat @svpino This has always been the case, especially with open-source projects. Before AI, no one stopped a dev from cloning the repo and reusing or slightly modifying it for their own purposes. It was just slower... Does it make a difference if the copycat is a human or an agent?

@peppesilletti @svpino The only reason it can be “simply rebuilt on the spot” is that the AI was trained on the source code of that open-source project.

LLMs don't create anything new; they simply rehash other people’s creations.

A few weeks ago, a friend of mine stopped contributing to a few open-source projects he'd been working on for decades.

He just stopped.

"I don't want to train my replacement for free," were his exact words.

I keep thinking about this.

I really don't know what will happen to open-source projects in the next few years, when it becomes painfully obvious that developers no longer care because they can just "build" whatever they need on the spot.

By the way, I don't think developers should be reinventing the wheel every time, and there's absolutely zero chance that leads to better software, but the reality is that many people don't care anymore.
