
Our research: Adversarial Flow Models (AF)

arxiv.org/abs/2511.22475

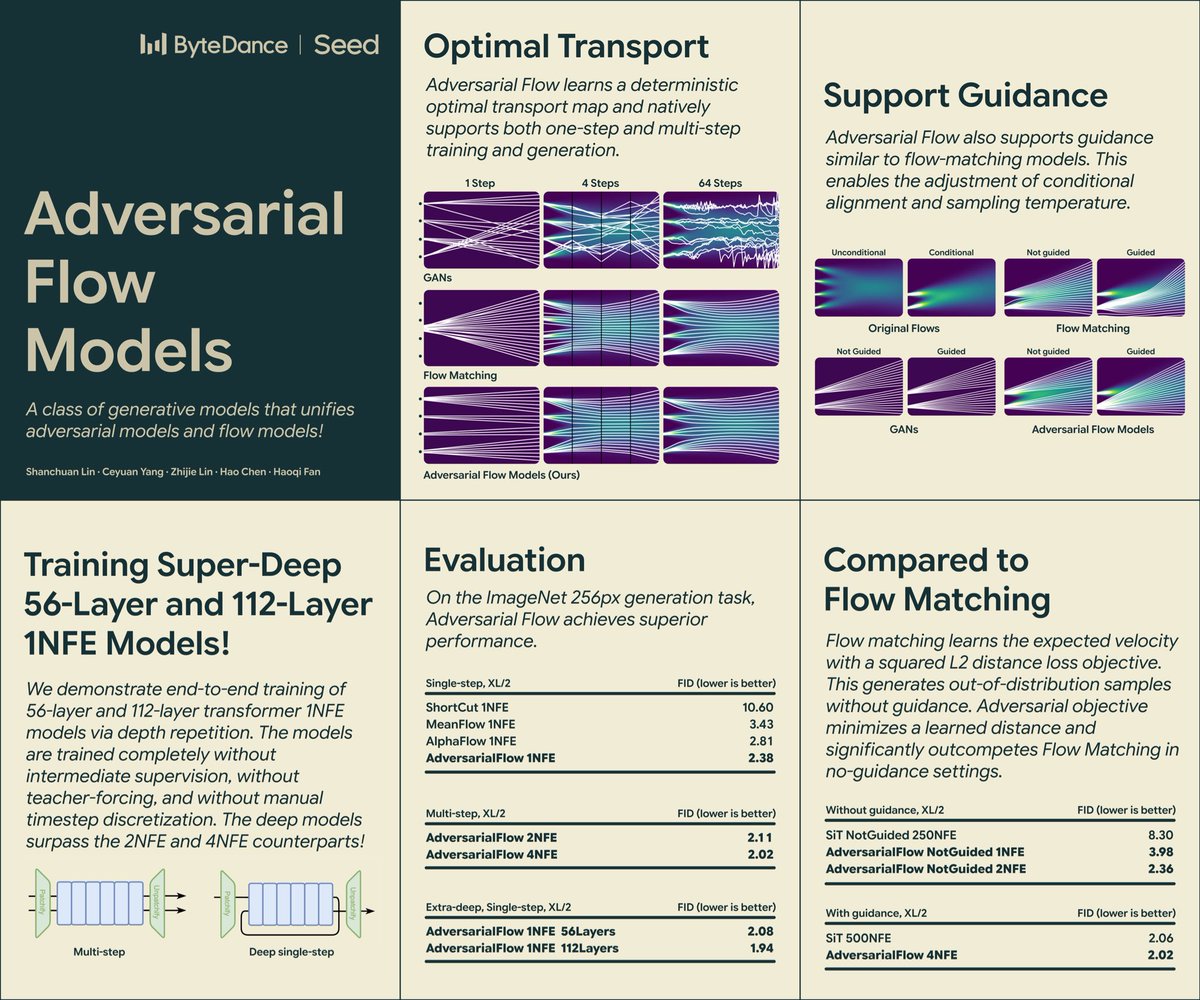

AF unifies Adversarial and Flow Models.

Unlike GANs, AF learns an optimal transport, so training is stable. Unlike consistency models (CMs), AF trains only on the timesteps it needs, which saves capacity. We can train a super-deep 112-layer 1NFE model! SOTA FIDs!
