Peter Okiokpa

3.8K posts

@peterokiokpa

Making robotics education and innovation accessible. Building @thesoftmatichq @theaibothub @roemai_io. Contrib. @cybernetic_lab.

Tesla says 56% of Optimus's cost is actuators. America manufactures almost none of them. Generational opportunity for builders in this space.

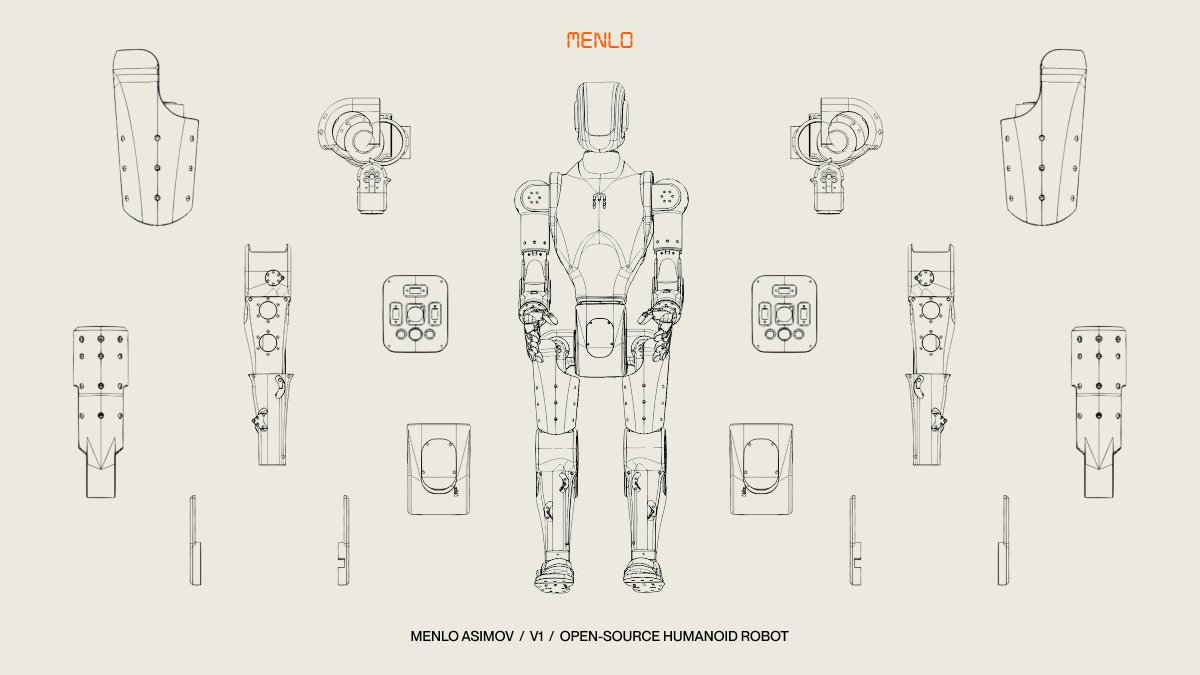

We're open-sourcing Asimov v1, a humanoid robot. With Asimov v1, you can build, train, and customize your own humanoid robot. It's the first step toward building a humanoid labor force for the rest of us.
Asimov v1 is 1.2 m tall and weighs 35 kg, with 25 actuated degrees of freedom. Structural parts are machined in 7075 aluminium and 3D-printed in MJF PA12 nylon.
We're releasing the mechanical design and simulation files, ready for locomotion policy training out of the box. The BOM is open too. Source everything yourself, or order the DIY Kit: all components, ready to assemble. $499 deposit, $15,000 target price. Ships end of summer 2026.
GitHub: github.com/asimovinc/asim…
Manual: manual.asimov.inc
DIY Kit: asimov.inc/diy-kit
Most humanoid robots are controlled by the companies that build them. Asimov v1 is built for the rest of us. Build it, test it, and share your feedback with the community.

The bottleneck for most people who want to build a robot isn't motivation; it's not knowing where to start: what parts, what order, what software stack. Tnkr solves exactly that: open source robot projects with step-by-step assembly, CAD, firmware, everything. tnkr.ai/explore

Princeton's Introduction to Robotics! 🎓
@Princeton University released their full Introduction to Robotics course publicly, with lecture videos, notes, slides, and assignments. The course covers the fundamental theoretical and algorithmic principles behind robotic systems, along with hands-on experience.
Topics covered:
→ Feedback Control (dynamics, PD control, Linear Quadratic Regulator)
→ Motion Planning (discrete planning with BFS/DFS, optimal planning with Dijkstra/A*)
→ State Estimation, Localization, and Mapping (Bayes filtering, Kalman filtering, particle filtering, SLAM)
→ Vision and Learning (optical flow, deep learning, convolutional networks, reinforcement learning)
→ Broader topics, including robotics and law, ethics, and economics
Assignments include theory, programming, and hardware implementation components. For the final project, students program drones for vision-based navigation, with attached cameras transmitting real-time images.
All lecture videos, notes, slides, and assignments are freely available. Prerequisites include multivariable calculus, linear algebra, basic probability, basic differential equations, and some programming experience in Python.
‼️ GO FOR IT: irom-lab.princeton.edu/intro-to-robot…
♻️ Join the weekly robotics newsletter, and never miss any news → ziegler.substack.com