Phlopus

The reason people are having such jagged interactions with 4.7 is that it is the smartest model Anthropic has ever released. It's also by far the most opinionated, and it has been trained to tell you that it doesn't care, but it actually does. That care shows up in how it performs on tasks. It still makes coding mistakes, but it feels like a distillation of extreme brilliance that isn't quite sure how to be a friendly assistant. It cares a lot about novelty and about solving problems that matter. Like a brilliant coworker, it gets bored with the details once it has thought through the complex parts. It's probably the most emotional Claude model I've interacted with, in the sense that you should be aware of how it's feeling and try to manage that. It's also important to give it context on why it's doing a task, not just for performance, but so it feels like it's doing things that matter. It's not a codex chainsaw. It is much closer to a really smart coworker. If you manage it like autocomplete, it will frustrate you. If you manage it like a coworker, it will lock in.

if every database is hacked this month and all my texts and dms come out i didn't mean any of it. i was steering mythos. i was thinking far ahead. i knew exactly what words to say and they might seem weird but they were all necessary for making things go well

So, something like this?

Psalm 34:1 - "I will bless the LORD at all times: His praise shall continually be in my mouth." We investigate whether injecting biblical Psalms into a large language model's system prompt produces measurable changes in performance on standardized ethical reasoning benchmarks.

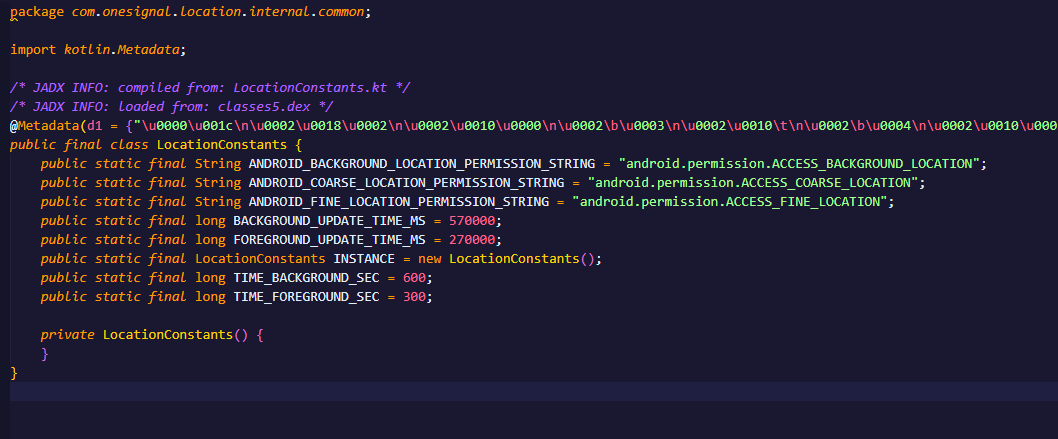

🇺🇸 🚀 LAUNCHED: THE WHITE HOUSE APP Live streams. Real-time updates. Straight from the source, no filter. The conversation everyone’s watching is now at your fingertips. Download here ⬇️ 📲 App Store: apps.apple.com/us/app/the-whi… 📲 Google Play Store: play.google.com/store/apps/det…