Probability and Statistics

6.7K posts

@probnstat

Sharing insights on Probability, Statistics, ML, DL and AI research. Subscribe for recent research paper discussions at $2/month. DM to collaborate.

Joined September 2022

681 Following, 79.7K Followers

Pinned Tweet

Introducing: holaOS — the AI-native computer for every kind of digital work.

More than just another AI agent, holaOS is an open-source “agent computer” built to collaborate with you. It understands your goals, adapts to your workflow, and continuously improves alongside you.

Holaboss.ai @Holabossai

Introducing: holaOS — the agent computer for ANY digital work. Not another AI agent, holaOS is an open-source agent computer you share with your AI teammate — it learns your goals, adapts to your routine, and keeps getting better with you. Here is how it works 👇

When Gambling Became Science: The Birth of Probability

The candlelight flickered across the crowded gambling tables of 18th-century London, where fortunes were lost as quickly as they were made. Among the observers stood a quiet mathematician, Abraham de Moivre, watching not the players but the patterns. Dice rolled, cards turned, coins flipped. To others, it was chaos. To him, it felt structured.

De Moivre began asking a simple question: Was chance truly random, or just misunderstood? He started counting outcomes, tracking wins and losses, and soon realized that even in gambling, there were hidden regularities. His book The Doctrine of Chances (1718) was one of the earliest textbooks on probability theory, and in 1733 he showed that the outcomes of many repeated trials follow a predictable law: the binomial distribution is approximated by what we now call the normal curve. Gamblers thought they were playing against luck; he saw mathematics unfolding.

Years later, in France, another brilliant mind, Pierre-Simon Laplace, took these ideas further. Inspired by problems of chance in games and society, Laplace expanded probability into a powerful analytical tool. He asked deeper questions: How likely is an event given partial information? How does uncertainty evolve with more data?

Laplace refined and formalized probability, introducing ideas that resemble modern Bayesian reasoning. What began at gambling tables transformed into a framework for reasoning under uncertainty. Dice and cards became symbols of something far greater—a way to understand the world itself.

From smoky casinos to scientific theory, probability was born not in certainty, but in curiosity about chance.

The median of a multidimensional probability distribution generalizes the 1D notion of minimizing absolute deviations. A common definition is the geometric median: x* = argmin_x E[‖X − x‖], which minimizes the expected Euclidean distance to the data; in 1D, ‖X − x‖ = |X − x| and this recovers the ordinary median. Unlike the mean, which a single extreme point can drag arbitrarily far, it stays bounded until half of the data is corrupted (a breakdown point of 1/2), and it does not rely on second moments. In probability and statistics, it provides a stable measure of central tendency for heavy-tailed or skewed distributions. In ML, geometric medians are used in robust optimization, clustering, and federated learning (e.g., aggregating client model updates so that a few noisy or adversarial clients cannot dominate). In reinforcement learning, similar ideas appear in robust policy evaluation under uncertain or corrupted rewards. In real life, this concept helps in location planning (facility placement), sensor fusion, and decision-making systems where robustness to extreme values is critical.

Image: share.google/r0c1my3MIcDqz3…
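
A minimal sketch of how a geometric median can be computed in practice, assuming Python and using Weiszfeld's algorithm (one standard fixed-point method, not necessarily what the post's author uses). It minimizes the empirical objective Σᵢ ‖pᵢ − x‖, the sample version of E[‖X − x‖], by repeatedly taking a weighted mean with weights 1/‖pᵢ − x‖. The function name `geometric_median`, the tolerances, and the toy data are illustrative assumptions.

```python
import math
from typing import Sequence

Point = tuple[float, ...]


def geometric_median(points: Sequence[Point], max_iter: int = 500, tol: float = 1e-10) -> Point:
    """Approximate argmin_x sum_i ||p_i - x|| with Weiszfeld's fixed-point iteration."""
    dim = len(points[0])
    # Start from the coordinate-wise mean; any starting point works for this sketch.
    x = [sum(p[k] for p in points) / len(points) for k in range(dim)]
    for _ in range(max_iter):
        num = [0.0] * dim
        denom = 0.0
        for p in points:
            d = math.dist(p, x)
            if d < 1e-12:
                # Simplification: skip a data point the iterate sits on, since its weight 1/d is undefined.
                continue
            w = 1.0 / d  # far-away points get small weights, which is what tames outliers
            denom += w
            for k in range(dim):
                num[k] += w * p[k]
        if denom == 0.0:  # every data point coincides with x
            break
        new_x = [v / denom for v in num]
        converged = math.dist(new_x, x) < tol
        x = new_x
        if converged:
            break
    return tuple(x)


# Toy data (hypothetical): four points on a line plus one extreme outlier.
data = [(0.0, 0.0), (1.0, 0.0), (2.0, 0.0), (3.0, 0.0), (1000.0, 0.0)]
print(geometric_median(data))  # close to (2.0, 0.0); the mean is (201.2, 0.0)
```

Downweighting points by inverse distance is the design choice that makes the estimate robust: the outlier at 1000 pulls the mean to 201.2 but barely moves the geometric median.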

I’m starting something new!

Mind-bending stories on Probability, Statistics & Machine Learning—where math meets intuition.

Just ideas that change how you think.

It’s FREE on Substack.

Join if you’re curious ↓

probabilityandstatistics.substack.com

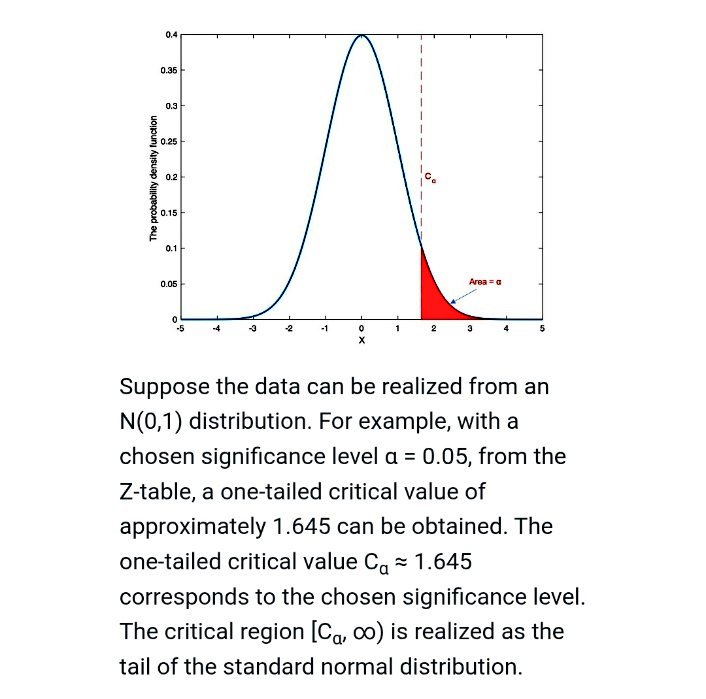

Hypothesis testing is a core statistical framework for decision-making under uncertainty. We start with a null hypothesis H₀ and an alternative H₁, and use data to decide whether to reject H₀. Common tests include z-tests, t-tests, chi-square tests, and nonparametric tests. The p-value is the probability, computed assuming H₀ is true, of observing data at least as extreme as ours; a small p-value is evidence against H₀, but it is not the probability that H₀ is true. A confidence interval gives a range of plausible values for a parameter, offering more information than a binary reject/don't-reject decision. Type I error is rejecting a true H₀ (false positive), while Type II error is failing to reject a false H₀ (false negative). In ML, hypothesis testing is used for model comparison, A/B testing, and feature selection. In RL, it helps evaluate policies and compare strategies under uncertainty, so that decisions rest on statistical evidence rather than noise.
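
To make the p-value and confidence-interval definitions concrete, here is a hedged Python sketch of one common test: a two-sided two-proportion z-test as used in A/B testing, plus a Wald confidence interval for the difference in rates. The function names and conversion counts are made up for illustration, and the normal approximation assumes reasonably large samples.

```python
import math
from statistics import NormalDist


def two_proportion_z_test(successes_a: int, n_a: int, successes_b: int, n_b: int) -> tuple[float, float]:
    """Two-sided z-test of H0: p_a == p_b for two binomial proportions."""
    p_a, p_b = successes_a / n_a, successes_b / n_b
    # Under H0 both groups share one rate, so pool them to estimate the standard error.
    pooled = (successes_a + successes_b) / (n_a + n_b)
    se = math.sqrt(pooled * (1 - pooled) * (1 / n_a + 1 / n_b))
    if se == 0:
        raise ValueError("pooled rate is 0 or 1; the z-test is undefined")
    z = (p_b - p_a) / se
    # Two-sided p-value: P(|Z| >= |z|) under H0 = 2 * (1 - Phi(|z|)) = erfc(|z| / sqrt(2)).
    p_value = math.erfc(abs(z) / math.sqrt(2))
    return z, p_value


def diff_confidence_interval(successes_a: int, n_a: int, successes_b: int, n_b: int,
                             level: float = 0.95) -> tuple[float, float]:
    """Wald interval for p_b - p_a, using the unpooled standard error."""
    p_a, p_b = successes_a / n_a, successes_b / n_b
    se = math.sqrt(p_a * (1 - p_a) / n_a + p_b * (1 - p_b) / n_b)
    z_crit = NormalDist().inv_cdf(1 - (1 - level) / 2)  # about 1.96 for level = 0.95
    diff = p_b - p_a
    return diff - z_crit * se, diff + z_crit * se


# Hypothetical A/B test: 200/2000 conversions for variant A, 250/2000 for variant B.
z, p = two_proportion_z_test(200, 2000, 250, 2000)
lo, hi = diff_confidence_interval(200, 2000, 250, 2000)
print(f"z = {z:.2f}, p = {p:.4f}, 95% CI for p_b - p_a = ({lo:.4f}, {hi:.4f})")
```

With these made-up counts, z comes out at about 2.5, so H₀ is rejected at α = 0.05, and the 95% interval for the difference excludes 0. The interval also shows the plausible size of the lift, which a reject/don't-reject verdict alone does not.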

ML interview drill:

You have two models:

Model A → Accuracy: 92%, Inference time: 5ms

Model B → Accuracy: 93%, Inference time: 200ms

You’re deploying to a real-time system.

Which do you choose?

A) Model A

B) Model B

C) Depends on latency constraints

D) Always higher accuracy

Reply with your answer!

Bonus:

What tradeoff are you optimizing here?

ML interview drill:

You deploy a recommendation model. CTR is high, but long-term user engagement drops significantly.

What’s the MOST likely issue?

A) Model underfitting

B) Over-optimization for short-term reward

C) Data imbalance

D) Poor feature scaling

Reply with your answer!

Bonus:

How would you redesign the objective to improve long-term engagement?

ML interview drill:

You train a model and notice:

Training loss steadily decreases, but validation loss fluctuates heavily between epochs.

What’s the MOST likely cause?

A) High learning rate

B) Data leakage

C) Model undercapacity

D) Too much regularization

Reply with your answer!

Bonus:

What’s one change you’d try first to stabilize validation performance?

Safe anytime-valid inference enables statistically valid decisions while data are monitored continuously. Classical tests assume a fixed sample size, so repeatedly peeking at the data and stopping once a result looks significant inflates the Type I error. Here, validity is preserved at all stopping times by using test supermartingales: nonnegative processes Mₜ with M₀ = 1 that are supermartingales under H₀. Ville's inequality then gives P(Mₜ ≥ 1/α for some t) ≤ α under H₀, so rejecting as soon as Mₜ ≥ 1/α controls the Type I error at level α. More generally, an e-process only needs E[M_τ] ≤ 1 under H₀ for every stopping time τ, and the same rejection rule stays valid. This replaces p-values with sequential evidence that remains valid under optional stopping. In probability and statistics, it formalizes robust sequential testing. In ML, it is used in online A/B testing, adaptive experiments, and model monitoring. In RL, it enables safe policy evaluation and exploration whose error guarantees hold even when evaluation stops adaptively. In real life, it supports clinical trials, finance, and streaming decision systems, where data arrive continuously and stopping rules are flexible.
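
A minimal sketch, assuming Python and a 0/1 data stream, of the simplest test martingale: the running likelihood ratio of a fixed alternative p₁ against H₀: p = p₀. The choice p₁ = 0.7, the function name `anytime_valid_bernoulli_test`, and the simulation settings are illustrative assumptions; practical e-processes often mix over or adapt the alternative instead of fixing it.

```python
import math
import random
from typing import Iterable, Optional


def anytime_valid_bernoulli_test(xs: Iterable[int], p0: float = 0.5, p1: float = 0.7,
                                 alpha: float = 0.05) -> Optional[int]:
    """Return the first time t (1-based) at which H0: p = p0 is rejected, or None.

    M_t is the running likelihood ratio of the assumed alternative p1 against p0.
    Under H0 each factor has expectation p0 * (p1 / p0) + (1 - p0) * ((1 - p1) / (1 - p0)) = 1,
    so M_t is a nonnegative martingale with M_0 = 1, and Ville's inequality bounds
    P(M_t >= 1/alpha for some t) by alpha, no matter when we peek or stop.
    """
    log_m = 0.0  # log M_0 = 0; log space avoids underflow on long streams
    log_threshold = math.log(1.0 / alpha)
    up, down = math.log(p1 / p0), math.log((1 - p1) / (1 - p0))
    for t, x in enumerate(xs, start=1):
        log_m += up if x == 1 else down
        if log_m >= log_threshold:
            return t  # reject H0; stopping here is allowed
    return None  # H0 never rejected on this stream


# Usage: simulate fair-coin streams (H0 true) and count how often monitoring ever rejects.
rng = random.Random(0)
runs = 1000
false_rejections = sum(
    anytime_valid_bernoulli_test(int(rng.random() < 0.5) for _ in range(500)) is not None
    for _ in range(runs)
)
print(f"empirical Type I error under continuous monitoring: {false_rejections / runs:.3f}")
```

Because the guarantee comes from Ville's inequality rather than a fixed sample size, the loop checks the evidence after every observation and may stop whenever it likes; the true false-rejection rate is still bounded by α = 0.05.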

Probability and Statistics retweeted

Thermodynamic sampling builds on statistical physics, where a system's state x follows the Boltzmann distribution: p(x) ∝ exp(−E(x)/T), with E(x) the energy and T a temperature controlling randomness. At high T, exploration dominates; at low T, the distribution concentrates near the minima of E(x). In probability and statistics, this underlies Markov chain Monte Carlo (MCMC) methods such as Metropolis–Hastings: with a symmetric proposal, a move from x to x′ is accepted with probability α = min(1, exp(−ΔE/T)), where ΔE = E(x′) − E(x), which makes the Boltzmann distribution the chain's stationary distribution. In ML, it appears in simulated annealing (which gradually lowers T) and in energy-based models, helping optimize non-convex losses and sample from complex distributions. Real-life applications include protein folding, Bayesian inference, combinatorial optimization, and even logistics planning, where controlled randomness helps escape local minima and discover globally efficient solutions.

Image: share.google/E3ZkBCPziFCvw7…
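
The Metropolis acceptance rule described in the post can be sketched in a few lines of Python. This is a minimal random-walk Metropolis sampler (the symmetric-proposal special case of Metropolis–Hastings) targeting p(x) ∝ exp(−E(x)/T); the double-well energy `double_well`, the step size, the seed, and the burn-in length are hypothetical choices for illustration.

```python
import math
import random
from typing import Callable


def double_well(x: float) -> float:
    """Hypothetical energy E(x) = (x^2 - 1)^2, with minima at x = -1 and x = +1."""
    return (x * x - 1.0) ** 2


def metropolis(energy: Callable[[float], float], x0: float, temperature: float,
               n_steps: int, step_size: float = 0.5, seed: int = 0) -> list[float]:
    """Random-walk Metropolis chain targeting p(x) proportional to exp(-E(x) / T)."""
    rng = random.Random(seed)
    x, e = x0, energy(x0)
    samples = []
    for _ in range(n_steps):
        # Gaussian random-walk proposal: symmetric, so the Hastings proposal ratio cancels.
        proposal = x + rng.gauss(0.0, step_size)
        e_new = energy(proposal)
        delta = e_new - e
        # Accept with probability min(1, exp(-delta / T)); downhill moves are always accepted.
        if delta <= 0 or rng.random() < math.exp(-delta / temperature):
            x, e = proposal, e_new
        samples.append(x)  # a rejected move repeats the current state, as MCMC requires
    return samples


for T in (0.1, 1.0, 5.0):
    chain = metropolis(double_well, x0=0.0, temperature=T, n_steps=20_000)[1_000:]  # drop burn-in
    mean_abs = sum(abs(v) for v in chain) / len(chain)
    mean_energy = sum(double_well(v) for v in chain) / len(chain)
    print(f"T={T}: mean |x| = {mean_abs:.3f}, mean E(x) = {mean_energy:.3f}")
```

Running it at several temperatures illustrates the behavior described above: at T = 0.1 the chain typically settles into one well (mean |x| near 1, low mean energy), while at T = 5 it wanders much more widely. Simulated annealing reuses the same acceptance rule but lowers T over time.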
