Yezhou Yang (杨叶舟)


@prof_yz

Prof_YZ @APGASU, researching AI/CV/GenAI @SCAI_ASU; Amazon Scholar @PrimeVideo; PhD @umdcs; BA @ZJU_China. Share the remarkable, analyze the doubtful; openly repair the plank roads, secretly cross at Chencang. 🤠

The basic idea of world models is very old. Optimal control folks were doing model-based planning in the 1960s (using "adjoint state" methods, which deep learning people would now call "backprop through time"). But the real question is what you do with this idea and how you reduce it to practice.

Walk for PEACE ☮️

Suggestion for #ICLR2026 @iclr_conf: Allow authors to withdraw their papers at the conclusion of the review process without the submission being publicly disclosed. No matter what fixes are implemented now, the review process has been compromised and is not what the authors agreed to when they first submitted their papers.

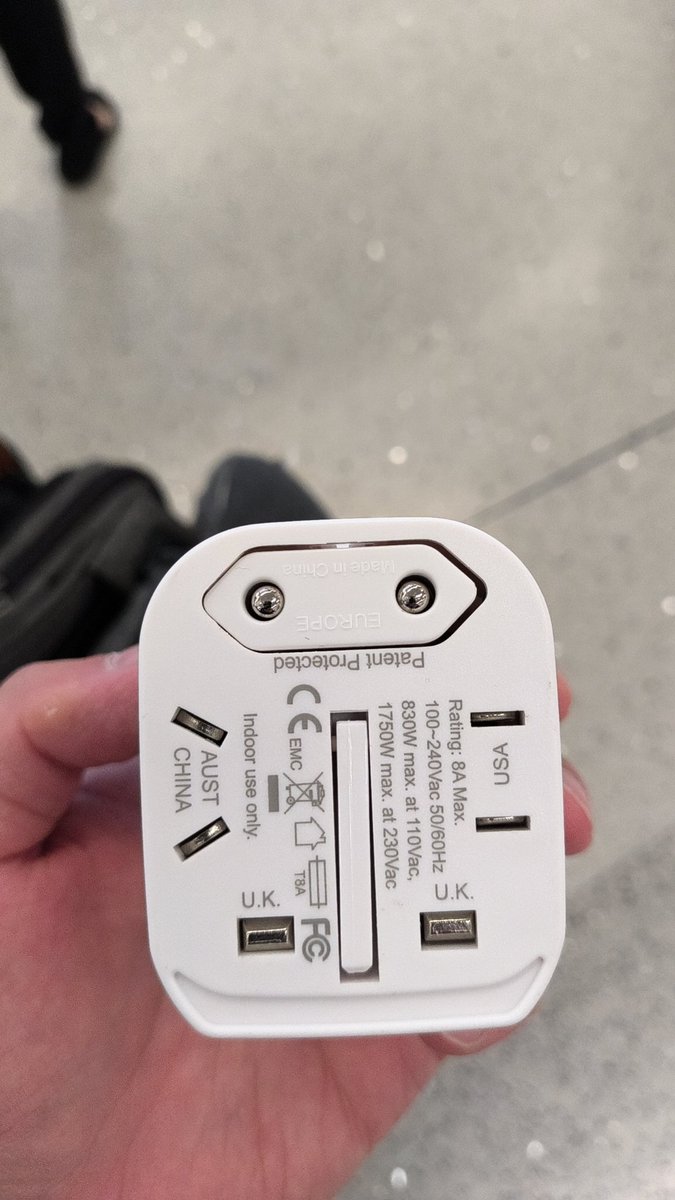

Next stop: #ICCV2027 Hong Kong