Paul Esau

@ptesau

Spring 2023 @ScovillePF at @WisconsinProj. Military export policy writer/researcher. 2022 @Laurier and @StudyofCanada alum.

WSJ reports on @pangramlabs falsely accusing people of using AI. wsj.com/opinion/the-ai… The AI detection industry needs to rise above accusations as a way to drive use (which people on Twitter love, I get it, ragebait sells). If we really want to promote human-written content, we should help people establish that their writing is human. Give people the ability to replay their process. Trust comes from transparency. So what did we do about this at @GPTZeroAI?

Today, we’re launching AI Reviewer Expert Feedback. We partnered with Emmy-winning writers to train AIs to give expert writing feedback through GPTZero.

Zealots at the Gate has a new home at @Georgetown and a new season coming soon. Subscribe wherever you stream podcasts!

The first attack on a nuclear facility occurred 36 years BEFORE Iran's attack on Osirak. In 1944, by pure luck, Japan attacked the Hanford plant in Washington that was producing the plutonium that would be used in the nuclear weapon dropped on Nagasaki. (1/n)

CENTCOM is unhappy with this story, but unable to argue with the facts it presents. This morning I offered an on-record interview with Adm. Cooper in case he wanted to clarify his remarks, but that was rejected out of hand by a staffer. nytimes.com/2026/03/17/us/…

Latest from me on anti-corruption issues (yes, that is still a problem too): "A Year Later – What Did the Pause on FCPA Enforcement Do?" at justsecurity.org/133481/year-la…

Today, we're launching AI Vision. The first AI slop detector that exposes content as you scroll.

Just tested ChatGPT 5.2 for hallucinations. EVERYONE'S saying it's no longer a problem in 2026. Well guess what... It hallucinated over 10/40 citations on this prompt.

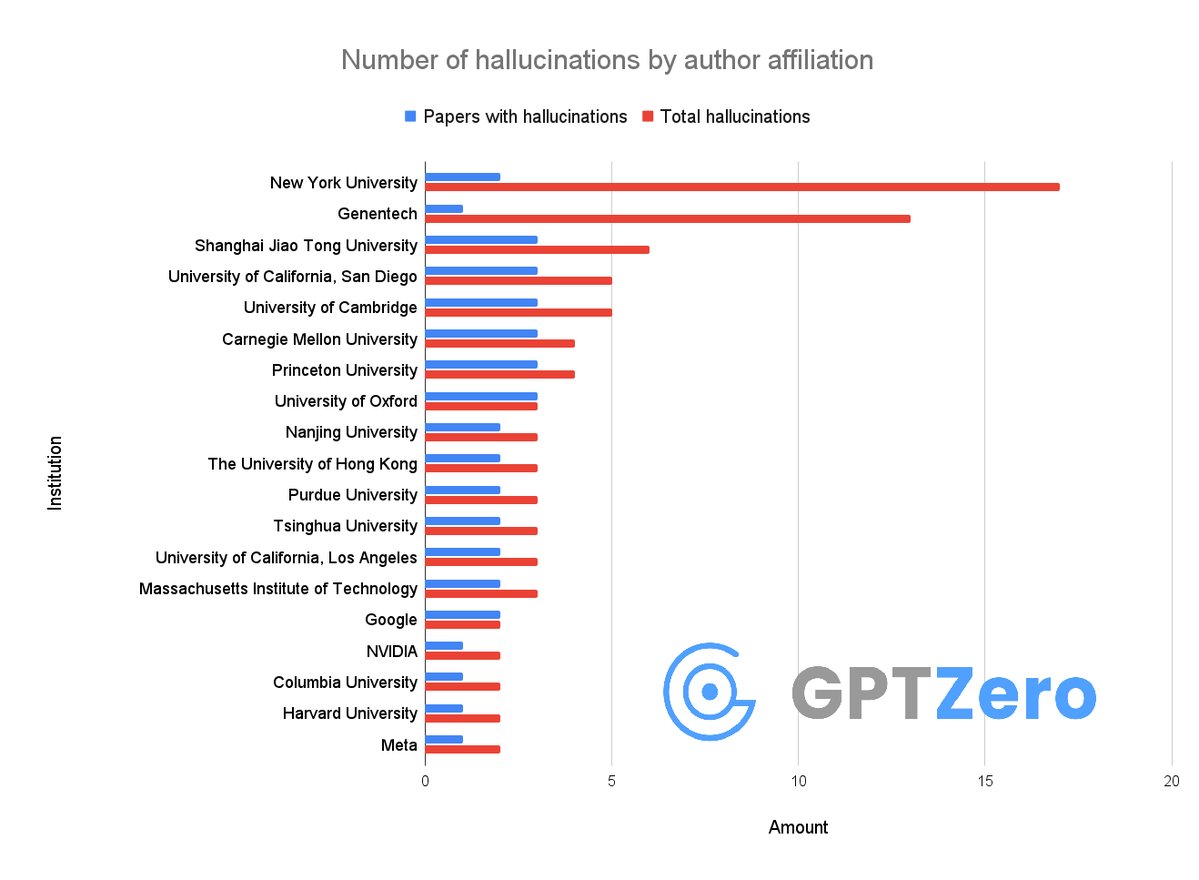

Okay so, we just found that over 50 papers published at @Neurips 2025 have AI hallucinations. I don't think people realize how bad the slop is right now. It's not just that researchers from @GoogleDeepMind, @Meta, @MIT, @Cambridge_Uni are using AI: they allowed LLMs to generate hallucinations in their papers and didn't notice at all. It's insane that these made it through peer review👇

This paper has been desk rejected. LLM-generated papers that hallucinate references and do not report LLM usage will be desk rejected per ICLR policy (blog.iclr.cc/2025/08/26/pol…) Reviewers of other versions of this submission have been notified.