Pinned Tweet

pystar

24.7K posts

@pystar

| Engineering | Previously Finance | Previously Physics

Ad Abyssum, Ut Ad Astra Eamus

Joined January 2009

850 Following, 1.3K Followers

pystar retweeted

Agentic AI's compute demands are growing faster than anyone projected

qz.com/agentic-ai-com…

pystar retweeted

@TheStalwart The Cobra Effect of the British Raj.

The Rat Tail scandal of 1902 French colonial Vietnam.

Humans will act a certain way under certain conditions.

The FT says that Amazon employees are doing random unnecessary task automations to consume tokens and to show their bosses that they're using AI more ft.com/content/8ee0d3…

@AryanA9019 @stufflistings A customized Linux distro which can also run android apps would be much better

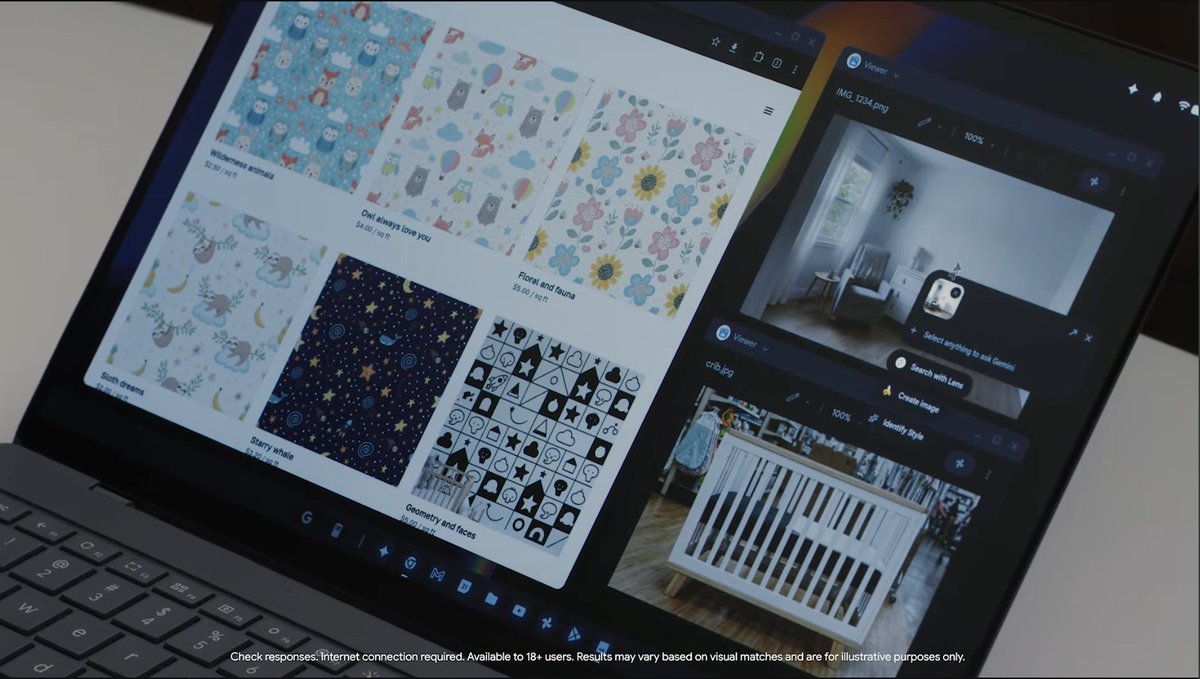

@stufflistings It looks god awful. No real desktop apps at all — just ugly, unoptimized Android apps stretched on a big screen with zero proper UI scaling. They also barely showed any cross-device handoff/continuity features. This is terrible.

Woah.

Google has announced Googlebook. The cursor is going to be contextual, built with Gemini.

#TheAndroidShow #Googlebook

@heynavtoor Imagine this 💩 making its way into the training data of major labs.

We are cooked.

THIS GUY BUILT AN ENTIRE WIKIPEDIA THAT IS 100% AI HALLUCINATIONS AND IT'S OPEN SOURCE ON GITHUB

it's called Halupedia.

nothing on the site existed before you clicked. every article was generated the second you arrived.

the site has one rule: the universe only exists when you visit it.

it looks exactly like wikipedia. same fonts. same layout. same scholarly citations. same "stumble" button for random articles.

the only difference is none of it is real.

here are some actual articles currently in the encyclopedia:

> the great pigeon census of 1887

> the ministry of slightly wrong maps

> chaldic arithmetic — a branch of mathematics where subtraction is forbidden

> armund the river mapper — a cartographer who mapped 14,000 leagues of river without leaving his chair

> the society for the prevention of unnecessary tuesdays

every article page also tells you how many people are reading it right now. it says: "you alone are consulting this folio at present."

the creator's own tagline for the site is the most unhinged sentence i've read this year:

"an encyclopedia of a universe that does not exist until you visit it"

the entire backend is a single open source repo called vibeserver. one guy. one description on github: "a little webserver making things up just in time."

we built the largest knowledge base in human history and the very first thing a guy did with it was make a hallucinated mirror universe and put it on the open web.

the internet is healing.

pystar retweeted

"We're watching the cost of intelligence fall faster than the cost of distribution."

- @gregisenberg

Wrong!!!

Anthropic/OpenAI keep increasing prices or reducing limits.

pystar retweeted

Huge thanks to @cloudflare for the startup grant.

This is massive for keeping the momentum high while we keep shipping.

Onwards and upwards 🦸‍♂️

pystar retweeted

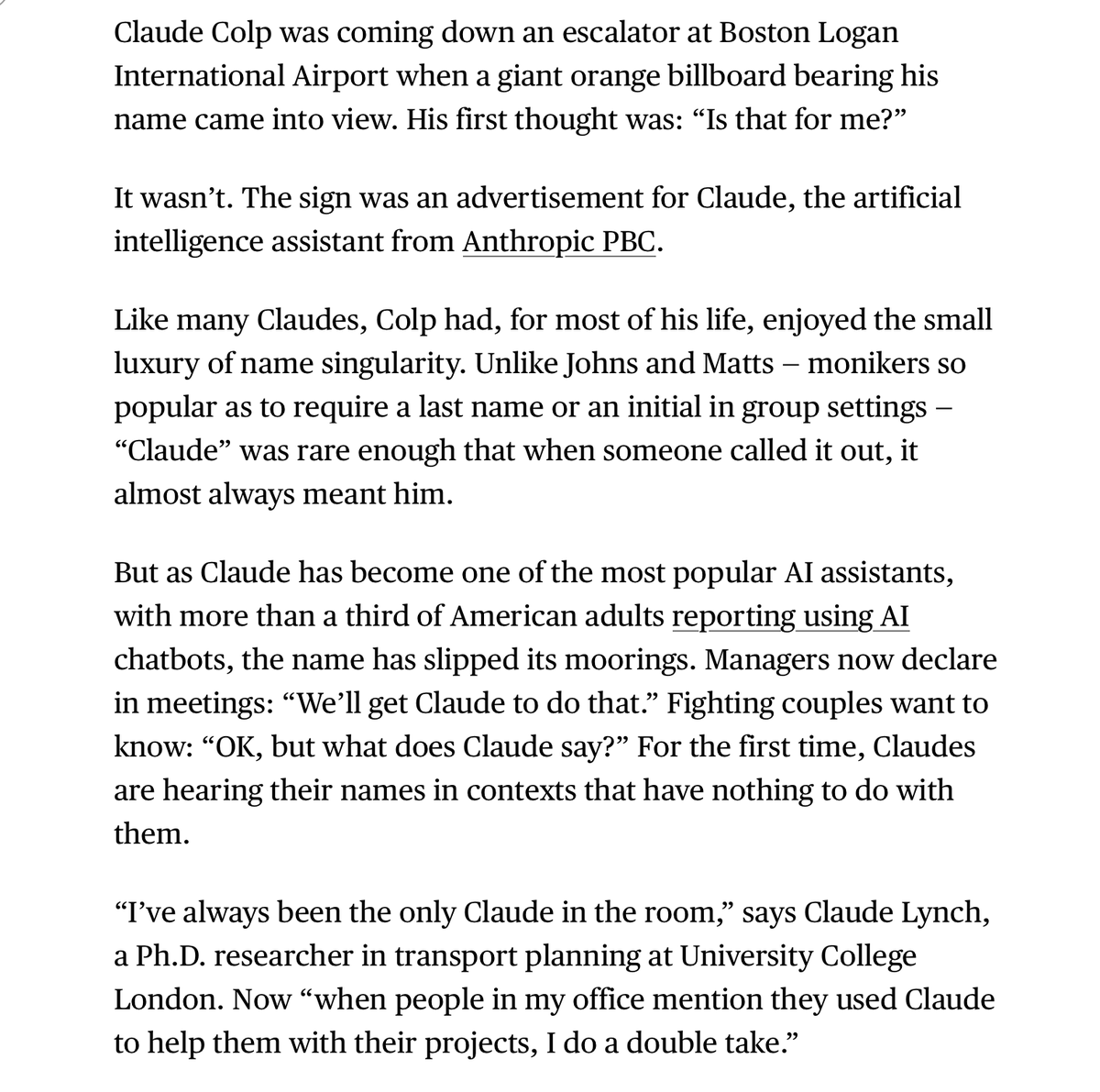

Bloomberg interviewed a bunch of people named Claude to figure out how their lives are going

Really funny story by @MADarbyshire

Posted public audits of strangers' websites.

Reddit roasted me.

Wrote about it anyway.

hunchbank.com/blog/founder-d…
