Pinned Tweet

Yang You

33 posts


@q456cvb

Postdoc in the Geometric Computation group led by Prof. Leonidas Guibas at Stanford. Research interests include 3D graphics, 3D computer vision, and robotics.

Stanford · Joined January 2022

83 Following · 138 Followers

🚀 Excited to announce that DOT-Sim is accepted to #ICRA2026! We build a differentiable optical tactile simulator that captures both the physics and optics of soft sensors: an MPM-based elastic model handles large, non-linear deformations, and a learned residual renderer maps depth+normal to realistic sensor images. It calibrates to a real DenseTact in minutes, and enables zero-shot sim-to-real on object classification (85%), embedded tumor detection (90%), and sub-mm trajectory following. #ICRA2026 #Robotics #TactileSensing #Simulation #Sim2Real #AI

Joint work with amazing collaborators: Won Kyung Do, Aiden Swann, Rika Antonova, Monroe Kennedy, and Leonidas Guibas.

🔗 ArXiv: arxiv.org/abs/2604.27367
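The tweet only summarizes the renderer (depth+normal in, sensor image out, with a learned residual). As a rough sketch under stated assumptions, and not DOT-Sim's code, the snippet below estimates normals from a depth map by central differences, computes a Lambertian base image for one assumed light direction, and adds an optional per-pixel residual. `residual_fn` is a hypothetical stand-in for the learned model.

```python
import math

def normals_from_depth(depth, spacing=1.0):
    """Unit normals from an H x W depth map via central differences.

    Border pixels keep the default normal (0, 0, 1).
    """
    h, w = len(depth), len(depth[0])
    normals = [[(0.0, 0.0, 1.0)] * w for _ in range(h)]
    for i in range(1, h - 1):
        for j in range(1, w - 1):
            dzdx = (depth[i][j + 1] - depth[i][j - 1]) / (2.0 * spacing)
            dzdy = (depth[i + 1][j] - depth[i - 1][j]) / (2.0 * spacing)
            n = (-dzdx, -dzdy, 1.0)
            length = math.sqrt(sum(c * c for c in n))
            normals[i][j] = tuple(c / length for c in n)
    return normals

def lambertian(normals, light=(0.0, 0.0, 1.0)):
    """Base image: clamped dot product of each normal with an assumed unit light direction."""
    return [[max(0.0, sum(n * l for n, l in zip(normal, light))) for normal in row]
            for row in normals]

def render(depth, residual_fn=None):
    """Base shading plus an optional residual(depth, normal) correction per pixel."""
    normals = normals_from_depth(depth)
    image = lambertian(normals)
    if residual_fn is None:
        return image
    return [[b + residual_fn(d, n) for b, d, n in zip(b_row, d_row, n_row)]
            for b_row, d_row, n_row in zip(image, depth, normals)]
```

A flat depth map renders uniformly bright, while a tilted plane darkens by the cosine of its tilt; a learned residual would then account for whatever this simple shading model misses.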


Yang You retweeted

Generative models can create visually stunning 3D rooms, but are they functional for the agents inside them? 🛋️🤖

Introducing SceneTeract, a framework that verifies 3D scene functionality under agent-specific constraints!

📄: arxiv.org/abs/2603.29798

🌐: sceneteract.github.io


Yang You retweeted

Spatial representations are central to world models 🌍. SuperDec is an extremely compact 3D scene representation (replacing millions of Gaussians with just a few hundred primitives), ideal for abstract reasoning and planning in 3D ➡️ super-dec.github.io

✨Oral @ICCVConference

Elisabetta Fedele @efedele16

Are photorealistic representations all we need? In SuperDec, we turn millions of points into compact and modular abstractions made of just a few superquadrics!🧩 Try our code and get a compact representation of your favorite scene!🚀 👾: github.com/elisabettafede…
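SuperDec's primitives are superquadrics. For readers unfamiliar with them, the standard superquadric inside-outside function is sketched below in the primitive's local frame. The pose (rotation and translation) that a full decomposition also needs is omitted, and the function names are illustrative, not taken from the SuperDec code.

```python
def inside_outside(point, scale, eps1, eps2):
    """Standard superquadric inside-outside function F in the primitive's local frame.

    scale = (a1, a2, a3) are the semi-axis lengths; eps1 and eps2 are the shape exponents.
    F < 1 inside, F == 1 on the surface, F > 1 outside.
    """
    x, y, z = point
    a1, a2, a3 = scale
    xy = (abs(x / a1) ** (2.0 / eps2) + abs(y / a2) ** (2.0 / eps2)) ** (eps2 / eps1)
    return xy + abs(z / a3) ** (2.0 / eps1)

def fraction_inside(points, scale, eps1, eps2):
    """Share of points covered by one primitive (a crude fitting diagnostic)."""
    return sum(inside_outside(p, scale, eps1, eps2) <= 1.0 for p in points) / len(points)
```

With eps1 = eps2 = 1 and unit scale, F reduces to x² + y² + z² (a unit sphere); as both exponents shrink toward 0 the shape approaches a box, so a corner point like (0.9, 0.9, 0.9) lies outside the sphere but inside the near-box. Two exponents plus three scales per primitive is what keeps the representation so compact.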


Yang You retweeted

Excited to share that we’ll be presenting three works at #CoRL 2025 (@corl_conf), taking place September 27–30 in Seoul, Korea! 🎉

1. SIREN: siren-robot.github.io

2. ParticleFormer: suninghuang19.github.io/particleformer…

3. ARCH: long-horizon-assembly.github.io

Suning Huang @suning_huang

Unfortunately, I cannot attend the conference in person this year, but our co-author @jiankai will be presenting ParticleFormer at @corl_conf! Sep 30, Spotlight Session 5 and Poster Session 3. x.com/suning_huang/s…


🚀 Excited to announce that Img2CAD is accepted to #SIGGRAPHAsia2025! We reverse engineer 3D CAD from a single 2D image by factorizing the task: a finetuned VLM predicts discrete CAD programs, and a TrAssembler regresses continuous parameters. It works well on common ShapeNet objects such as chairs and tables, and generalizes to images in the wild. #SIGGRAPHAsia2025 #3D #CAD #ComputerGraphics #VisionLanguage #AI

🔗 ArXiv: arxiv.org/abs/2408.01437

🖥️ Project: qq456cvb.github.io/projects/img2c…

💻 Code: github.com/qq456cvb/Img2C…


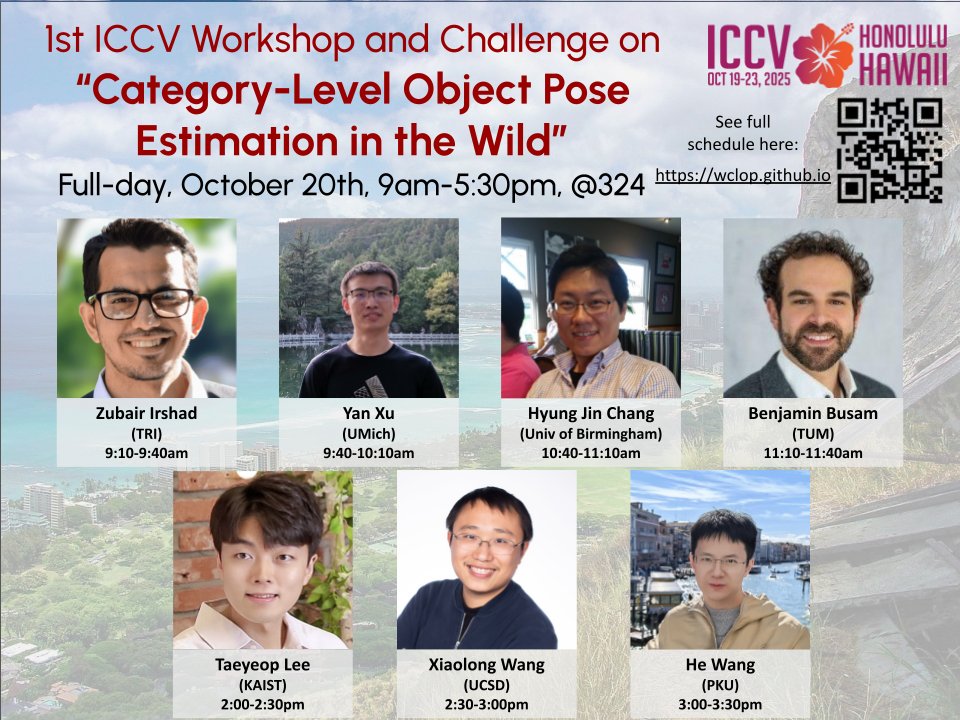

Call for Papers & Challenge Participants | WCLOP @ ICCV 2025

📆 Paper Deadline: 22 September 2025 (AoE)

🔔 Notification: 29 September 2025

📍 Venue: Honolulu, Hawaii

We’re hosting the cross-disciplinary workshop “WCLOP: 1st ICCV Workshop and Challenge on Category-Level Object Pose Estimation in the Wild” at ICCV 2025, spotlighting advances in category-level object pose estimation for robotic manipulation scenarios.

We warmly invite non-archival submissions:

• Short Papers — up to 4 pages

• Regular Papers — up to 8 pages

Join the WCLOP 2025 Challenge as well:

Two-phase evaluation on Omni6DPose & PACE datasets, followed by downstream robot-manipulation tasks

📆 Phase 1 deadline: 15 September 2025

💰 Over $6,000 in awards for challenge winners

Several leading experts will deliver keynote talks.

Let’s push the boundaries of category-level pose estimation 🤝

🔗 Website: wclop.github.io

🔗 Paper Submission: openreview.net/group?id=thecv…

🔗 Challenge Portal: codabench.org/competitions/9…


Yang You retweeted

We made a @gradio demo for AllTracker!

AllTracker is the current state of the art for general-purpose point tracking. The demo gives a good sense of its accuracy: try your own videos and see for yourself!

🔗 Demo: huggingface.co/spaces/aharley…

💻 Code: github.com/aharley/alltra…


Yang You retweeted

Excited to share our work:

Gaussian Mixture Flow Matching Models (GMFlow)

github.com/lakonik/gmflow

GMFlow generalizes diffusion models by predicting Gaussian mixture denoising distributions, enabling precise few-step sampling and high-quality generation.
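To make "Gaussian mixture denoising distribution" concrete: instead of a single predicted mean, the model outputs mixture weights and component means. The sketch below only shows how such a mixture can be summarized (its mean) and sampled. The shared isotropic `std`, the softmax over logits, and all function names are assumptions; this is not GMFlow's parameterization or its few-step sampler.

```python
import math
import random

def softmax(logits):
    """Turn unnormalized component logits into mixture weights."""
    m = max(logits)
    exps = [math.exp(v - m) for v in logits]
    total = sum(exps)
    return [e / total for e in exps]

def mixture_mean(weights, means):
    """Mean of the mixture: sum_k w_k * mu_k."""
    dim = len(means[0])
    return [sum(w * mu[d] for w, mu in zip(weights, means)) for d in range(dim)]

def mixture_sample(weights, means, std, rng):
    """Pick component k ~ Categorical(weights), then draw x ~ N(mu_k, std^2 I)."""
    k = rng.choices(range(len(weights)), weights=weights, k=1)[0]
    return [rng.gauss(m, std) for m in means[k]]
```

With a single component the mixture mean is just the one predicted mean, matching an ordinary mean-predicting denoiser; with several components the predicted distribution can stay multimodal instead of collapsing to an average of the modes.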


🚀 Excited to announce that our work on enhancing the 3D awareness of Vision Transformers (ViT) is accepted to #ICLR2025! We evaluate ViT models' 3D equivariance and introduce a simple finetuning strategy using 3D correspondences, achieving remarkable improvements in pose estimation, tracking, and semantic transfer even with minimal finetuning.

🔗 Arxiv paper: arxiv.org/abs/2411.19458

💻 Code is now available at github.com/qq456cvb/3DCor…; check it out!

🤗 Try out the demo on Hugging Face: huggingface.co/spaces/qq456cv…

🙏 Grateful to my amazing co-authors Yixin Li, Congyue Deng (@CongyueD), Yue Wang (@yuewang314), Leonidas Guibas for the contributions!

#ICLR2025 #3Dvision
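The core idea is that features of the same 3D point should agree across views. As a hedged sketch (the paper's actual objective and evaluation may differ), the snippet below scores known cross-view correspondences with a simple cosine-similarity loss and with nearest-neighbor matching accuracy, a crude proxy for multiview equivariance. The list-of-vectors feature format and the `(i, j)` match pairs are assumptions.

```python
import math

def cosine(u, v):
    """Cosine similarity between two feature vectors."""
    dot = sum(a * b for a, b in zip(u, v))
    return dot / (math.sqrt(sum(a * a for a in u)) * math.sqrt(sum(b * b for b in v)))

def correspondence_loss(feats_a, feats_b, matches):
    """Mean (1 - cosine similarity) over matched patch pairs (i in view A, j in view B)."""
    return sum(1.0 - cosine(feats_a[i], feats_b[j]) for i, j in matches) / len(matches)

def nn_match_accuracy(feats_a, feats_b, matches):
    """Fraction of matches whose nearest neighbor in view B (by cosine) is the true partner."""
    hits = 0
    for i, j in matches:
        best = max(range(len(feats_b)), key=lambda k: cosine(feats_a[i], feats_b[k]))
        hits += best == j
    return hits / len(matches)
```

Minimizing a loss of this kind on a small set of correspondences pulls matching features together, which should show up as higher nearest-neighbor accuracy on held-out views.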


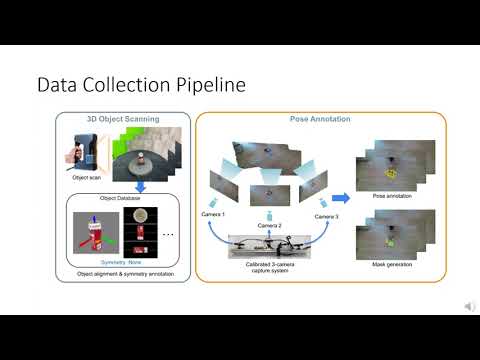

🚀 Excited to share our PACE dataset at #ECCV2024! 📊 PACE (Pose Annotations in Cluttered Environments) is a large-scale benchmark for 3D pose estimation in real-world, cluttered scenes with 55K frames, 258K annotations, and 238 objects across 43 categories. It includes PACE-Sim with 100K simulated frames and 2.4M annotations. We evaluate state-of-the-art methods in both pose estimation and tracking, revealing their limits in real-world scenarios. Also, an innovative 3-camera annotation system boosts pose labeling efficiency.

🗓️ Poster #191 on Fri, Oct 4, 10:30am-12:30pm CEST at the Exhibition Area!

🔗 Dataset: huggingface.co/datasets/qq456…

🌐 Project: qq456cvb.github.io/projects/pace

💻 Code: github.com/qq456cvb/PACE

🎥 Video: youtu.be/RX1K-xA99ZI

#3Dvision #PoseEstimation #ECCV2024 #eccvconf
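The tweet does not spell out PACE's metrics. A common way to score 6D pose estimates is the geodesic rotation error plus the translation error, often thresholded jointly (e.g. 5°/5 cm). The sketch below is a generic version of those metrics, an assumption rather than PACE's evaluation code, and it ignores the symmetry handling that real benchmarks apply.

```python
import math

def rotation_error_deg(r_pred, r_gt):
    """Geodesic angle between two 3x3 rotation matrices, in degrees."""
    # trace(R_pred^T R_gt) equals the elementwise sum of R_pred * R_gt.
    trace = sum(r_pred[i][j] * r_gt[i][j] for i in range(3) for j in range(3))
    cos_theta = max(-1.0, min(1.0, (trace - 1.0) / 2.0))  # clamp for numerical safety
    return math.degrees(math.acos(cos_theta))

def translation_error_cm(t_pred, t_gt):
    """Euclidean translation error, assuming translations are given in meters."""
    return 100.0 * math.dist(t_pred, t_gt)

def pose_accuracy(preds, gts, max_deg=5.0, max_cm=5.0):
    """Fraction of (R, t) predictions within both thresholds."""
    hits = sum(
        rotation_error_deg(rp, rg) <= max_deg and translation_error_cm(tp, tg) <= max_cm
        for (rp, tp), (rg, tg) in zip(preds, gts)
    )
    return hits / len(gts)
```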



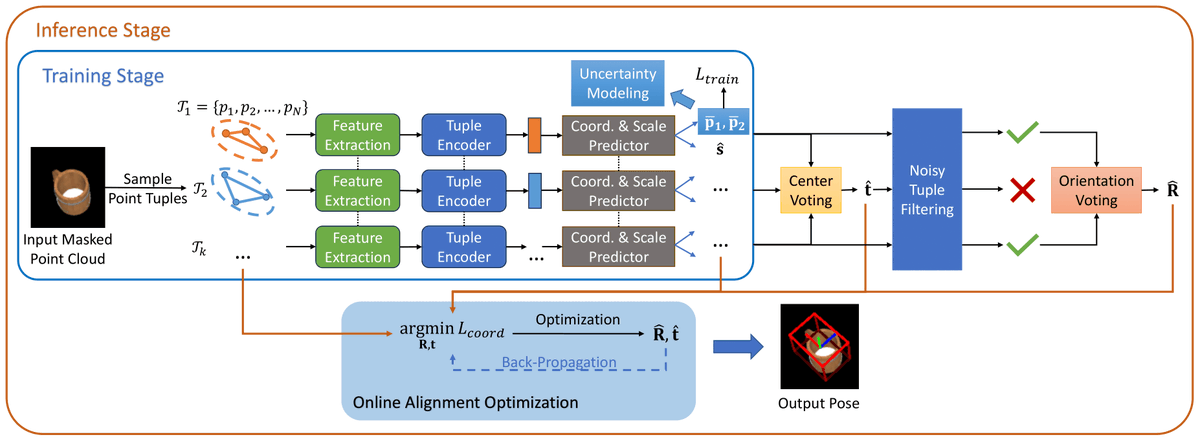

Excited to share our work RPMArt, accepted by #IROS2024 🎉🎉🎉!

RPMArt takes a noisy single-view point cloud as input and, by voting, robustly estimates the articulation parameters and manipulates the articulated part.

👉Explore r-pmart.github.io for more!

#Robotics
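The tweet credits voting for RPMArt's robustness but does not give details. As a generic illustration of why voting tolerates noise, and not RPMArt's actual scheme, the sketch below lets each point vote for the axis origin (its position plus a predicted offset), keeps the most popular voxel, and averages the votes there; per-point axis directions are fused after sign alignment. The per-point predictions are assumed inputs, and `cell` is a hypothetical voxel size.

```python
import math
from collections import Counter

def vote_axis_origin(points, offsets, cell=0.1):
    """Hough-style vote: return the mean of the votes that fall into the most popular voxel."""
    votes = [tuple(p[d] + o[d] for d in range(3)) for p, o in zip(points, offsets)]
    keys = [tuple(math.floor(v[d] / cell) for d in range(3)) for v in votes]
    best_key, _ = Counter(keys).most_common(1)[0]
    inliers = [v for v, k in zip(votes, keys) if k == best_key]
    return tuple(sum(v[d] for v in inliers) / len(inliers) for d in range(3))

def fuse_axis_direction(directions):
    """Average unit direction votes after flipping each to agree in sign with the first."""
    ref = directions[0]
    acc = [0.0, 0.0, 0.0]
    for d in directions:
        sign = 1.0 if sum(a * b for a, b in zip(d, ref)) >= 0.0 else -1.0
        acc = [a + sign * b for a, b in zip(acc, d)]
    norm = math.sqrt(sum(a * a for a in acc))
    return tuple(a / norm for a in acc)
```

Binning before averaging means a few wild votes from noisy points cannot drag the estimate away from the consensus; a fuller system would also handle votes split across voxel boundaries and vote jointly over direction.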
