Pinned Tweet

Raffaele Mauro

10K posts

@Rafr

Partner at Primo Capital - Deep Tech VC | Kauffman Fellow | Karman Fellow | EYL40 | Ph.D. | Previously Endeavor & Harvard

Milano, Italy · Joined March 2009

5.4K Following · 3.5K Followers

Happy to have contributed to the new episode of #FINTheBox with Davide Zanichelli and Paolo Bucciol. In a lively conversation we talked about space, investment, geopolitics and the flat Moon theory! (joking) Enjoy listening! 🌍 🚀 🌕 💫

youtu.be/r5Em_Z2aECU?si…

Epoch AI - ML Trends dashboard offers curated key numbers, visualizations, and insights that showcase the significant growth and impact of artificial intelligence. epoch.ai/trends

Raffaele Mauro retweeted

Terry Tao's article "Machine Assisted Proof" is out in the Notices of the AMS: ams.org/journals/notic…

Raffaele Mauro retweeted

Machines of Loving Grace: How AI Could Transform the World for the Better darioamodei.com/machines-of-lo… @DarioAmodei

Raffaele Mauro retweeted

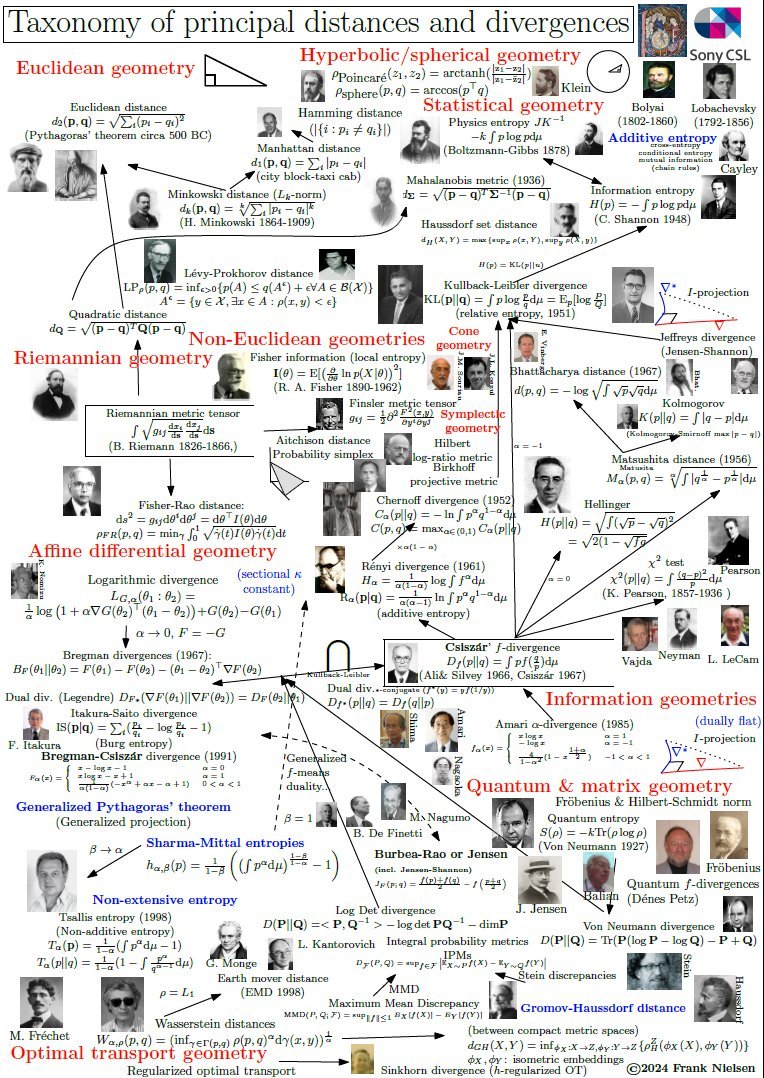

The Moore's Law Update

NOTE: this is a semi-log graph, so a straight line is an exponential; each y-axis tick is 100x. This graph covers a 1,000,000,000,000,000,000,000x improvement in computation/$. Pause to let that sink in.
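The note's figures imply an average doubling period. A minimal Python sketch, assuming the 10^21x improvement is spread evenly over the 128 years the chart covers (a simplification, since the dots are not evenly spaced in time):

```python
import math


def doubling_time_years(total_factor: float, span_years: float) -> float:
    """Average years per doubling implied by a total improvement factor over a time span."""
    doublings = math.log2(total_factor)  # number of times the quantity doubled
    return span_years / doublings


def ticks_on_log_axis(total_factor: float, factor_per_tick: float = 100.0) -> float:
    """How many y-axis ticks the improvement spans on a semi-log chart with 100x per tick."""
    return math.log(total_factor, factor_per_tick)


t = doubling_time_years(1e21, 128)
print(f"~{math.log2(1e21):.1f} doublings, one every {t:.2f} years (~{t * 12:.0f} months)")
print(f"spans {ticks_on_log_axis(1e21):.1f} ticks of 100x each")
```

Under that evenness assumption the result is roughly 22 months per doubling, between the 18- and 24-month figures usually quoted for Moore's Law.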

Humanity’s capacity to compute has compounded for as long as we can measure it, exogenous to the economy, and starting long before Intel co-founder Gordon Moore noticed a refraction of the longer-term trend in the belly of the fledgling semiconductor industry in 1965.

I have color-coded it to show the transition among the integrated circuit architectures. You can see how the mantle of Moore's Law has transitioned most recently from the GPU (green dots) to the ASIC (yellow and orange dots), and the NVIDIA Hopper architecture itself is a transitionary species: from GPU to ASIC, with 8-bit performance optimized for AI models, which account for the majority of new compute cycles.

There are thousands of invisible dots below the line, which traces the frontier of humanity's capacity to compute (e.g., everything from Intel in the past 15 years). The computational frontier has shifted across many technology substrates over the past 128 years. Intel ceded leadership to NVIDIA 15 years ago, and further handoffs are inevitable.

Why the transition within the integrated circuit era? Intel lost to NVIDIA for neural networks because the fine-grained parallel compute architecture of a GPU maps better to the needs of deep learning. There is a poetic beauty in the computational similarity between a processor optimized for graphics processing and the computational needs of a sensory cortex, as commonly seen in the neural networks of 2014. A custom ASIC chip optimized for neural networks extends that trend to its inevitable future in the digital domain. Further advances are possible with analog in-memory compute, an even closer biomimicry of the human cortex. The best business planning assumption is that Moore’s Law, as depicted here, will continue for the next 20 years as it has for the past 128. (Note: the top-right dot for Mythic is a prediction for 2026 showing the effect of a simple process shrink from an ancient 40nm process node.)

----

For those unfamiliar with this chart, here is a more detailed description:

Moore's Law is both a prediction and an abstraction. It is commonly reported as a doubling of transistor density every 18 months. But this is not something the co-founder of Intel, Gordon Moore, has ever said. It is a nice blending of his two predictions; in 1965, he predicted an annual doubling of transistor counts in the most cost effective chip and revised it in 1975 to every 24 months. With a little hand waving, most reports attribute 18 months to Moore’s Law, but there is quite a bit of variability. The popular perception of Moore’s Law is that computer chips are compounding in their complexity at near constant per unit cost. This is one of the many abstractions of Moore’s Law, and it relates to the compounding of transistor density in two dimensions. Others relate to speed (the signals have less distance to travel) and computational power (speed x density).
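The spread between those doubling periods compounds quickly. A small Python sketch of the growth factor 2^(t/T) over one decade for the three periods mentioned above (12, 18 and 24 months):

```python
def growth_factor(years: float, doubling_months: float) -> float:
    """Total multiplication after `years` if the quantity doubles every `doubling_months`."""
    return 2 ** (years * 12 / doubling_months)


for months in (12, 18, 24):  # Moore's 1965 rate, the popular figure, Moore's 1975 revision
    print(f"{months}-month doubling over 10 years: {growth_factor(10, months):,.0f}x")
```

A decade at the 1965 rate gives 1,024x, the 18-month figure about 102x, and the 1975 rate 32x, which is why the choice of period matters so much for long-range extrapolation.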

Unless you work for a chip company and focus on fab-yield optimization, you do not care about transistor counts. Integrated circuit customers do not buy transistors. Consumers of technology purchase computational speed and data storage density. When recast in these terms, Moore’s Law is no longer a transistor-centric metric, and this abstraction allows for longer-term analysis.

What Moore observed in the belly of the early IC industry was a derivative metric, a refracted signal, from a longer-term trend, a trend that raises various philosophical questions and predicts mind-bending AI futures.

In the modern era of accelerating change in the tech industry, it is hard to find even five-year trends with any predictive value, let alone trends that span the centuries.

I would go further and assert that this is the most important graph ever conceived. A large and growing set of industries depends on continued exponential cost declines in computational power and storage density. Moore’s Law drives electronics, communications and computers and has become a primary driver in drug discovery, biotech and bioinformatics, medical imaging and diagnostics. As Moore’s Law crosses critical thresholds, a field that was formerly a lab science of trial-and-error experimentation becomes a simulation science, and the pace of progress accelerates dramatically, creating opportunities for new entrants in new industries. Consider the autonomous software stack for Tesla and SpaceX and the impact that is having on the automotive and aerospace sectors.

Every industry on our planet is going to become an information business. Consider agriculture. If you ask a farmer in 20 years’ time about how they compete, it will depend on how they use information — from satellite imagery driving robotic field optimization to the code in their seeds. It will have nothing to do with workmanship or labor. That will eventually percolate through every industry as IT innervates the economy.

Non-linear shifts in the marketplace are also essential for entrepreneurship and meaningful change. Technology’s exponential pace of progress has been the primary juggernaut of perpetual market disruption, spawning wave after wave of opportunities for new companies. Without disruption, entrepreneurs would not exist.

Moore’s Law is not just exogenous to the economy; it is why we have economic growth and an accelerating pace of progress. At Future Ventures, we see that in the growing diversity and global impact of the entrepreneurial ideas that come to us each year, from automobiles and aerospace to energy and chemicals.

We live in interesting times, at the cusp of the frontiers of the unknown and breathtaking advances. But it should always feel that way, engendering a perpetual sense of future shock.

Teen Mathematicians Tie Knots Through a Mind-Blowing Fractal quantamagazine.org/teen-mathemati… via @QuantaMagazine

Raffaele Mauro retweeted

... any *knot* in the Menger sponge: you can deform any knot without the string having to pass through itself so that it ends up as a subset of the Menger sponge.

quantamagazine.org/teen-mathemati…

arxiv.org/abs/2409.03639
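For readers unfamiliar with the Menger sponge: it is built by splitting a cube into 27 subcubes, removing the center subcube and the six face-center subcubes, and repeating on every remaining subcube. A minimal Python sketch of the level-n approximation as a grid of 3^n × 3^n × 3^n cells, using the standard characterization that a cell is removed when, at some base-3 digit position, at least two of its coordinates have digit 1 (function names are illustrative):

```python
from itertools import product


def in_menger_sponge(x: int, y: int, z: int, level: int) -> bool:
    """True if grid cell (x, y, z) survives `level` steps of the Menger construction."""
    for _ in range(level):
        # Count coordinates whose current base-3 digit is 1 (the "middle third").
        middles = (x % 3 == 1) + (y % 3 == 1) + (z % 3 == 1)
        if middles >= 2:
            return False  # the cell lies in a removed center or face-center subcube
        x, y, z = x // 3, y // 3, z // 3
    return True


def count_cells(level: int) -> int:
    """Number of surviving cells at a given level; should equal 20**level."""
    size = 3 ** level
    return sum(in_menger_sponge(x, y, z, level) for x, y, z in product(range(size), repeat=3))


for n in range(4):
    print(n, count_cells(n), 20 ** n)
```

Each step keeps 20 of every 27 subcubes, so the volume shrinks to zero while the sponge stays connected, which is part of what makes the knot result surprising.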

A ‘Wikipedia for cells’: researchers get an updated look at the Human Cell Atlas, and it’s remarkable nature.com/articles/d4158…

Raffaele Mauro retweeted

There have been several remarkable developments in combinatorics, my field of mathematics. A few weeks ago I gave a talk to a general mathematical audience in which I described six breakthroughs from the last five years.

youtube.com/watch?v=726OMr…

Raffaele Mauro retweeted

It’s shocking how much we know about how learning happens, all the way down to the mechanics of what’s going on in the brain.

And it’s not just how learning happens, but also, what we can do to improve learning.

There are plenty of learning-enhancing practice strategies that have been tested scientifically, numerous times, and are completely replicable. They might as well be laws of physics.

For instance: we know that actively solving problems produces more learning than passively watching a video/lecture or re-reading notes.

(To be clear: active learning doesn’t mean that students never watch and listen. It just means that students are actively solving problems as soon as possible following a minimum effective dose of initial explanation, and they spend the vast majority of their time actively solving problems.)

Another finding: if you don’t review information, you forget it. You can actually model this precisely, mathematically, using a forgetting curve. I’m not exaggerating when I refer to these things as laws of physics – the only real difference is that we’ve gone up several levels of scale and are dealing with noisier stochastic processes (that also have noisier underlying variables).
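One common way to write a forgetting curve is the exponential form R(t) = e^(-t/S), where R is the probability of recall t days after a review and S is a memory-stability parameter. The thread does not name a specific model, so this form and the stability values below are assumptions for illustration:

```python
import math


def retention(days_since_review: float, stability: float) -> float:
    """Estimated probability of recall under an assumed exponential forgetting curve."""
    return math.exp(-days_since_review / stability)


def days_until(threshold: float, stability: float) -> float:
    """Days until predicted recall falls to `threshold` (solve e^(-t/S) = threshold for t)."""
    return -stability * math.log(threshold)


# Hypothetical stabilities: a weak memory (S = 2 days) vs. a well-reviewed one (S = 20 days).
for s in (2.0, 20.0):
    print(f"S={s:>4}: recall after 7 days = {retention(7, s):.2f}, "
          f"review due at 90% recall after {days_until(0.9, s):.1f} days")
```

Larger S flattens the curve. In this framing, the claim that well-spaced reviews let you wait longer before the next one corresponds to each successful review increasing S.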

Okay, but aren’t these findings obvious? Yes, but…

Yes, but in education, obvious strategies often aren't put into practice. For instance, plenty of classes still run on a pure lecture format and don't review previously learned material unless it's the day before a test.

Yes, but there are plenty of other findings that replicate just as well but are not so obvious.

Here are some less obvious findings.

-- The spacing effect: more long-term retention occurs when you space out your practice, even if it's the same amount of total practice.

-- A profound consequence of the spacing effect is that the more reviews are completed (with appropriate spacing), the longer the memory will be retained, and the longer one can wait until the next review is needed. This observation gives rise to a systematic method for reviewing previously-learned material called spaced repetition (or distributed practice). A "repetition" is a successful review at the appropriate time.

-- To maximize the amount by which your memory is extended when solving review problems, it's necessary to avoid looking back at reference material unless you are totally stuck and cannot remember how to proceed. This is called the testing effect, also known as the retrieval practice effect: the best way to review material is to test yourself on it, that is, practice retrieving it from memory, unassisted.

-- The testing effect can be combined with spaced repetition to produce an even more potent learning technique known as spaced retrieval practice.

-- During review, it's also best to spread minimal effective doses of practice across various skills. This is known as mixed practice or interleaving -- it's the opposite of "blocked" practice, which involves extensive consecutive repetition of a single skill. Blocked practice can give a false sense of mastery and fluency because it allows students to settle into a robotic rhythm of mindlessly applying one type of solution to one type of problem. Mixed practice, on the other hand, creates a "desirable difficulty" that promotes vastly superior retention and generalization, making it a more effective review strategy.

-- To free up mental processing power, it's critical to practice low-level skills enough that they can be carried out without requiring conscious effort. This is known as automaticity. Think of a basketball player who is running, dribbling, and strategizing all at the same time -- if they had to consciously manage every bounce and every stride, they'd be too overwhelmed to look around and strategize. The same is true in learning.

-- The most effective type of active learning is deliberate practice, which consists of individualized training activities specially chosen to improve specific aspects of a student's performance through repetition (effortful repetition, not mindless repetition) and successive refinement. However, because deliberate practice requires intense effort focused in areas beyond one's repertoire, which makes it less enjoyable, people tend to avoid it, instead opting to ineffectively practice within their level of comfort (which is never a form of deliberate practice, no matter what activities are performed).

-- Instructional techniques that promote the most learning in experts promote the least learning in beginners, and vice versa. This is known as the expertise reversal effect. An important consequence is that effective methods of practice for students typically should NOT emulate what experts do in the professional workplace (e.g., working in groups to solve open-ended problems). Beginners (i.e., students) learn most effectively through direct instruction.
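Spaced retrieval practice and interleaving translate naturally into scheduling logic. A minimal Python sketch, assuming a simple expanding-interval rule (the gap grows by a fixed multiplier after each review passed from memory without reference material, and resets after a failure; the 2.5 multiplier is a hypothetical choice) and a round-robin rule for interleaving review problems across skills. It illustrates the ideas, not the scheduler of any particular system:

```python
from itertools import chain, zip_longest


def review_days(outcomes, first_interval=1.0, growth=2.5):
    """Day on which each review happens, given whether each review was passed.

    A passed, unassisted review (a "repetition") stretches the next gap by `growth`;
    a failed one resets the gap, since the memory needs rebuilding.
    """
    day, interval, days = 0.0, first_interval, []
    for passed in outcomes:
        day += interval
        days.append(day)
        interval = interval * growth if passed else first_interval
    return days


def blocked(problems_by_skill):
    """Blocked practice: every problem for one skill, then the next skill."""
    return list(chain.from_iterable(problems_by_skill.values()))


def interleaved(problems_by_skill):
    """Interleaved practice: take one problem from each skill in turn."""
    rounds = zip_longest(*problems_by_skill.values())
    return [p for rnd in rounds for p in rnd if p is not None]


print(review_days([True, True, True, True]))  # gaps grow: 1, 2.5, 6.25, 15.625 days
print(review_days([True, False, True]))       # a failure shrinks the next gap
session = {"fractions": ["F1", "F2"], "ratios": ["R1", "R2"], "percents": ["P1"]}
print(blocked(session))
print(interleaved(session))
```

The interleaved order never lets two problems of the same skill sit next to each other while other skills remain, which removes the "robotic rhythm" that blocked practice allows.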

Now, this might seem like a lot of new information -- a common reaction is “Wow, the field of education is experiencing a revolution!”

But here’s the thing:

Most key findings have been known for many decades.

It’s just that they’re not widely known or circulated outside the niche fields of cognitive science & talent development, not even in seemingly adjacent fields like education.

These findings are not taught in school, and typically not even in credentialing programs for teachers themselves – no wonder they’re unheard of!

But if you just do a literature review on Google Scholar, all the research is right there – and it’s been around for many decades.

Naturally, this leads us to the following question:

Why aren't these key findings being leveraged in classrooms? Why do they remain relatively unknown?

Here are a handful of reasons that I’m aware of.

1. Leveraging them (at all) requires additional effort from both teachers and students.

In some way or another, each strategy increases the intensity of effort required from students and/or instructors, and the extra effort is then converted into an outsized gain in learning.

This theme is so well-documented in the literature that it even has a catchy name: a practice condition that makes the task harder, slowing down the learning process yet improving recall and transfer, is known as a desirable difficulty.

Desirable difficulties make practice more representative of true assessment conditions. Consequently, it is easy for students (and their teachers) to vastly overestimate their knowledge if they do not leverage desirable difficulties during practice, a phenomenon known as the illusion of comprehension.

However, the typical teacher is incentivized to maximize the immediate performance and/or happiness of their students, which biases them against introducing desirable difficulties and incentivizes them to promote illusions of comprehension.

Using desirable difficulties exposes the reality that students didn’t actually learn as much as they (and their teachers) “felt” they did under less effortful conditions. This reality is inconvenient to students and teachers alike; therefore, it is common to simply believe the illusion of learning and avoid activities that might present evidence to the contrary.

2. Leveraging cognitive learning strategies to their fullest extent requires an inhuman amount of effort from teachers.

Let’s imagine a classroom where these strategies are being used to their fullest extent.

-- Every individual student is fully engaged in productive problem-solving, with immediate feedback (including remedial support when necessary), on the specific types of problems, and in the specific types of settings (e.g., with vs without reference material, blocked vs interleaved, timed vs untimed), that will move the needle the most for their personal learning progress at that specific moment in time.

-- This is happening throughout the entirety of class time, the only exceptions being those brief moments when a student is introduced to a new topic and observes a worked example before jumping into active problem-solving.

Why is this an inhuman amount of work?

-- First of all, it's at best extremely difficult, and at worst (and most commonly) impossible, to find a type of problem that is productive for all students in the class. Even if a teacher chooses a type of problem that is appropriate for what they perceive to be the "class average" knowledge profile, it will typically be too hard for many students and too easy for many others (an unproductive use of time for those students either way).

-- Additionally, to even know the specific problem types that each student needs to work on, the teacher has to separately track each student's progress on each problem type, manage a spaced repetition schedule of when each student needs to review each topic, and continually update each schedule based on the student's performance (which can be incredibly complicated given that each time a student learns or reviews an advanced topic, they're implicitly reviewing many simpler topics, all of whose repetition schedules need to be adjusted as a result, depending on how the student performed). This is an inhuman amount of bookkeeping and computation.

-- Furthermore, even on the rare occasion that a teacher manages to find a type of problem that is productive for all students in the class, different students will require different amounts of practice to master the solution technique. Some students will catch on quickly and be ready to move on to more difficult problems after solving just a couple of problems of the given type, while other students will require many more attempts before they are able to solve problems of the given type successfully on their own. Additionally, some students will solve problems quickly while others will require more time.
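The implicit-review bookkeeping described above can be made concrete. The sketch below assumes a hypothetical graph in which each advanced topic "encompasses" simpler ones with a weight between 0 and 1 (roughly, how much of the simpler skill it exercises); a successful review of one topic then propagates fractional credit down the graph, keeping the strongest path to each component. Topic names and weights are invented for illustration:

```python
from collections import deque

# Hypothetical "encompassing" graph: reviewing a topic implicitly exercises its components.
# Edge weights (0..1) are illustrative guesses of how much of each component gets practiced.
ENCOMPASSES = {
    "solve_quadratic": {"factor_trinomial": 0.5, "square_root": 0.3},
    "factor_trinomial": {"multiply_binomials": 0.5},
    "square_root": {},
    "multiply_binomials": {},
}


def implicit_credit(topic: str, graph: dict) -> dict:
    """Fraction of a repetition credited to each topic when `topic` is reviewed successfully."""
    credit = {topic: 1.0}
    queue = deque([topic])
    while queue:
        current = queue.popleft()
        for child, weight in graph.get(current, {}).items():
            amount = credit[current] * weight  # credit decays along each edge
            if amount > credit.get(child, 0.0):  # keep only the strongest path
                credit[child] = amount
                queue.append(child)
    return credit


print(implicit_credit("solve_quadratic", ENCOMPASSES))
```

In a full system each credited topic's review schedule would then be updated from these fractions, per student, after every attempt, which is the scale of computation the author calls inhuman for a single teacher.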

In the absence of the proper technology, it is impossible for a single human teacher to deliver an optimal learning experience to a classroom of many students with heterogeneous knowledge profiles, who all need to work on different types of problems and receive immediate feedback on each attempt.

3. Most edtech systems do not actually leverage the above findings.

If you pick any edtech system off the shelf and check whether it leverages each of the cognitive learning strategies I’ve described above, you’ll probably be surprised at how few it actually uses. For instance:

-- Tons of systems don't scaffold their content into bite-sized pieces.

-- Tons of systems allow students to move on to more material despite not demonstrating knowledge of prerequisite material.

-- Tons of systems don't do spaced review. (Moreover, tons of systems don't do ANY review.)

Sometimes a system will appear to leverage some finding, but if you look more closely it turns out that this is actually an illusion that is made possible by cutting corners somewhere less obvious. For instance:

-- Tons of systems offer bite-sized pieces of content, BUT they accomplish this by watering down the content, cherry-picking the simplest cases of each problem type, and skipping lots of content that would reasonably be covered in a standard textbook.

-- Tons of systems make students do prerequisite lessons before moving on to more advanced lessons, BUT they don't actually measure tangible mastery on prerequisite lessons. Simply watching a video and/or attempting some problems is not mastery. The student has to actually be getting problems right, and those problems have to be representative of the content covered in the lesson.

-- Tons of systems claim to help students when they're struggling, BUT the way they do this is by lowering the bar for success on the learning task (e.g., by giving away hints). Really, what the system needs to do is take actions that are most likely to strengthen a student's area of weakness and empower them to clear the bar fully and independently on their next attempt.
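The gap between "attempted some problems" and mastery is easy to encode. A minimal Python sketch, assuming a hypothetical rule that a lesson counts as mastered only after a run of consecutive correct answers given without hints (the streak length of 3 is arbitrary, and the sketch does not check that the problems are representative of the lesson):

```python
from dataclasses import dataclass


@dataclass
class Attempt:
    correct: bool
    used_hint: bool  # a hinted answer lowered the bar, so it is not evidence of mastery


def has_mastered(attempts: list[Attempt], required_streak: int = 3) -> bool:
    """True if the most recent attempts end in a long enough run of unassisted correct answers."""
    streak = 0
    for attempt in reversed(attempts):
        if attempt.correct and not attempt.used_hint:
            streak += 1
            if streak >= required_streak:
                return True
        else:
            break  # the latest run of independent successes ends here
    return False


watched_only: list[Attempt] = []  # watched the video, solved nothing
hinted = [Attempt(True, False), Attempt(True, True), Attempt(True, False)]
solid = [Attempt(False, False)] + [Attempt(True, False)] * 3
print(has_mastered(watched_only), has_mastered(hinted), has_mastered(solid))  # False False True
```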

Now, I’m not saying that these issues apply to all edtech systems. I do think edtech is the way forward here – optimal teaching is an inhuman amount of work, and technology is needed. Heck, I personally developed all the quantitative software behind one system that properly handles the above challenges. All I’m saying is that you can’t just take these things at face value. Many edtech systems don’t really work from a learning standpoint, just as many psychology findings don’t hold up in replication – but at the same time, some edtech systems do work, shockingly well, just as some cognitive psychology findings do hold up and can be leveraged to massively increase student learning.

4. Even if you leverage the above findings, you still have to hold students accountable for learning.

Suppose you have the Platonic ideal of an edtech system that leverages all the above cognitive learning strategies to their fullest extent.

Can you just put a student on it and expect them to learn? Heck no! That would only work for exceptionally motivated students.

Most students are not motivated to learn the subject material. They need a responsible adult – such as a parent or a teacher – to incentivize them and hold them accountable for their behavior.

I can’t tell you how many times I’ve seen the following situation play out:

-- Adult puts a student on an edtech system.

-- Student goofs off doing other things instead (e.g., watching YouTube).

-- Adult checks in, realizes the student is not accomplishing anything, and asks the student what's going on.

-- Student says that the system is too hard or otherwise doesn't work.

-- Adult might take the student's word at face value. Or, if the adult notices that the student hasn't actually attempted any work and calls them out on it, the scenario repeats with the student putting forth as little effort as possible -- enough to convince the adult that they're trying, but not enough to really make progress.

In these situations, here’s what needs to happen:

-- The adult needs to sit down next to the student and force them to actually put forth the effort required to use the system properly.

-- Once it's established that the student is able to make progress by putting forth sufficient effort, the adult needs to continue holding the student accountable for their daily progress. If the student ever stops making progress, the adult needs to sit down next to the student again and get them back on the rails.

-- To keep the student on the rails without having to sit down next to them all the time, the adult needs to set up an incentive structure. Even little things go a long way, like "if you complete all your work this week then we'll go get ice cream on the weekend," or "no video games tonight until you complete your work." The incentive has to be centered around something that the student actually cares about, whether that be dessert, gaming, movies, books, etc.

Even if an adult puts a student on an edtech system that is truly optimal, if the adult clocks out and stops holding the student accountable for completing their work every day, then of course the overall learning outcome is going to be worse.

Ms. Sam @SciInTheMaking

Why do schools keep chasing every new educational trend when decades of proven research already show us what works? 🧵⬇️

FrontierMath: A Benchmark for Evaluating Advanced Mathematical Reasoning in AI epochai.org/frontiermath/t…

Raffaele Mauro retweeted

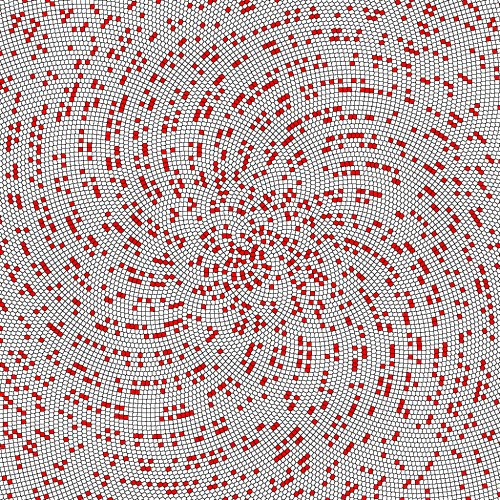

There is a fascinating connection between infinity and fractals, which are geometric shapes that have self-similarity at different scales. For example, the Mandelbrot set is a fractal that is defined by a simple formula, but it has an infinitely complex boundary that contains copies of itself at smaller and smaller scales. The area of the Mandelbrot set is finite, but its perimeter is infinite.
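The "simple formula" is the iteration z_{n+1} = z_n^2 + c starting from z_0 = 0; c belongs to the Mandelbrot set if the iterates stay bounded. A minimal Python sketch of the standard escape-time test, assuming a finite iteration cap (so points very close to the boundary can be misclassified as inside):

```python
def in_mandelbrot(c: complex, max_iter: int = 500) -> bool:
    """Escape-time test: treat c as in the set if |z| never exceeds 2 within max_iter steps."""
    z = 0j
    for _ in range(max_iter):
        z = z * z + c
        if abs(z) > 2:  # once |z| > 2 the orbit is guaranteed to diverge
            return False
    return True


def ascii_plot(width: int = 64, height: int = 24, max_iter: int = 100) -> str:
    """Coarse text rendering of the region -2 <= Re(c) <= 0.6, -1.2 <= Im(c) <= 1.2."""
    rows = []
    for j in range(height):
        im = 1.2 - 2.4 * j / (height - 1)
        row = "".join(
            "#" if in_mandelbrot(complex(-2.0 + 2.6 * i / (width - 1), im), max_iter) else "."
            for i in range(width)
        )
        rows.append(row)
    return "\n".join(rows)


print(ascii_plot())
```

Raising `width`, `height` and `max_iter` reveals ever finer detail along the boundary, the self-similarity the tweet describes.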

Raffaele Mauro retweeted

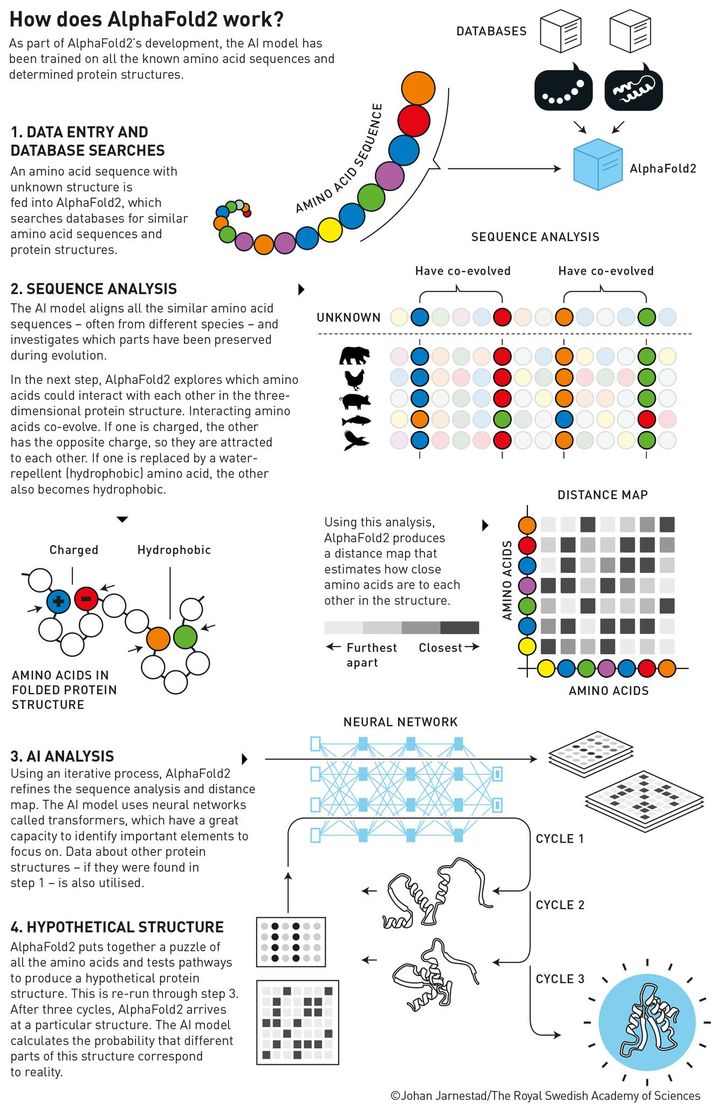

Demis Hassabis and John Jumper, two of the 2024 Nobel Prize laureates in chemistry, have developed an AI model to solve a 50-year-old problem: predicting proteins’ complex structures.

In 2020, Hassabis and Jumper presented an AI model called AlphaFold2. With its help, they have been able to predict the structure of virtually all the 200 million proteins that researchers have identified. Since their breakthrough, AlphaFold2 has been used by more than two million people from 190 countries. Among a myriad of scientific applications, researchers can now better understand antibiotic resistance and create images of enzymes that can decompose plastic.

The illustration above explains how it works.

Read more about their story: bit.ly/4diKiJ2

Raffaele Mauro retweeted