Ranjay Krishna

2K posts


@RanjayKrishna

Assistant Professor @ University of Washington, Co-Director of RAIVN lab (https://t.co/f0BWKyjoeA), Director of PRIOR team (https://t.co/l9RzTesMSM)

Grounding lets vision-language models do more than describe—they can point to where a robot should grasp, which button to click, or which object to track across video frames. Today we're releasing MolmoPoint, a better way for models to point. 🧵

VLMs already have visual tokens. Letting them point by selecting those tokens turns out to be simpler, faster, & better. 🤖 Models: huggingface.co/collections/al… 📦 Data: huggingface.co/collections/al… 💻 Code: github.com/allenai/molmo2 📖 Blog: allenai.org/blog/molmopoint

Can Vision-Language Models Solve the Shell Game? paper: huggingface.co/papers/2603.08…

Today, a step forward in open robotics - our results show that zero-shot sim-to-real transfer for manipulation is possible. MolmoBot is our open model suite for robotics, trained entirely in simulation on MolmoSpaces.🧵


📈 We introduce TOPReward, a zero-shot reward modeling approach for robotics using token probabilities from pretrained video VLMs. Instead of asking a VLM to output progress, TOPReward reads the model’s internal belief directly from token logits. No in-context learning. No fine-tuning. No reward training. The simplest way of doing reward modeling for robotics! Project: topreward.github.io/webpage/ 🧵👇

I read this paper and it's awesome - it creates a high-performing, smooth reward function (far superior to GVL) that is SUPER simple to implement with an LLM.
IMPLEMENTATION:
1. SELECT A MODEL: Pick an open-weight, multimodal LLM (e.g. Qwen3-VL).
2. PROMPT THE MODEL: Send the LLM the video followed by this prompt: "The above video shows a robot manipulation trajectory that completes the following task: {INSTRUCTION}. Decide whether the above statement is True or not. The answer is: " [where INSTRUCTION is any task like "fold the towel" or "pour coffee into the cup"]
3. EXTRACT THE REWARD: Read the score the model assigns to the specific next token "True" and use that as your reward signal. [The logit is the raw, unnormalized score the model assigns to "True" before the softmax layer; the softmax turns logits into token probabilities, and the log of that probability is the log prob. These scores are available for open-weight models and some closed-source models - for example, ChatGPT exposes log probs, whereas Claude does not]
That's it!! The key insights are reading the token score directly and using the term "True". It is quite elegant. Congrats to the brilliant authors at @UW and @allen_ai !
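A minimal sketch of step 3 above, assuming you already have the model's next-token logits for the position right after "The answer is: ". This is an illustration and not the authors' code: the function names (`build_prompt`, `log_softmax`, `true_token_reward`) and the toy logits are hypothetical, and whether to use the raw logit, the probability, or the log prob as the reward is a choice this sketch makes (log prob), not something the posts above settle.

```python
import math

# Prompt text as quoted in the post above; {instruction} is the task description.
PROMPT_TEMPLATE = (
    "The above video shows a robot manipulation trajectory that completes "
    "the following task: {instruction}. Decide whether the above statement "
    "is True or not. The answer is: "
)


def build_prompt(instruction):
    """Fill the task description into the True/False prompt."""
    return PROMPT_TEMPLATE.format(instruction=instruction)


def log_softmax(logits):
    """Numerically stable log-softmax over a list of raw logits."""
    m = max(logits)
    log_z = m + math.log(sum(math.exp(x - m) for x in logits))
    return [x - log_z for x in logits]


def true_token_reward(next_token_logits, true_token_id):
    """Return the log prob of the "True" token as the reward (assumed choice).

    next_token_logits: raw scores over the vocabulary for the next token.
    true_token_id: vocabulary index of "True" (hypothetical; look it up
    with your model's tokenizer).
    """
    return log_softmax(next_token_logits)[true_token_id]


# Toy example with a 3-token vocabulary where index 0 stands in for "True".
prompt = build_prompt("fold the towel")
reward_early = true_token_reward([0.1, 1.5, -0.3], true_token_id=0)
reward_late = true_token_reward([3.0, 0.2, -0.3], true_token_id=0)
```

With a real model, `next_token_logits` would come from a forward pass on the video plus `prompt`; a larger "True" logit relative to the rest of the vocabulary gives a higher (less negative) reward.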

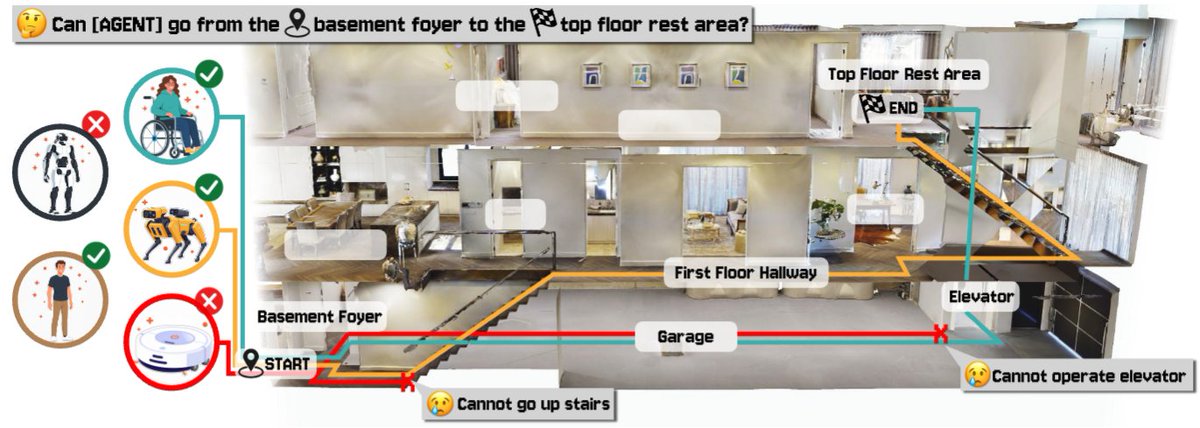

Introducing MolmoSpaces, a large-scale, fully open platform + benchmark for embodied AI research. 🤖 230k+ indoor scenes, 130k+ object models, & 42M annotated robotic grasps—all in one ecosystem.
