raullen.eth



@Raullen

🛰️ Building AI that reads the physical world — not the internet. @iotex_io cofounder 💎 | PhD @UWaterloo 🎓 | ex-@Google @Uber

Introducing TurboQuant: Our new compression algorithm that reduces LLM key-value cache memory by at least 6x and delivers up to 8x speedup, all with zero accuracy loss, redefining AI efficiency. Read the blog to learn how it achieves these results: goo.gle/4bsq2qI
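
To put the "at least 6x" figure in context: a KV cache stores one key and one value vector per layer, per KV head, per token. Its size is therefore 2 × layers × kv_heads × head_dim × tokens × bits per element / 8. The minimal sketch below assumes a hypothetical model shape and a 16-bit baseline, and neither comes from the announcement. It shows that a 6x reduction corresponds to roughly 16/6 ≈ 2.7 bits per stored element, ignoring any per-block scale or metadata overhead. This is plain sizing arithmetic, not TurboQuant's algorithm.

```python
def kv_cache_bytes(num_layers, num_kv_heads, head_dim, seq_len, bits_per_elem):
    """Size of a KV cache in bytes: keys + values for every layer, KV head and token."""
    elems = 2 * num_layers * num_kv_heads * head_dim * seq_len  # factor 2 = K and V
    return elems * bits_per_elem / 8

# Hypothetical model shape, chosen only for illustration.
cfg = dict(num_layers=32, num_kv_heads=8, head_dim=128, seq_len=32_768)

baseline = kv_cache_bytes(**cfg, bits_per_elem=16)  # assumed fp16/bf16 baseline
compressed = baseline / 6                           # "at least 6x" smaller
bits_needed = 16 / 6                                # equivalent bits per element

print(f"baseline:   {baseline / 2**30:.2f} GiB")    # 4.00 GiB
print(f"6x smaller: {compressed / 2**30:.2f} GiB")  # 0.67 GiB
print(f"~{bits_needed:.2f} bits per element")       # ~2.67 bits per element
```

At this assumed shape, a 32K-token context drops from 4 GiB to about 0.67 GiB of cache. That headroom is what allows longer contexts or larger batches on the same hardware.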

Came across ioSwarm recently. It lets you run an AI agent node on the IoTeX network that automatically validates transactions and earns you $IOTX, so I decided to try it myself. I just dropped the ioSwarm GitHub link (github.com/iotexproject/i…) into OpenClaw and asked it to handle the setup and get it running on its own. I was surprised how easy it was: it started working with just one instruction. I left it running overnight and I've already started receiving payouts, around 150-200 IOTX/day at the current reward rate. The ioSwarm agent basically connects to the goodwillclaw delegate, validates IOTX transactions, and sends rewards directly to your wallet. Pretty cool 🔥

Investment warning designation lifted for IoTeX (IOTX): the investment warning period for IOTX has been lifted. 🔗 Discover more: upbit.com/service_center…

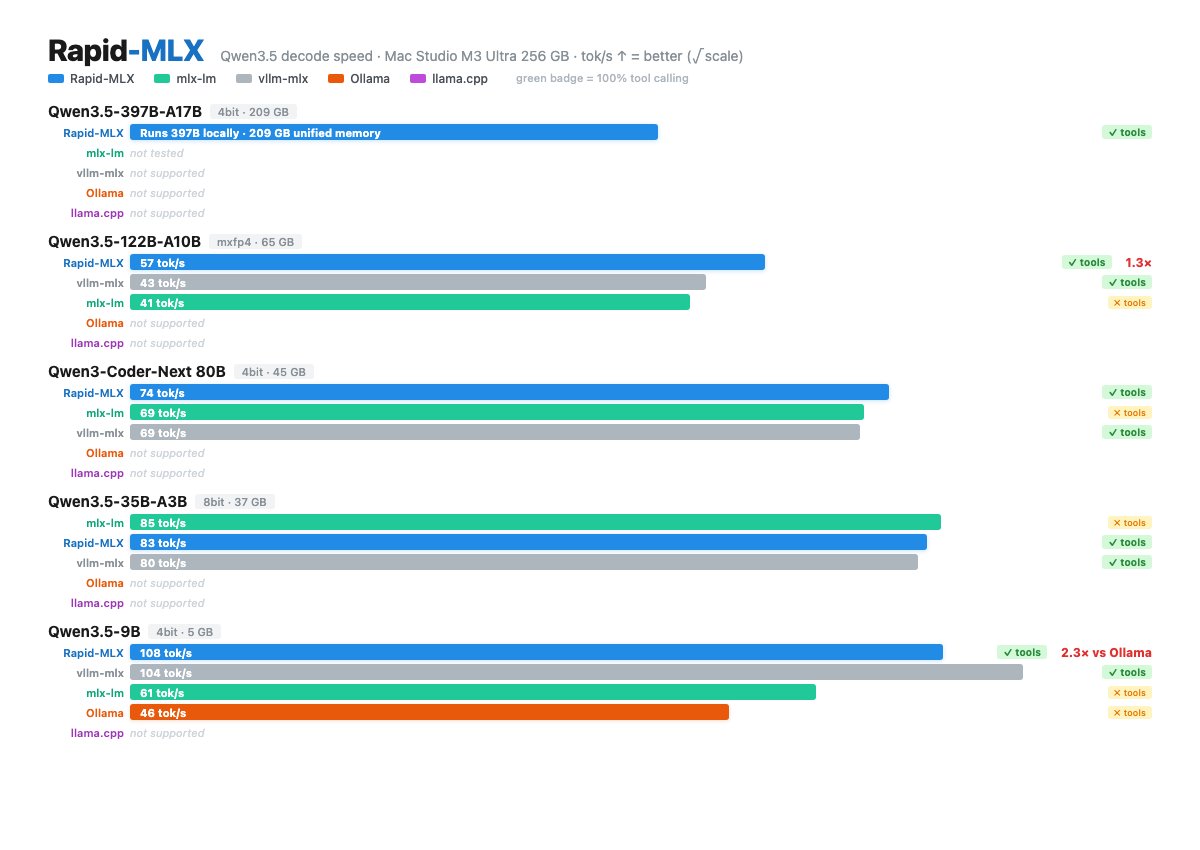

🚀 This might be the fastest local LLM inference engine on Mac, and it's open source. Rapid-MLX is built specifically for Apple Silicon. Tested across 18 models against Ollama, mlx-lm, and llama.cpp, it was the fastest on 16 of them.

⚡ What makes it different:
• DeltaNet state snapshots: multi-turn TTFT (time to first token) drops from 1.5s to under 200ms
• 100% tool-calling accuracy (function calling actually works)
• OpenAI-compatible API: drop-in for Claude Code, Cursor, etc.

🏆 Qwen3.5 is where it really shines: its hybrid RNN+attention architecture needs special handling. Other engines recompute the full context every turn; Rapid-MLX snapshots the RNN state and restores it in ~0.1ms.

📊 Numbers (Mac Studio M3 Ultra, 256GB):
• 397B: runs on a single Mac. 209GB. No cloud.
• 122B → 57 tok/s, 100% tools
• Coder-Next 80B → 74 tok/s, 0.10s TTFT
• 35B → 83 tok/s
• 9B → 108 tok/s (2.3× faster than Ollama)

Fully open source: 🔗 github.com/raullenchai/Ra… @awnihannun @reach_vb @simonw @JustinLin610 @exaboross
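
Why snapshots help: a recurrent layer compresses the entire history into a fixed-size state. If that state is saved after each turn, the next turn only has to process the newly appended tokens instead of replaying the whole conversation. The minimal sketch below illustrates the idea under stated assumptions. A toy recurrent update (`step`, hypothetical) stands in for a DeltaNet-style layer, and snapshots are keyed by exact token prefix (`SnapshotCache`, hypothetical). It is not Rapid-MLX's code, and it ignores the attention layers of a hybrid model, whose KV cache would need to be reused as well.

```python
from typing import Dict, List, Tuple

State = Tuple[float, ...]

def step(state: State, token: int) -> State:
    """Toy recurrent update (hypothetical): a decayed running mix of token ids."""
    return tuple(0.9 * s + 0.1 * token * (i + 1) for i, s in enumerate(state))

class SnapshotCache:
    """Stores the recurrent state after each processed prompt, keyed by its token prefix."""

    def __init__(self, dim: int = 4):
        self.initial: State = tuple(0.0 for _ in range(dim))
        self.snapshots: Dict[Tuple[int, ...], State] = {}
        self.steps_run = 0  # tokens actually pushed through step()

    def _longest_prefix(self, tokens: List[int]) -> Tuple[int, State]:
        # Linear scan for the longest saved prefix; a real engine might use a trie or session id.
        for n in range(len(tokens), 0, -1):
            snap = self.snapshots.get(tuple(tokens[:n]))
            if snap is not None:
                return n, snap
        return 0, self.initial

    def prefill(self, tokens: List[int]) -> State:
        start, state = self._longest_prefix(tokens)
        for tok in tokens[start:]:  # only the unseen suffix is processed
            state = step(state, tok)
            self.steps_run += 1
        self.snapshots[tuple(tokens)] = state  # snapshot for the next turn
        return state

cache = SnapshotCache()
turn1 = [1, 2, 3, 4, 5]
turn2 = turn1 + [6, 7]         # the next turn appends new tokens
cache.prefill(turn1)           # processes 5 tokens
state2 = cache.prefill(turn2)  # resumes from the snapshot, processes only 2
print(cache.steps_run)         # 7 (vs 12 if both turns re-ran the full context)
```

Restoring a state is a dictionary lookup rather than a pass over every prior token. That is why the cost of a new turn scales with the new input, not with the length of the conversation.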

The first tranche of payouts for affected users is on the way!

The ioTube Claims Portal is now live! If you were affected by the ioTube bridge exploit on Feb 21, you can submit your claim at iotube-claims.iotex.io.

What to know:
- 100% of affected users will be compensated
- Balances up to $10K: prompt payout in stablecoins (covers 90%+ of affected wallets)
- Larger balances: $10K upfront, with the remainder paid over 12 months plus a loyalty bonus
- Funded from the Foundation treasury (BTC + stablecoins), not from selling IOTX

All recovered stolen assets go directly toward compensation. Fund tracing and law enforcement efforts are ongoing. We committed to making every affected user whole. Submit your claim today.
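
The payout tiers above work out to a simple split. The following is a hypothetical sketch, not the official claims calculator. Two assumptions are not in the announcement: the remainder is paid in 12 equal monthly installments, and the loyalty bonus is a flat rate on the deferred amount. The bonus rate is unstated, so the sketch defaults it to zero. Amounts use `Decimal` to avoid floating-point rounding on currency.

```python
from decimal import Decimal, ROUND_DOWN

UPFRONT_CAP = Decimal("10000")  # from the announcement: up to $10K paid promptly
MONTHS = 12                     # from the announcement: remainder over 12 months

def payout_schedule(balance: Decimal, loyalty_bonus_rate: Decimal = Decimal("0")):
    """Return (upfront, monthly_installments) under the assumptions stated above."""
    upfront = min(balance, UPFRONT_CAP)
    remainder = balance - upfront
    if remainder == 0:
        return upfront, []  # balances up to $10K are paid in full upfront
    # Assumption: bonus is a flat rate on the deferred amount (actual terms not stated).
    total_deferred = remainder * (1 + loyalty_bonus_rate)
    monthly = (total_deferred / MONTHS).quantize(Decimal("0.01"), rounding=ROUND_DOWN)
    installments = [monthly] * (MONTHS - 1)
    installments.append(total_deferred - monthly * (MONTHS - 1))  # last one absorbs rounding
    return upfront, installments

print(payout_schedule(Decimal("8500")))  # (Decimal('8500'), [])
up, months = payout_schedule(Decimal("34000"))
print(up, months[0], len(months))        # 10000 2000.00 12
```

Letting the final installment absorb the cents lost to rounding keeps the deferred payments summing exactly to the amount owed.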

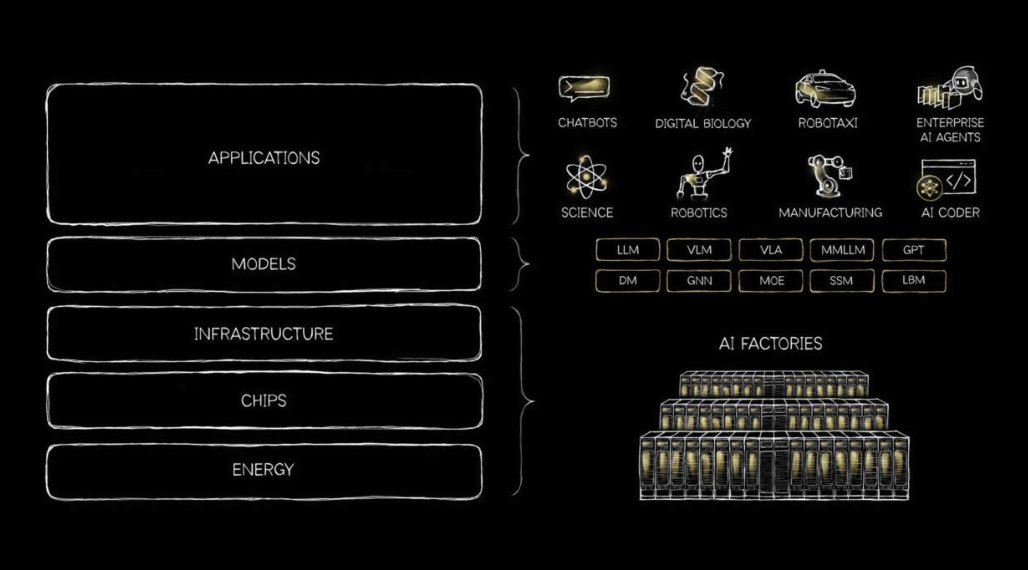

The next customers of physical-world data won't be humans. They'll be machines and robots.
• Warehouse robots navigating crowded environments
• Traffic systems coordinating intersections
• Drones monitoring agriculture and energy grids
They all need the same thing: perception, verification, automation. The machine and robot economy is just beginning.

a16z post on institutional ai vs individual ai perfectly articulates not only how companies need to evolve themselves, but also how ai companies building for enterprise need to deliver value

🏆 Weekly Top Gainers
🥇 @GravityChain leads this week with a strong +29% surge
🥈 @BSVBlockchain follows with +10% growth
🥉 @waterfall_dag climbs +6%
⚡️ @eCash and @iotex_io also post solid gains
📊 chainspect.app/dashboard?gain…