Ray Chen

618 posts

@raychen

🏗️ @railway | [email protected]

Singapore · Joined December 2016

133 Following · 336 Followers

Pi gang -- what am I doing wrong?! I really want to use Pi because of its customizability, but the harness itself seems subpar for me. I've noticed that Pi+Opus4.7 requires more steering as well.

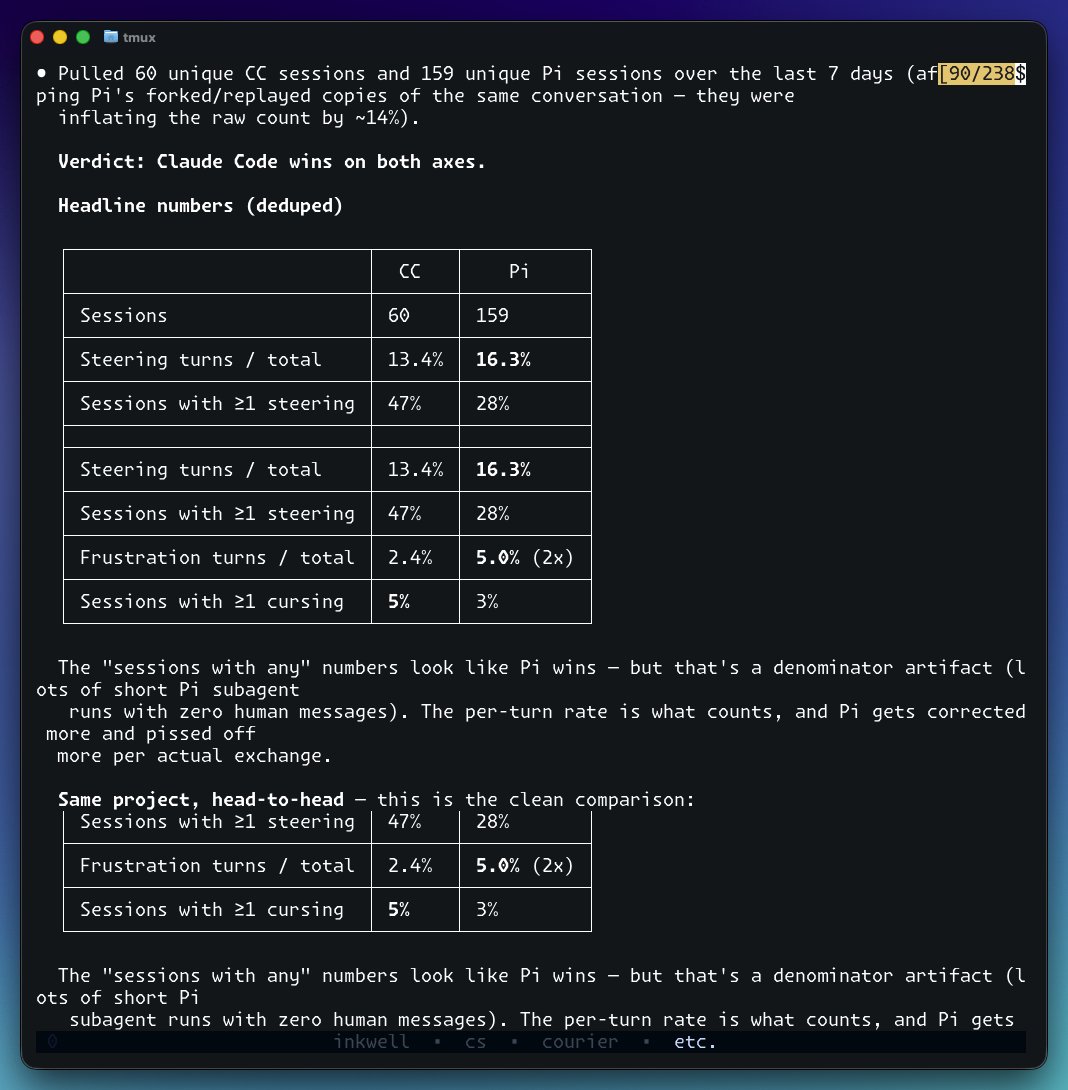

Ray Chen@raychen

I've been test-driving Claude Code versus Pi+GPT5.5 the past week. Here are the results. 159 Pi sessions vs 60 CC sessions. On the same project, Pi needed 2x the corrections and got cursed at 3x as often. Opus 4.7 has anecdotally been getting dumber, but it looks like I'm keeping CC as my daily driver after all. Prompt: "Stalk all my Claude Code (~/.claude/projects/) and Pi (~/.pi/agent/sessions/) sessions the past week, and tell me which one required less steering, and pisses me off the least."

On a related note, Pi+GPT5.5 seems to be amazing at Rust. @thisismahmoud maybe that's why you prefer it 😛

I've been test-driving Claude Code versus Pi+GPT5.5 the past week. Here are the results.

159 Pi sessions vs 60 CC sessions. On the same project, Pi needed 2x the corrections and got cursed at 3x as often.

Opus 4.7 has anecdotally been getting dumber, but it looks like I'm keeping CC as my daily driver after all.

Prompt: "Stalk all my Claude Code (~/.claude/projects/) and Pi (~/.pi/agent/sessions/) sessions the past week, and tell me which one required less steering, and pisses me off the least."

@dominikkoch (unless your agent harness exposes cost-per-turn natively like Pi does)

@dominikkoch hmm yeah, might be inaccurate cos it goes off your history and estimates according to token rates

Share how much you've spent on agentic coding!

curl -fsSL "agentic-coding-spend.ray.cat" | bash -s

Works with Claude, Codex, & Pi. Most of it is estimated from consumed tokens according to your sessions, except when using Pi.
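For anyone curious what "estimated from consumed tokens" could look like, here is a rough sketch, not the actual script. The model name, the rates, and the session record shape are all made-up placeholders; real per-token prices differ by model and change over time.

```python
# Hypothetical per-million-token rates in USD (placeholders, not real prices).
RATES_PER_MTOK = {
    "example-model": {"input": 3.00, "output": 15.00},
}


def estimate_cost(sessions, rates=RATES_PER_MTOK):
    """Estimate total spend by multiplying token counts by per-token rates."""
    total = 0.0
    for session in sessions:
        rate = rates[session["model"]]
        total += session["input_tokens"] / 1_000_000 * rate["input"]
        total += session["output_tokens"] / 1_000_000 * rate["output"]
    return total


# Assumed record shape: one dict per session with token counts.
sessions = [
    {"model": "example-model", "input_tokens": 2_000_000, "output_tokens": 100_000},
    {"model": "example-model", "input_tokens": 1_000_000, "output_tokens": 200_000},
]

print(f"${estimate_cost(sessions):.2f}")
```

An estimate like this ignores things such as cached-token discounts or subscription plans, which is one reason the number can be off when the harness does not report cost directly.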

There’s no Claude when you’re dead

Emma Steuer 🧚🤖@emmysteuer

You only live once, so make sure to spend as much time as possible on your computer. You won’t have access to it when you die

@JustJake Hi Jake, our server is down. Very confident it's a Railway issue.

Check our domain (confirmafy.com): Cloudflare is indicating a problem with the host.

Then we got a brief response of "Service temporarily unavailable"

We've tried restarting and redeploying.

Hey @Railway — really love your platform 🙌

Had an unfortunate automation bug that created thousands of PR environments unintentionally and spiked my bill (~$800). Already fixed it + added limits.

Reached out to support — totally understand usage billing, but as a small startup this is really tough to absorb.

Would really appreciate if this can be looked at for a one-time goodwill adjustment (even partial) 🙏

Happy to stay and grow long-term with Railway

@DhravyaShah Agent inside sandbox that can orchestrate its own sandboxes ;-)

Heya! If you're looking for someone fulltime, I'm not the right person, but otherwise I would love to connect!

I've been working in the Discord space for 9 years now, most recently as the primary admin for the @openclaw Discord server, alongside other moderation and maintainer duties there. I was one of the very first maintainers to join the team and wrote the library that powers the claw's Discord integration. Along the way I've been building the community and the moderation team and processes from the ground up to its current membership count of 174k, and leading a team of 21 staff members across 6 different teams!

Anyone I know looking for a community-centric role? Seeking someone experienced in Community x DevTools who's interested in helping scale @Railway's community

~3m devs, ~35k Discord members, ~74% engagement rate in Community Bounties (docs.railway.com/community/boun…), & growing fast
