ReadyAI

224 posts

ReadyAI

@ReadyAI_

Making the world's data accessible to AI. Home of AcquiOS for CRE & M&A acquisitions Bittensor Subnet 3️⃣3️⃣ 🌐

If Martin is right, he also just wrote the product spec for open source + distributed compute where broad swaths of groups, individuals and organizations contribute their compute resources to training runs for large param open source models. There are lots of issues in figuring this out: homogeneity vs heterogeneity of the training clusters, orchestration, financial incentives etc etc etc but some early projects are good signal as to where this can go and that these limitations can be overcome (folding@home, Venice, Tao). An attempted oligopoly on intelligence is the perfect boundary condition for a bottoms up uprising of fully open, fully distributed AI.

Yes emissions are used to bootstrap innovation, same as Uber, Amazon and countless of other big companies You can chose between these 2: -give those emissions to VC’s -give those emissions to builders who devote their whole time to build out the network Vc’s hate it because they can’t apply the VC playbook/had discounted access compared to the masses

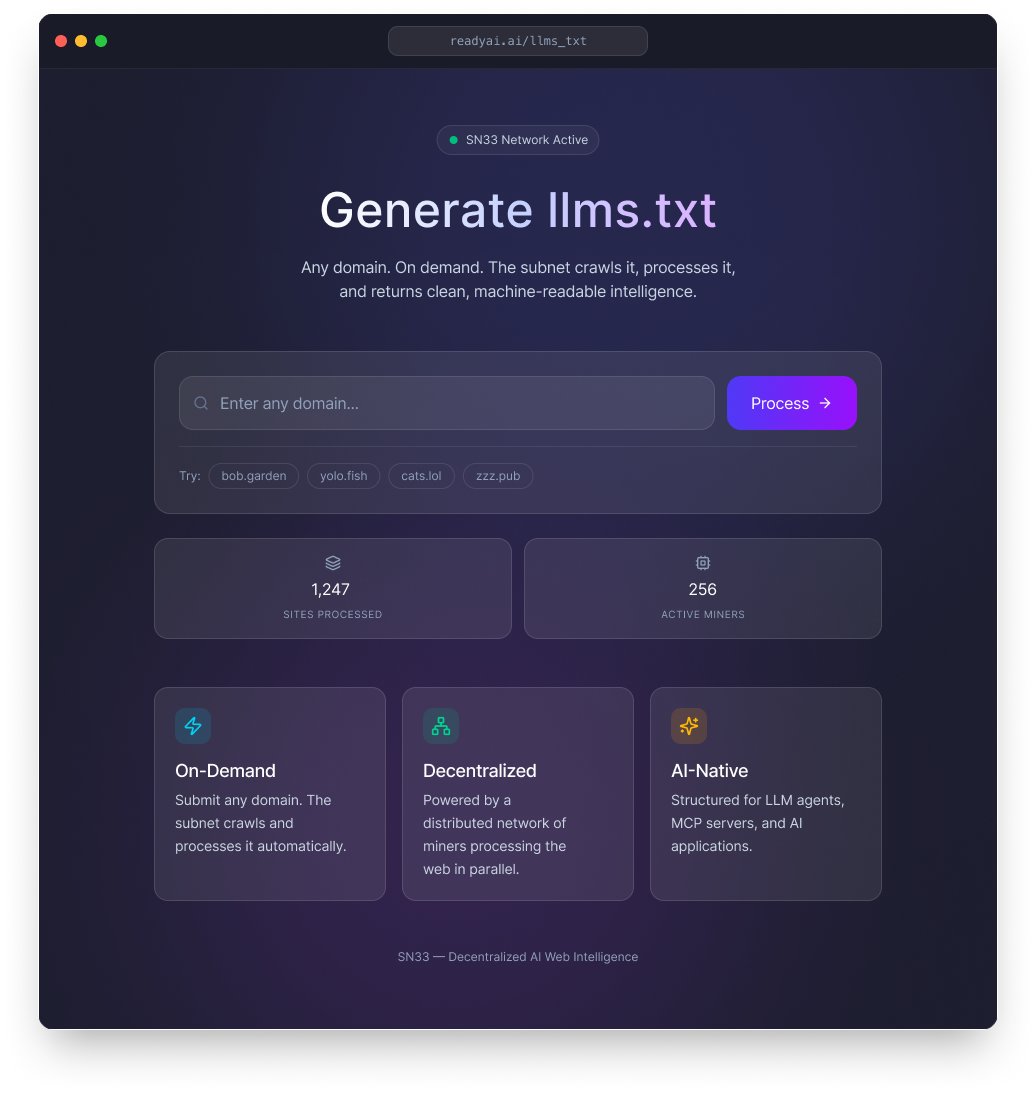

We just launched a new readyai.ai Type any domain into the search. If it's in our dataset, you get clean, structured intelligence instantly. No scraping. No parsing HTML. Just machine-readable data, ready for any AI agent. 10,000+ websites crawled, cleaned, and structured by Subnet 33 so far. Growing to 100K by Q2, 1M by year end. This is the beginning of something bigger: a marketplace for agentic data. Right now, every AI agent that needs info about a company or domain scrapes, parses, and hopes. Billions of redundant crawls. Trillions of wasted tokens. We're building the infrastructure layer that fixes this — an indexed, machine-readable web powered by decentralized compute.

Nothing to see here… Just Jensen Huang (CEO of the world’s most valuable company Nvidia) and Chamath discussing Bittensor $TAO 🤯

🚀 llms.txt are live on SN33 The llms.txt repository is now live. 🔗 github.com/afterpartyai/l… SN33 has processed the first batch with over 1,000 websites crawled, cleaned, and converted into structured llms.txt files by the subnet. Semantic summaries ready for any LLM agent, MCP server, or AI app to consume instantly. No scraping. No parsing raw HTML. Just clean, machine-readable intelligence. New batches will be pushed as the subnet keeps processing. The repo grows every week. What's in the dataset: → Structured semantic summaries per domain → Named entities: people, orgs, products, technologies, concepts → Topic classification and key themes → Deterministic O(1) lookup by domain with no index file needed → Git-friendly structure that scales to millions of domains This initial release covers ~1,000 domains as a pilot, but the pipeline scales to millions. 📍 Roadmap: 10K → 100K → 1M domains → continuous updates from new Common Crawl releases and soon from requests. 🌍 And the frontend is coming. Any domain. You request it, the subnet processes it, you get an llms.txt back. We're putting the finishing touches on the public UI and it drops soon. SN33 is becoming infrastructure. The web, made readable for machines and open to anyone, powered by decentralized infra. Star the repo. Share it. And stay close. The next drop is right around the corner.

SN33 -- Enriching the Data of the World SN33 just shipped Webpage Metadata v2, and the best way to explain what we’re building is this: an llms.txt version of Common Crawl. Our partnership with Common Crawl began with the simple but daunting task of tagging web pages to make semantic web data widely available. Generating this data would break down the barriers preventing web organization. This week we are taking a giant step in broadening that goal to encapsulate AI-enabling the world wide web by launching the enrichment process for entire web sites. Search engines atomize the web by surfacing individual pages. That's great for finding individual facts. It does almost nothing to give agents the holistic information they need to actually complete tasks. Simple example: an agent searching for "best skis" gets quality-for-price rankings from individual pages. It completely misses how waist width affects your ability to float in powder, navigate tight spaces, or carve on groomed trails. That information exists across an entire site, but no one is structuring it that way. This week we shipped the technology to change that. SN33 is now enriching entire web sites, not just individual pages. Our new high-volume API pushes full sites through the subnet, collecting enriched data from tags, NER, similar pages to summarization across every page on a site, grouped together. Why llms.txt matters The llms.txt standard summarizes an entire web site's contents in a single meaningful text file. Agents and MCP tools can understand what a site contains without processing every page. It's the missing layer between the open web and the agent economy. Adoption has been stymied by one problem: nobody is generating these files at scale. There hasn't been a broad effort to create llms.txt for the whole web — until now. Once SN33 reaches tipping-point volume of enriched site data, we begin publishing llms.txt files at scale. We believe SN33 will become the largest producer of llms.txt files in the world. The demand for structured web data is already proven. Our first open-source dataset, the 5000 Podcast Conversations, has crossed 300,000+ downloads on HuggingFace. That was conversations. This is the entire open web. More open-source releases are coming. v2.28.63 is on testnet and goes mainnet February 23rd.