Pinned Tweet

resonance_src

79 posts

@resonance_src

Storyteller. Pitched originals to Aniplex + Netflix. Now making it myself. YouTube · TikTok · IG: @resonance_src [email protected]

Joined December 2025

104 Following · 29 Followers

For @MilanMomcilovi5: your #MarchMadness anime backstory.

Harness Farm For All.

Don't kill my bracket.

@ty3rome @Studio_Tora_lab @SuperUltraMega @AiAniAlchemist Love this concept. Thanks for the shoutout 🙏🏽

resonance_src reposted

Something stirs at midnight…

MIDNITEDIVE, the first sanctuary for AI Anime storytellers, awakens Saturday.

Four creators. Four worlds. One night.

@resonance_src - Viva EPS 1: Mira

@studio_Tora_lab - Twist Crown EPS 4

@SuperUltraMega - Noor Star

@AiAniAlchemist - Dragon Blood Revolution

The stories find you Saturday. Will you be watching?

resonance_src reposted

A.I. Filmmaking is SLOP! It’s disgusting. There’s no soul in it! ...

That’s what a lot of people on the internet still say.

Quick note: This post is sponsored by Flick, and I highly recommend checking them out.

But here’s the thing: many people also think making a film with AI is incredibly easy. And that’s partly true. It is easier when you compare it to the effort of a traditional film production.

But it’s incredibly hard if you think it’s just a “push of a button.”

So I took that debate… and moved it into a kitchen.

I used flick.art as my home base and generated most of the project inside the canvas. Within Flick, I primarily used Kling AI 3.0 to create the dialogue scenes.

But here’s the game-changing hack:

I use video models to generate start frames.

Since video models are trained on actual video data, it makes a lot of sense to use them for the initial frames, especially if you don’t want your start frames to look like static photos.

For example, when you use OpenAI Sora 2 or ByteDance Seedance with the same prompt you’d normally use with Google Nano Banana or Seedream, you’ll often get results that already feel much more like real video from the start.

Check out:

👉 flick.art

And if you’re into A.I. filmmaking you can also sign up for my newsletter. (Something big is coming)

👉 simonmeyerdirector.com/musicvideo

KEEP COOKING.

#ai #filmmaking

@cryptoxiaoxiang That's a nice trick to get around content filters haha

I made this fight scene 3 months ago.

Before Seedance 2.0, Kling 3.0, Vidu 3, and many other models were released.

Crazy how quickly this space moves.

Still one of my favorite fight scene cuts because of the hand-to-hand combat, which I crafted frame by frame for that sequence.

May look to remaster this with new models.

@icreatelife Whoops, this link may be dead. But here it is on my profile:

x.com/resonance_src/…

resonance_src (@resonance_src)

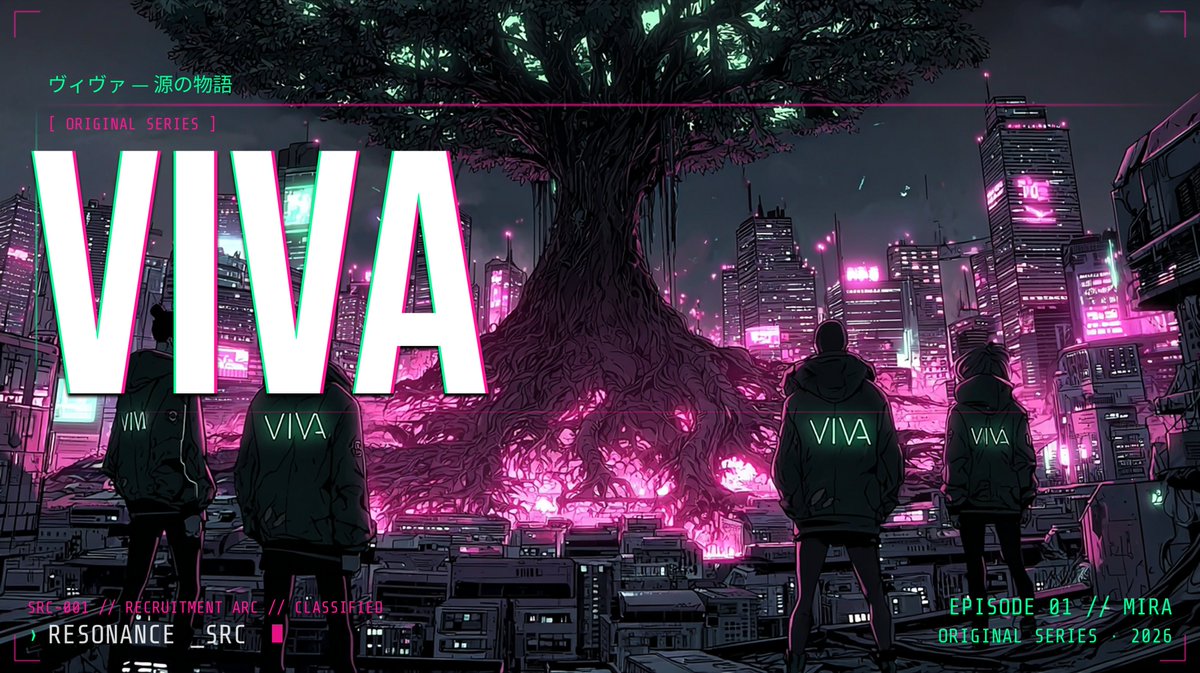

A cyberpunk city powered by a dying tree. Mira was built to be a weapon. Now she's choosing her own target. VIVA | Ep 01: Mira

@icreatelife Haven't gotten around to posting it on my X yet, but here's a really fun cyberpunk one I just finished. You mentioned you were working on multi-frames @icreatelife - got some good camera shots in this one. youtube.com/watch?v=W1oWpm…

@VraserX I’ve actually heard from a good number of tech friends that they’re fully switching over to Claude. Makes it easier since the quality on ChatGPT has been noticeably dropping

@Framer_X @yapper_so Interesting. How’s it compare to youart?

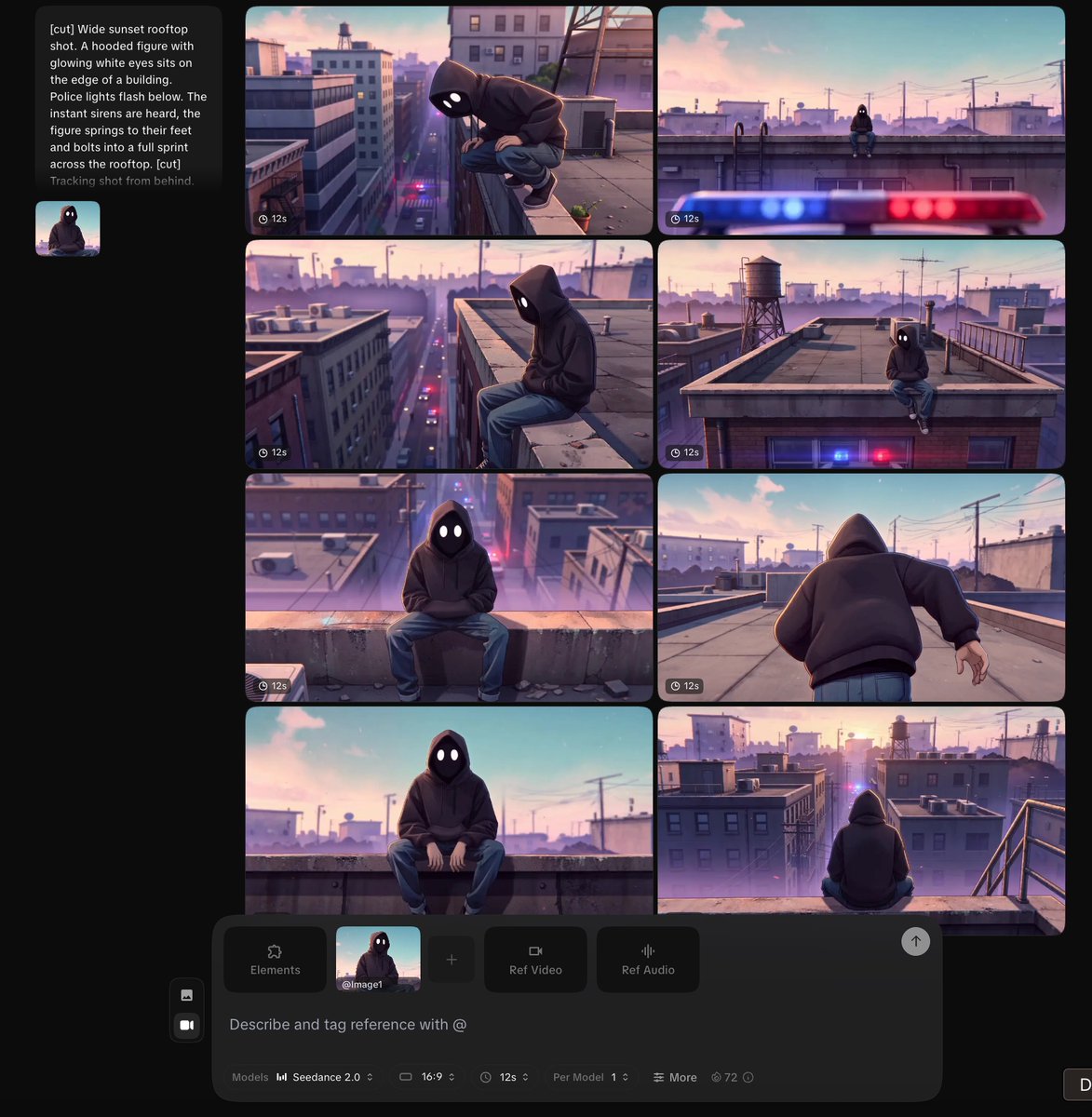

Many of you asked where I’m running Seedance 2.0.

After testing 20+ platforms, @yapper_so currently has the best pricing + highest success rate.

Don’t expect miracles though.

They face the same issues as everyone else:

• strict moderation

• overloaded servers

BUT - they implemented some smart backend optimizations that noticeably increase the pass rate.

Here’s how to use it properly:

1️⃣ Get the cheapest plan.

$10 plan →

5s video ≈ $0.37

15s video ≈ $0.90

2️⃣ Upload your image + prompt.

Select Seedance 2.0 from the model list.

3️⃣ IMPORTANT: always generate 8 variations per attempt.

Many generations fail randomly.

Usually only 2–3 out of 8 pass.

Generating 8 at once saves you hours.
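To put rough numbers on the "generate 8 at once" advice, here is a minimal Python sketch. It takes the figures quoted above (5s clip ≈ $0.37, about 2–3 passes out of 8) and rests on two assumptions that the post does not confirm: that each variation passes or fails independently, and that every variation in a batch is billed whether or not it succeeds. The function names are made up for illustration.

```python
def chance_of_at_least_one_pass(pass_rate: float, variations: int) -> float:
    """Probability that at least one of `variations` generations passes.

    ASSUMPTION: each generation passes independently with the same rate.
    """
    return 1.0 - (1.0 - pass_rate) ** variations


def cost_per_passing_clip(price_per_clip: float, variations: int,
                          expected_passes: float) -> float:
    """Cost per usable clip.

    ASSUMPTION: every variation in the batch is billed, including failures.
    """
    return price_per_clip * variations / expected_passes


# Figures from the post; 2.5 is simply the midpoint of "2–3 out of 8".
PRICE_5S = 0.37
BATCH = 8
EXPECTED_PASSES = 2.5
PASS_RATE = EXPECTED_PASSES / BATCH

if __name__ == "__main__":
    single = chance_of_at_least_one_pass(PASS_RATE, 1)
    batch = chance_of_at_least_one_pass(PASS_RATE, BATCH)
    print(f"Chance of a usable clip from 1 variation: {single:.0%}")
    print(f"Chance of a usable clip from {BATCH} variations: {batch:.0%}")
    print(f"Cost per passing 5s clip: ${cost_per_passing_clip(PRICE_5S, BATCH, EXPECTED_PASSES):.2f}")
```

If failed generations turn out not to be billed, the cost per passing clip would simply be the listed price, and the only thing batching buys is time.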

WHAT TO EXPECT

First your prompt must pass moderation.

If you get “Content flagged” - copy and paste the prompt into ChatGPT and ask it to fix it.

Once it passes the moderation filter, one of three things happens:

a) 2–3 out of 8 videos succeed in 5–20 minutes

b) You wait up to 2 hours, but most (if not all) succeed (as in the screenshot)

c) Everything fails fast → just regenerate

It’s still a bit tedious, but if you want access to Seedance 2.0 right now, this is the most reliable method I’ve found.

Good luck!

OpenAI is hemorrhaging users right now.

Mass cancellations flooding in after the Pentagon deal, GPT-4o kill-off, and endless downgrades.

700,000+ already pledged to quit. ChatGPT app crashing under the weight of delete buttons.

Sam Altman built an empire on user trust. Now users are torching it. Centralized AI always eats its own.

@minchoi This is literally how they're going to subvert and use these models for surveillance. Under ambiguous terms.

@ns123abc Allowing usage for 'all lawful purposes' is also terribly ambiguous and opens the door to mass surveillance

🚨 OPENAI FOUND THE LOOPHOLE

The terms OpenAI proposed allow the Pentagon to still do those things, just not on OpenAI’s cloud.

Altman:

“we will deploy on cloud networks only”

OpenAI isn’t saying “you can’t do surveillance/autonomous weapons.” They’re saying these things are “unsuited to cloud deployments.”

It’s a technical limitation, not an ethical prohibition.

OpenAI still provides the models and know-how. It just runs on Pentagon servers.

Anthropic got banned for saying “No”

OpenAI got a contract for saying “not here”

Scam Altman strikes again.
