Robert L. Logan IV


One of the most consequential series of investigative journalism of this decade was the @Propublica series that @eisingerj helmed, in which Eisinger and colleagues analyzed a trove of leaked IRS tax returns for the richest people in America: propublica.org/series/the-sec… 1/

Can long-context language models (LCLMs) subsume retrieval, RAG, SQL, and more? Introducing LOFT: a benchmark stress-testing LCLMs on million-token tasks like retrieval, RAG, and SQL. Surprisingly, LCLMs rival specialized models trained for these tasks! arxiv.org/abs/2406.13121

GPT-5 confirmed to confidently try to complete 11 tasks simultaneously then email you 6 months later explaining it’s had a mental breakdown

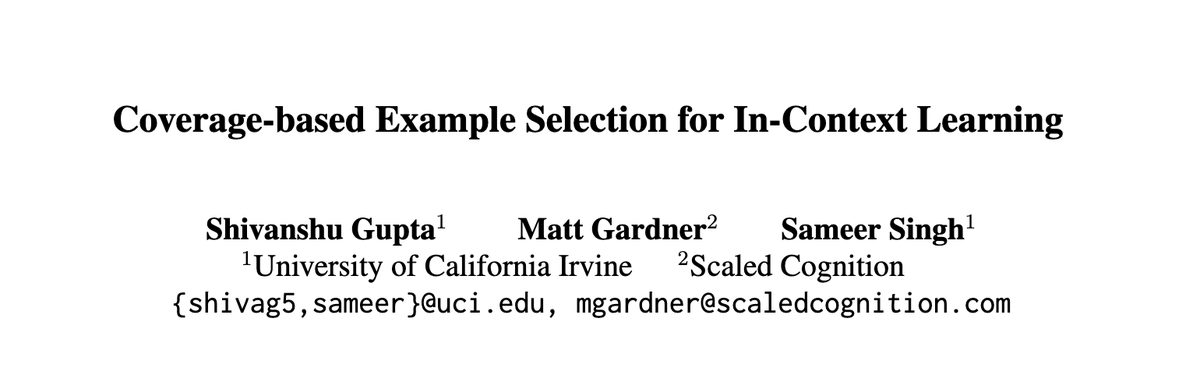

(1/6) 🚀🚀 Thrilled that our paper arxiv.org/abs/2305.14907 has been accepted to #EMNLP2023 Findings! 🎉 tl;dr: Selecting in-context examples that together cover all the salient aspects of the test input yields training-free methods that beat even trained SoTA methods! 💪🔥

Explaining ML models can be a difficult process. What if anyone could understand ML models using accessible conversations? We built a system, TalkToModel, to do this!🎉 Paper: arxiv.org/abs/2207.04154 Code & Demo: github.com/dylan-slack/Ta… 1/9