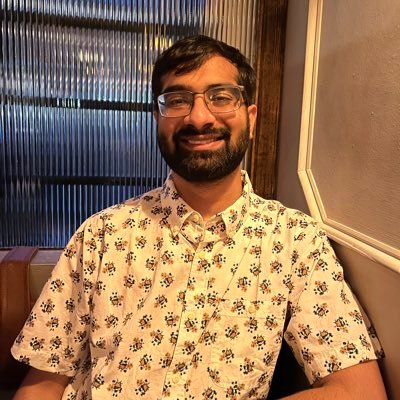

Yunhao (Robin) Tang

@robinphysics

Interested in training and RL • @AnthropicAI • Ex reasoning @MistralAI • Llama RL @MetaAI • Gemini post-training and Deep RL research @DeepMind • PhD @Columbia

#RepresentationLearning can help train strong RL agents on a variety of tasks. But which feature learning method should you pick: state reconstruction (like Dreamer) or latent self-prediction (like SPR)? openreview.net/forum?id=izAJ8… With @tylerkastnr @igilitschenski @sologen

Understanding the performance gap between online and offline alignment algorithms

Reinforcement learning from human feedback (RLHF) is the canonical framework for large language model alignment. However, the rising popularity of offline alignment algorithms challenges the need