Robins

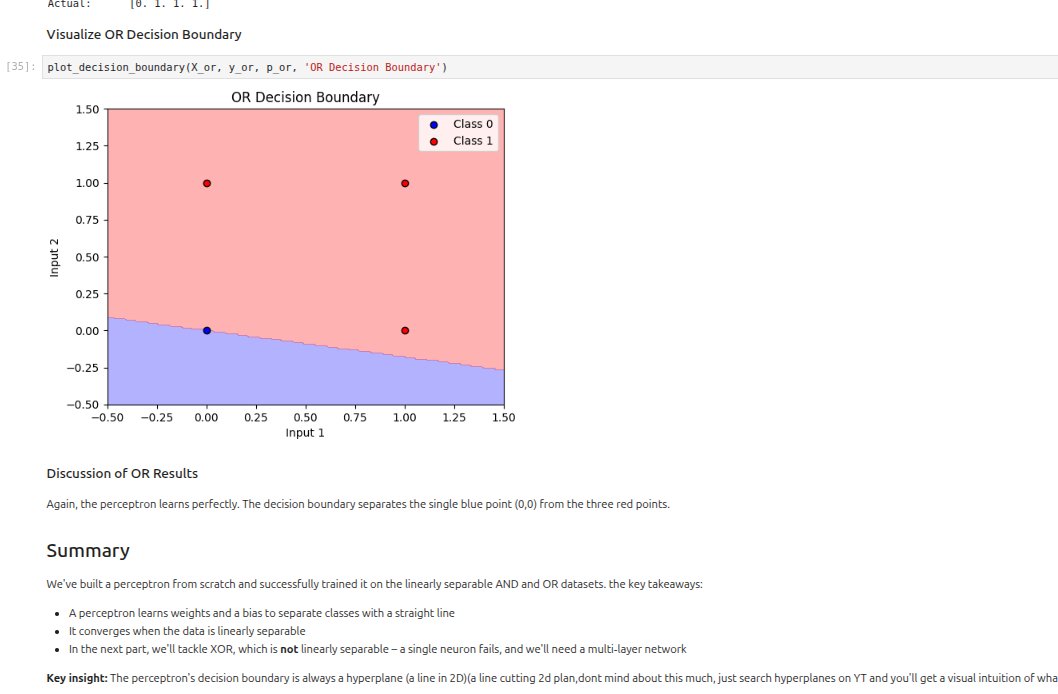

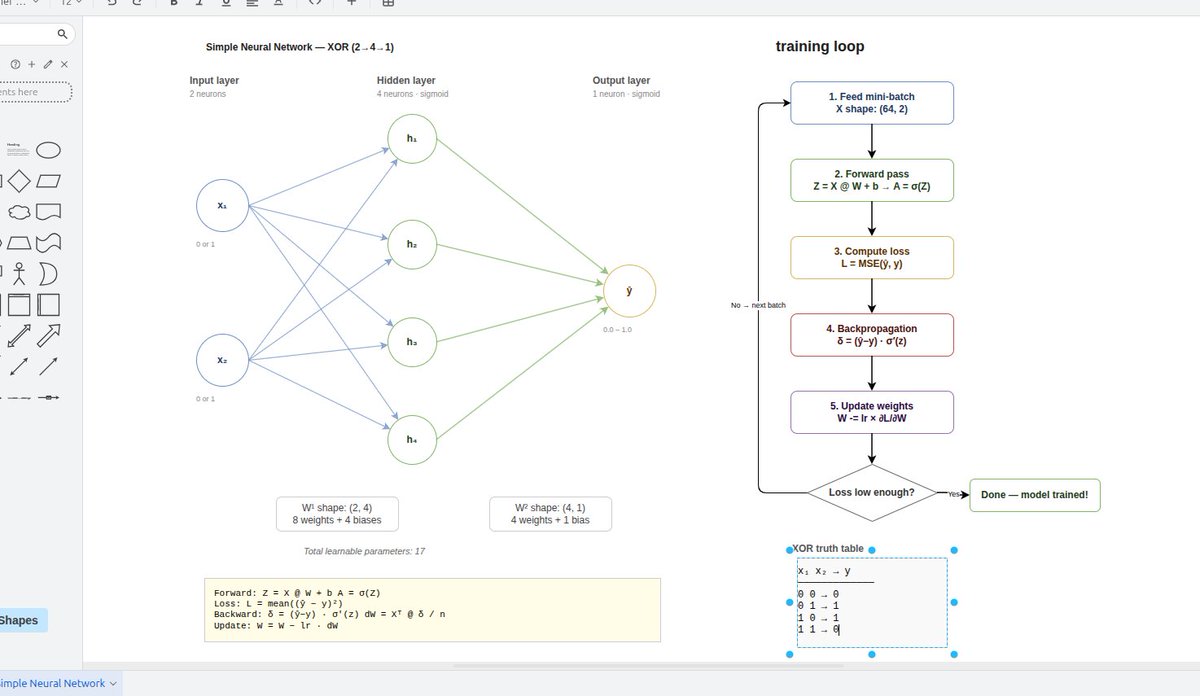

DAY 15: reviewed the building blocks of a basic neural network and finalized the architecture design. I'll implement it tomorrow.

Day 14: spent some time going through sebastianraschka.com/teaching/pytor… (the section on scalars, vectors, matrices, and tensors)

and figured building a neural network from scratch this week would be a good exercise. I’ll keep posting updates

Day 13 of ML: Today I read Andrej Karpathy’s article (karpathy.medium.com/yes-you-should…) and completed his neural nets lecture 1 (which is Lecture 4 in the full series) on backpropagation on YouTube: youtu.be/i94OvYb6noo?si…

I’m back, been trying to balance things, hoping this works now

Day 12 of ML (DL) with d2l.ai:
- automatic differentiation
- autograd
- learnt how PyTorch works fundamentally

Day 11 of ML: went through backprop and autograd. Understood how gradients flow backward via the chain rule, and how autograd builds the computation graph to compute derivatives automatically during training.
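
A minimal sketch of that idea in plain Python, assuming scalar values only. The `Value` class and its fields are hypothetical names for illustration, not PyTorch's API; PyTorch's autograd works on tensors and is far more general. Each operation records its parents and local derivatives (the computation graph), and `backward()` applies the chain rule in reverse order.

```python
import math


class Value:
    """A scalar that remembers how it was computed (hypothetical helper)."""

    def __init__(self, data, parents=(), local_grads=()):
        self.data = data
        self.grad = 0.0
        self._parents = parents          # nodes this value was computed from
        self._local_grads = local_grads  # d(self)/d(parent), one per parent

    def __add__(self, other):
        return Value(self.data + other.data, (self, other), (1.0, 1.0))

    def __mul__(self, other):
        return Value(self.data * other.data, (self, other),
                     (other.data, self.data))

    def tanh(self):
        t = math.tanh(self.data)
        return Value(t, (self,), (1.0 - t * t,))

    def backward(self):
        # Build a topological order of the graph (parents before children).
        order, seen = [], set()

        def visit(node):
            if id(node) not in seen:
                seen.add(id(node))
                for parent in node._parents:
                    visit(parent)
                order.append(node)

        visit(self)
        self.grad = 1.0
        # Walk from the output back to the inputs, applying the chain rule:
        # parent.grad += d(node)/d(parent) * d(output)/d(node)
        for node in reversed(order):
            for parent, local in zip(node._parents, node._local_grads):
                parent.grad += local * node.grad


# One neuron: y = tanh(w * x + b)
x, w, b = Value(2.0), Value(-0.5), Value(0.3)
y = (w * x + b).tanh()
y.backward()
print(w.grad, x.grad, b.grad)
```

Here `w.grad` should equal `(1 - y.data**2) * x.data`, which is the chain rule written out by hand for this one neuron.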

Day 10 of ML with d2l.ai: neural networks and a deeper dive into neurons. What stood out:
- random initialization breaks symmetry, so neurons specialize during training
- learning = updating weights with gradient descent to shape decision boundaries
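
A small sketch of the symmetry point, assuming a toy network with one input, two tanh hidden units, one linear output, no biases, and a made-up four-point dataset. The `train` function and all values are hypothetical, chosen only for illustration. With identical starting weights both hidden units receive identical gradients and never diverge; with random starting weights they end up different.

```python
import math
import random


def train(w_hidden, w_out, data, lr=0.1, steps=100):
    """Plain gradient descent on mean squared error for a 1-2-1 network."""
    w_hidden, w_out = list(w_hidden), list(w_out)
    for _ in range(steps):
        g_hidden, g_out = [0.0, 0.0], [0.0, 0.0]
        for x, target in data:
            h = [math.tanh(w * x) for w in w_hidden]       # hidden activations
            pred = sum(v * hj for v, hj in zip(w_out, h))  # network output
            d_pred = 2.0 * (pred - target) / len(data)     # dLoss/dpred
            for j in range(2):
                g_out[j] += d_pred * h[j]
                g_hidden[j] += d_pred * w_out[j] * (1.0 - h[j] ** 2) * x
        for j in range(2):
            # Gradient descent update: step against the gradient.
            w_out[j] -= lr * g_out[j]
            w_hidden[j] -= lr * g_hidden[j]
    return w_hidden, w_out


# Toy dataset (made up for this sketch).
data = [(-1.0, -0.8), (-0.5, -0.4), (0.5, 0.4), (1.0, 0.8)]

# Identical initialization: the two hidden units stay copies of each other.
same_hidden, same_out = train([0.5, 0.5], [0.5, 0.5], data)

# Random initialization: the units start different and stay different.
random.seed(0)
rand_hidden, rand_out = train(
    [random.uniform(-1, 1) for _ in range(2)],
    [random.uniform(-1, 1) for _ in range(2)],
    data,
)

print("identical init:", same_hidden)
print("random init:   ", rand_hidden)
```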

DAY 9 OF ML with d2l.ai: PyTorch & Colab basics

DAY 8 of ML with dl2.ai: Essential Linear Algebra d2l.ai/chapter_prelim…