CMU Center for Perceptual Computing and Learning

@roboVisionCMU

The Chronicles of Smith Hall.

A snapshot of how SAM 3D Body is being used at CMU to assist physical therapy

RI Associate Research Professor Kris Kitani led the development of SAM 3D Body, which could transform physical therapy with AI pose estimation! #TartanProud SAM 3D Body delivers precise, AI-driven static position analysis to personalize rehabilitation & enhance patient outcomes.

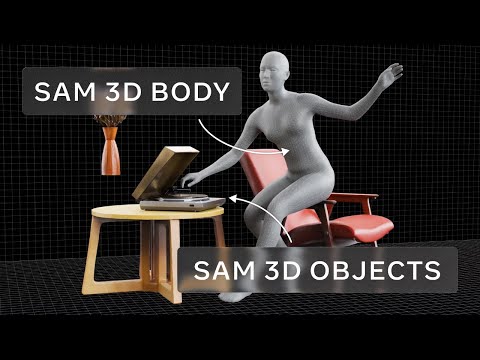

Introducing SAM 3D, the newest addition to the SAM collection, bringing common sense 3D understanding of everyday images. SAM 3D includes two models:

🛋️ SAM 3D Objects for object and scene reconstruction

🧑‍🤝‍🧑 SAM 3D Body for human pose and shape estimation

Both models achieve state-of-the-art performance transforming static 2D images into vivid, accurate reconstructions.

🔗 Learn more: go.meta.me/305985

In this #CVPR2025 edition of our community-building workshop series, we focus on supporting the growth of early-career researchers. Join us tomorrow (Jun 11) at 12:45 PM in Room 209.

Schedule: sites.google.com/view/standoutc…

We have an exciting lineup of invited talks and candid panels: @sarameghanbeery, @dimadamen, @jbhuang0604, @lealtaixe, @LerrelPinto, @lschmidt3, @shubhtuls, @gulvarol, @cvondrick, @sainingxie

Co-organizing with @unnatjain2010, @ap229997, @georgiagkioxari, @akanazawa, and Lana Lazebnik @CVPR

1/ Despite having access to rich 3D inputs, embodied agents still rely on 2D VLMs—due to the lack of large-scale 3D data and pre-trained 3D encoders. We introduce UniVLG, a unified 2D-3D VLM that leverages 2D scale to improve 3D scene understanding. univlg.github.io

🚀 Can we make a humanoid move like Cristiano Ronaldo, LeBron James and Kobe Bryant? YES! 🤖 Introducing ASAP: Aligning Simulation and Real-World Physics for Learning Agile Humanoid Whole-Body Skills Website: agile.human2humanoid.com Code: github.com/LeCAR-Lab/ASAP

Excited to share that I'll be joining University of California at Irvine as a CS faculty in '25!🌟 Faculty apps: @_krishna_murthy, @liuzhuang1234 & I share our tips: unnat.github.io/notes/Hidden_C… PhD apps: I'm looking for students in vision, robot learning, & AI4Science. Details👇