Ropedia

@ropedia_ai

Interactive Intelligence from Human Xperience

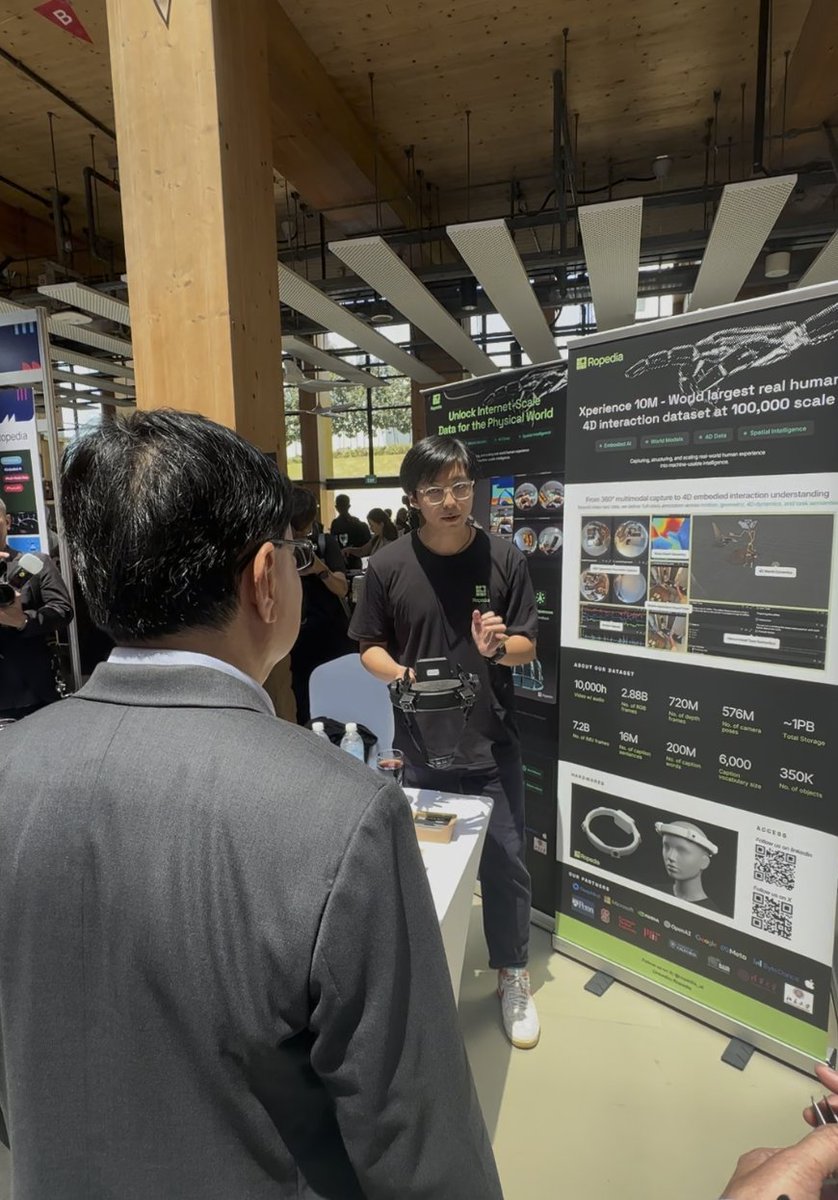

Today Ropedia releases Xperience-10M at #GTC day 1: the world's largest real human 4D interaction dataset, at 10M scale. Each trajectory aligns:
• visual observations
• spatial structure
• human motion
• interaction dynamics
• task semantics
A new foundation for physical and spatial AI. Try it out on @huggingface: huggingface.co/datasets/roped…

It turns out that VLAs learn to align human and robot behavior as we scale up pre-training with more robot data. In our new study at Physical Intelligence, we explored this "emergent" human-robot alignment and found that we could add human videos without any transfer learning!

We discovered an emergent property of VLAs like π0/π0.5/π0.6: as we scale up pre-training, the model learns to align human videos and robot data! This gives us a simple way to leverage human videos. Once π0.5 knows how to control robots, it can naturally learn from human video.