Ryan Teehan

264 posts

@rteehas

PhD Student @nyuniversity | prev. @stabilityai | x-cofounder @carperai | prev. @uchicago @TTIC_Connect

316 ARC-AGI tasks solved with zero learning. No neural net, no training, no DSL — just 19th-century projective geometry. Encode grid cell relationships as Plücker lines in P³, find transversals via Schubert calculus, score candidates by geometric incidence. 95% solve rate on the eval set (of non-timeout tasks). Single C file, runs in seconds.
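For readers unfamiliar with the geometry mentioned in the post, here is a minimal sketch of textbook Plücker coordinates and the standard incidence test for two lines in P³. It is not the post's C implementation and says nothing about how grid cells are encoded or how candidates are scored; the function names `plucker`, `reciprocal_product`, and `lines_meet` are hypothetical, and integer homogeneous coordinates are assumed so the zero test is exact.

```python
# Illustrative only: standard Plücker coordinates, not the code from the post.

def plucker(a, b):
    """Plücker coordinates of the line through homogeneous points a, b in P^3.

    Ordering used here: (p01, p02, p03, p23, p31, p12),
    where p_ij = a_i * b_j - a_j * b_i.
    """
    def p(i, j):
        return a[i] * b[j] - a[j] * b[i]
    return (p(0, 1), p(0, 2), p(0, 3), p(2, 3), p(3, 1), p(1, 2))


def reciprocal_product(l, m):
    """Bilinear pairing of two lines; it equals det[a, b, c, d] for l = ab, m = cd."""
    return (l[0] * m[3] + l[1] * m[4] + l[2] * m[5]
            + l[3] * m[0] + l[4] * m[1] + l[5] * m[2])


def lines_meet(l, m):
    """Two lines in P^3 are incident exactly when the pairing vanishes."""
    return reciprocal_product(l, m) == 0


# Points are (x, y, z, w) with w = 1 for ordinary affine points.
x_axis = plucker((0, 0, 0, 1), (1, 0, 0, 1))
y_axis = plucker((0, 0, 0, 1), (0, 1, 0, 1))
skew_line = plucker((0, 0, 1, 1), (0, 1, 1, 1))  # parallel to the y-axis at z = 1

print(lines_meet(x_axis, y_axis))     # the axes share the origin
print(lines_meet(x_axis, skew_line))  # these two lines never touch
```

A transversal to a set of lines is a line whose pairing with each of them is zero, which is the kind of condition Schubert calculus counts solutions for.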

“Some academics think that the students themselves are different: Whether because of concerns about the worsening job market or a cultural shift rightward, they seem less interested in raising hell on campus.” theatlantic.com/ideas/2026/03/…

Only a very high-level target (at the extreme, think class labels) can make shortcut solutions easier. If the loss has to explain a hierarchy instead of just a high-level target, you impose more semantic constraints on the representation, and you get less spuriousness.

new preprint! turns out, if your model is confident on _any_ long enough input, we can find other inputs where the model is wrong, yet its perplexity won't really tell you it's wrong 📉 work with @fedzbar @ccperivol @sindero and Razvan

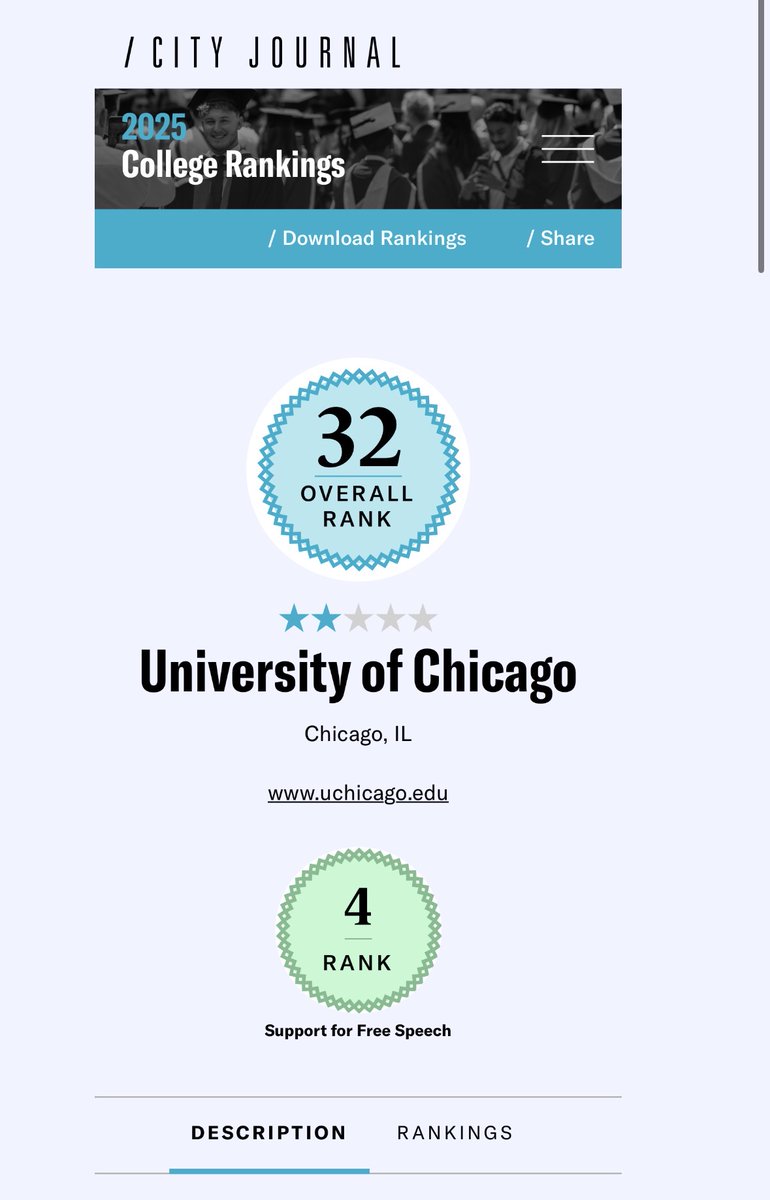

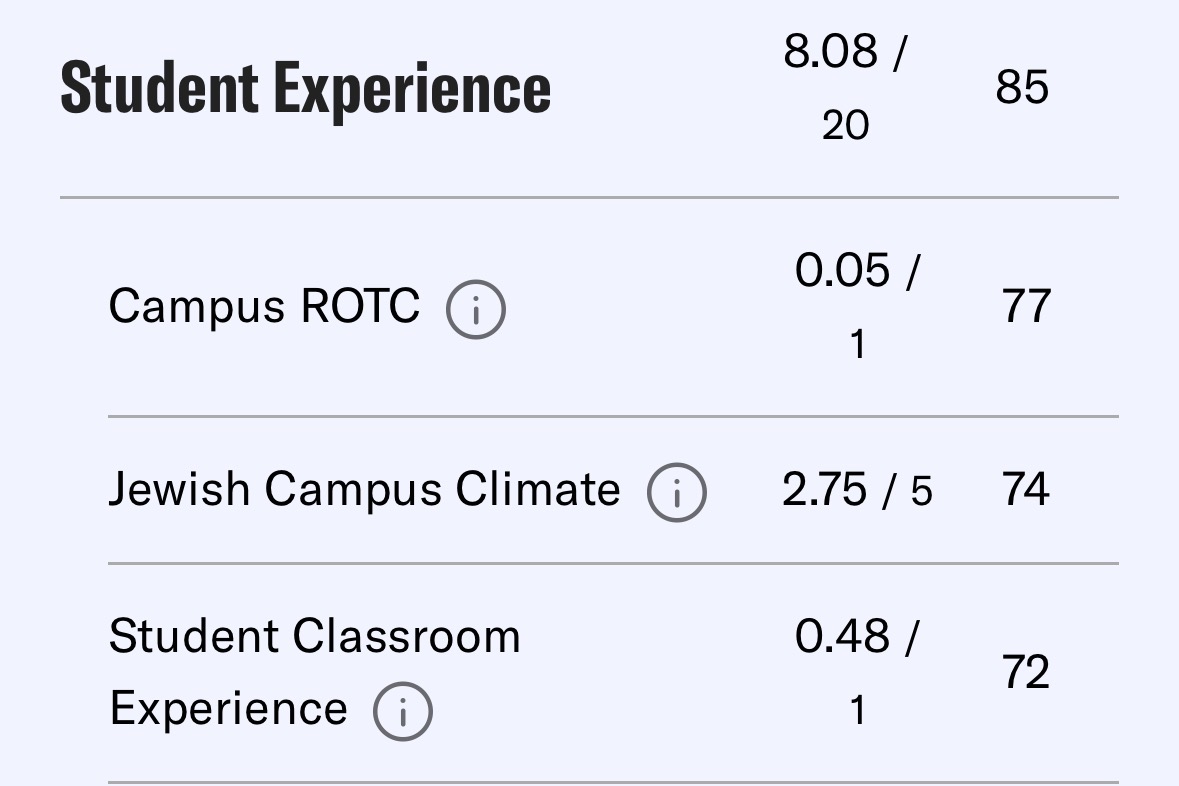

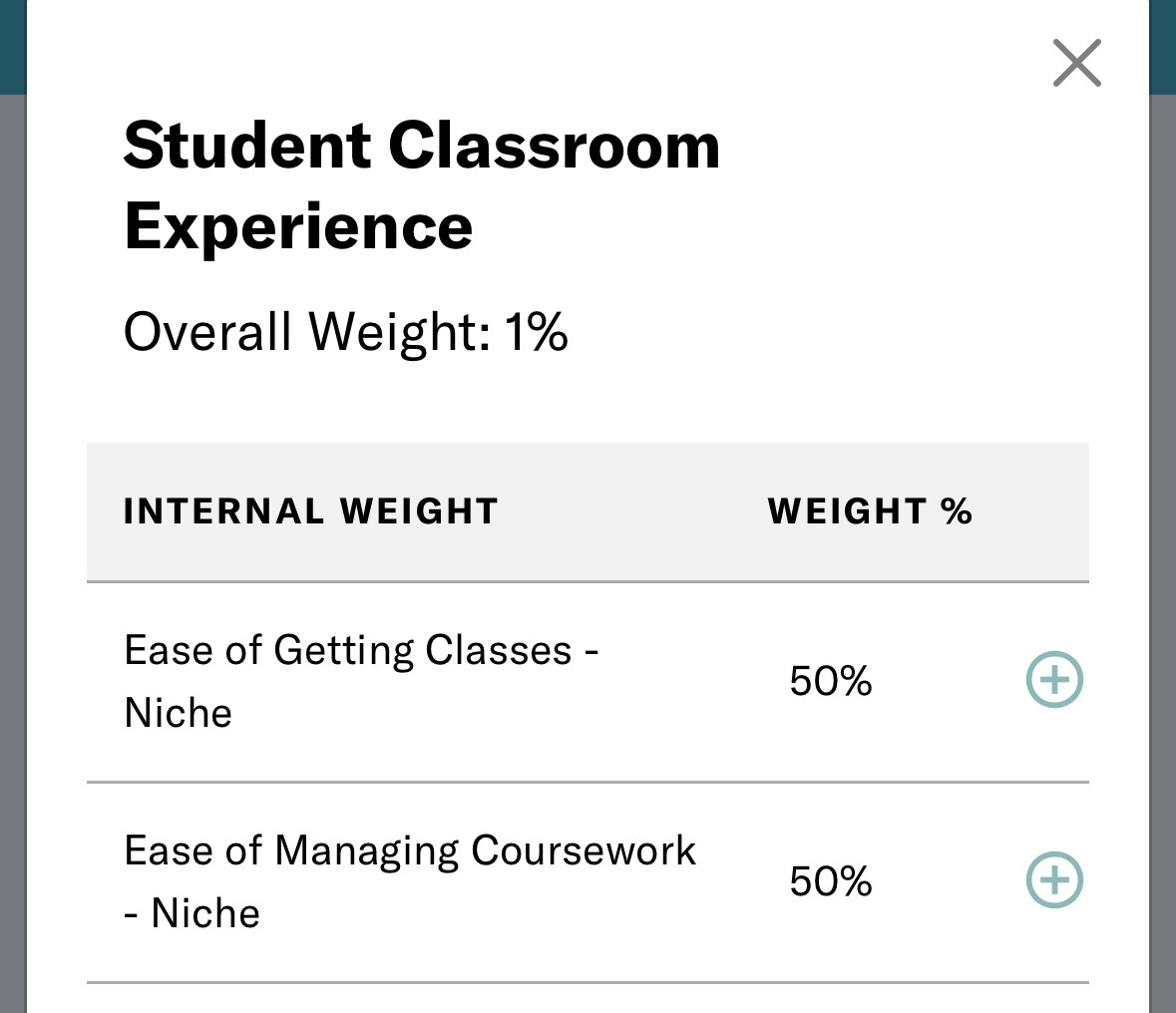
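As background for the preprint post above, a minimal sketch of the standard perplexity definition, exp of the mean negative log-probability per token. The token probabilities are made-up numbers, and the averaging effect shown is only one intuition for why perplexity can hide a bad token; it is not claimed to be the preprint's construction or argument.

```python
import math

# Illustrative only: the probabilities below are invented for the example.

def perplexity(token_probs):
    """Perplexity = exp(-(1/N) * sum(log p_i)) over per-token probabilities."""
    n = len(token_probs)
    return math.exp(-sum(math.log(p) for p in token_probs) / n)


confident = [0.9] * 100                  # every token predicted with p = 0.9
one_bad_long = [0.9] * 99 + [0.01]       # one badly predicted token in 100
one_bad_short = [0.9] * 9 + [0.01]       # the same bad token in only 10

print(perplexity(confident))
print(perplexity(one_bad_long))
print(perplexity(one_bad_short))
```

Because the log-probabilities are averaged over the sequence length, the single low-probability token moves the long sequence's perplexity far less than the short one's.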

The Manhattan Institute's inaugural 2025 university rankings btw, released as "a necessary corrective to outdated lists." The Bari Weiss slopulists have invented ways of reality-bending undreamt of by the undynamic DEI geriatrics

Do stronger LLMs make better verifiers? Not necessarily when grading themselves. New work led by Courant PhD student Jack Lu (@Jacklu_me) and CDS Asst Prof Mengye Ren (@mengyer) shows that cross-family verification outperforms self-verification. nyudatascience.medium.com/study-reveals-…

Wondering how to get the most out of LLM test-time verification? New study: “When Does Verification Pay Off? A Closer Look at LLMs as Solution Verifiers”. 🔍 37 models, 9 datasets 🔥 Self vs intra-family vs cross-family verification Result: verify across families! 🧵👇

ICL is powerful, but only if LLMs actually understand their contexts. Let’s optimize the KV-cache itself for few-shot adaptation! Introducing Context Tuning: 📎 Initialize prefixes from examples ⚙️ Optimize them via gradient descent 🚀 Unlock strong, efficient adaptation 🧵👇