Barabilo T @BarabiloT

Spent last week digging for tokens where value accrual is actual math you can model, not just conference theater. That search led me straight to $DROPEE.

Most tokens in this cycle are single-product bets (think $HYPE or $PUMP). Dropee is different. It's building the infrastructure to create, deploy, and distribute mini-apps directly inside Telegram. That distinction matters because it turns the platform into a revenue-generating layer rather than a one-off speculation.

The buyback mechanics are what caught my attention. Up to 50% of every dollar earned flows straight into on-chain $DROPEE buybacks. That's explicit, measurable, and repeatable. When you run even a modest slice of Telegram's $670M/yr mini-app market through that 50% band, you're suddenly modeling tens of millions in structural annual buying pressure. That's not narrative, that's a formula you can plug into a 2026 forecast.
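Here's that formula as a rough sketch you can drop into a spreadsheet or script. The $670M/yr market size and the 50% ceiling come from the numbers above; the market-share scenarios and the function name `annual_buyback_usd` are my own illustrative assumptions, not Dropee figures. Since the buyback is "up to" 50%, treat the output as an upper bound.

```python
def annual_buyback_usd(market_usd: float, revenue_share: float, buyback_rate: float) -> float:
    """Estimated yearly buyback = market size x share of market captured x buyback rate."""
    if not 0 <= revenue_share <= 1:
        raise ValueError("revenue_share must be between 0 and 1")
    if not 0 <= buyback_rate <= 1:
        raise ValueError("buyback_rate must be between 0 and 1")
    return market_usd * revenue_share * buyback_rate


MARKET_USD = 670_000_000   # Telegram mini-app market per year, as quoted above
MAX_BUYBACK_RATE = 0.50    # "up to 50%", so this is the ceiling, not a guarantee

# Hypothetical market-share scenarios (assumptions for illustration only)
for share in (0.01, 0.03, 0.05, 0.10):
    estimate = annual_buyback_usd(MARKET_USD, share, MAX_BUYBACK_RATE)
    print(f"{share:.0%} of market -> ${estimate / 1e6:.2f}M/yr in buybacks")
```

At the 50% ceiling, the estimate crosses $10M a year once revenue reaches roughly 3% of the market. If the effective buyback rate comes in lower, the share needed rises in proportion.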

What tightens the thesis further is the AI-native stack. Dropee Create is already shipping titles by automating visuals, mechanics, and basic code. Marginal cost per title drops hard, output scales fast, and eventually the community feeds revenue back into the ecosystem. More builders, more distribution, more buybacks. The flywheel becomes self-reinforcing once it's moving.

The $DROPEE Public Sale is live on ChainGPT Pad right now and closes May 25 at 12 PM UTC ahead of TGE. You're getting in while the buyback mechanics are already laid out and the AI engine is already producing at scale.

If Dropee keeps capturing and routing revenue into those buybacks, this evolves from "trust me bro" into a token with mechanics you can actually spreadsheet. That's exactly the kind of asymmetry I was looking for.

@dropee_app #DropeeCreate $DROPEE

━━━━━━━━━━━━━━━━━━━━

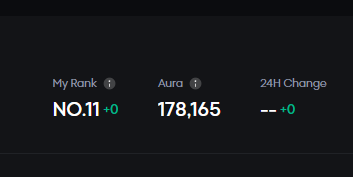

Spent some time looking into @wallchain, and the thesis is pretty clear: it helps Web3 brands find real influence at scale, while giving creators a way to prove real impact.

No vanity metrics. No bot noise. Just authentic attention, smarter targeting, and a reputation layer for Crypto Twitter that values quality, trust, and consistency over follower count.

━━━━━━━━━━━━━━━━━━━━

@3look_io feels like a smarter way to run creator campaigns. Instead of vague promo incentives, it gives campaigns defined rules, fixed reward pools, and automatic tracking.

Pre-TGE campaigns reward growth with points, while post-TGE campaigns pay out in crypto. Eligible posts are tracked, rewards are calculated from real engagement, and distribution runs until the pool is fully allocated.
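To make the fixed-pool idea concrete, here's a minimal sketch of one way such a payout could work. The pro-rata rule, the function name `allocate_rewards`, and the engagement scores are all my assumptions; 3look's actual scoring formula isn't described here.

```python
def allocate_rewards(pool: float, engagement: dict[str, float]) -> dict[str, float]:
    """Split a fixed reward pool across eligible posts in proportion to engagement.

    Assumption: pro-rata by a single engagement score per post.
    The platform's real formula may weight things differently.
    """
    total = sum(engagement.values())
    if total <= 0:
        # No measurable engagement, so nothing is paid out
        return {post: 0.0 for post in engagement}
    return {post: pool * score / total for post, score in engagement.items()}


# Hypothetical campaign: a 1,000-unit pool and two eligible posts
payouts = allocate_rewards(1000, {"post_a": 300, "post_b": 100})
print(payouts)
```

Under this rule the whole pool gets allocated in one pass, and a post's payout depends on its share of total engagement rather than on raw impressions.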

It feels like a more structured way to turn attention into rewards, instead of just chasing impressions.