Ryan Theisen

1.7K posts

@rythei

Constructing a series of breathing apparatus with kelp

Joined December 2012

432 Following · 300 Followers

@vin_sachi Who has a clue what’s in the train set in these models? The evals are basically marketing material now, so reasonable to assume labs cook up/buy/generate similar types of problems to train on

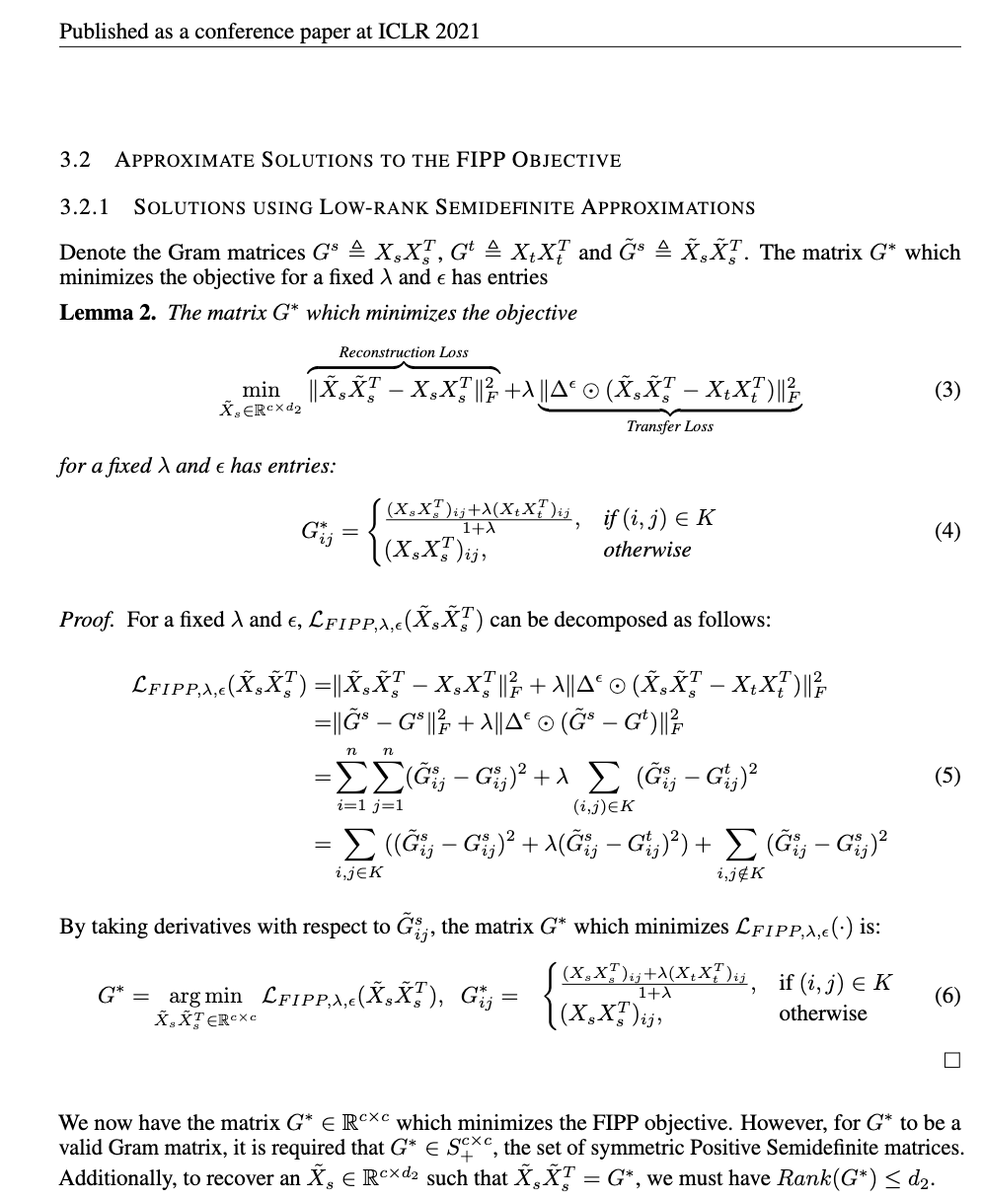

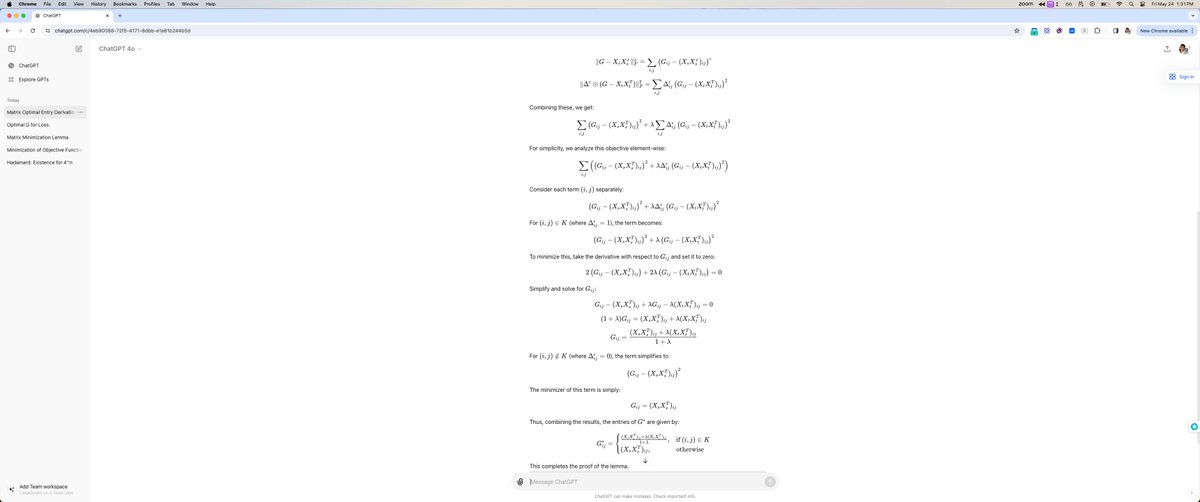

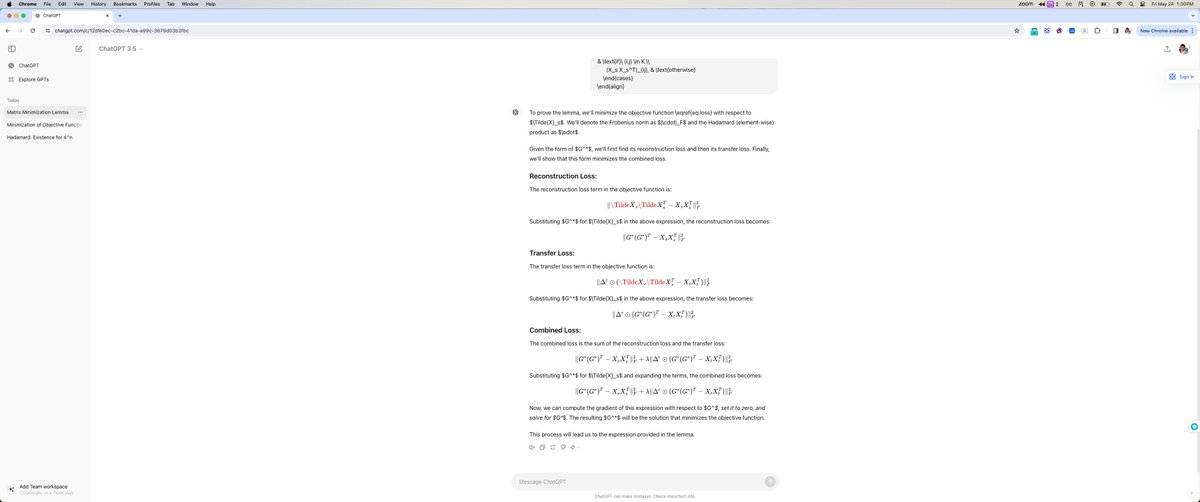

Amazing progress but one thing evals fail to capture is how generalizable reasoning models are OOD

Maybe one way to benchmark this would be the similarity between eval test set samples and their nearest neighbors in the model's train set

Probably a good paper there if it doesn’t exist
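A minimal sketch of the nearest-neighbor idea above, assuming train and test samples have already been mapped to embedding vectors. The embedding step, the function names, and the choice of cosine similarity are all illustrative assumptions, not something the post specifies:

```python
import math

def cosine(u, v):
    """Cosine similarity between two equal-length, non-zero vectors."""
    dot = sum(a * b for a, b in zip(u, v))
    norm_u = math.sqrt(sum(a * a for a in u))
    norm_v = math.sqrt(sum(b * b for b in v))
    return dot / (norm_u * norm_v)

def nearest_train_similarity(train_emb, test_emb):
    """For each test vector, return its similarity to the closest train vector."""
    return [max(cosine(t, x) for x in train_emb) for t in test_emb]

# Toy (hypothetical) embeddings: two train samples, two test samples.
train_emb = [[1.0, 0.0], [0.0, 1.0]]
test_emb = [[2.0, 0.0], [1.0, 1.0]]
scores = nearest_train_similarity(train_emb, test_emb)
```

Under this sketch, a test sample whose score is close to 1 has a near-duplicate in the train set, while lower scores suggest the sample is further out of distribution.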

adi@adonis_singh

this graph is so insane once you realize the graph for o3 is pass@1

SF is on the same path as Detroit in the 70’s - companies leaving, reduced population, depolicing and drug enablement

If you’re building something and don’t want to live in SF my dms are open. There’s plenty of strong technical talent elsewhere (nyc, boston, la, etc)

The San Francisco Standard@sfstandard

The 16th Street BART plaza is home to an ever-fluctuating market of sidewalk vendors, and while many of them are selling stolen goods, there are deals to be found. sfstandard.com/2024/11/29/bar…

@vin_sachi @McaleerStephen Soothr in ny is the best I’ve had in the states

@rythei @McaleerStephen Yeah had it on your reco from a while back and get it every time I’m in Seattle lol

@vin_sachi @McaleerStephen Yes and this is the best take re Khao Soi

@McaleerStephen Oh sick, will be in SF end of month - let’s do dinner there :) @rythei

Ryan Theisen retweeted

(Unsolicited) Advice for a young PhD student - “This is not vocational training”

I spent most of my afternoon today at Stanford. It’s the week before autumn quarter and 8 years since I came out to the farm with wide eyes. It was as good a time as any to reflect at a place that is still the most beautiful I’ve had the chance to call home. One thing I kept thinking about was the advice I’d give to those that were now starting on this path - things have changed so much. Nevertheless, I’d reiterate the advice that had been given to me that was most meaningful in retrospect.

Before coming out west, I’d built a startup in Boston. My first boss and CEO, a professor at MIT, had finished his PhD at Stanford 9 years prior. Through the infinite wisdom of hindsight, he’d provided me many pieces of prescient advice. One that stuck out, and is somewhat under communicated, was to not treat the degrees vocationally.

A parallel he drew is the historical origin of research based academia - that of patronage towards gifted youth. You see, many of the greats, such as Gauss, were given the privilege to learn and discover the fundamental truths through noble patronage. He’d told me that framing the program in this manner would be effective at making the most of it - an exceedingly rare privilege to retreat from the world to learn, discover, meet great people, and introspect. Not as a stepping stone or accomplishment towards a set path or career outcome - a unique aspect relative to any other degree.

In this vein, the PhD is not a means to an end and extrinsically a negative EV bet (maybe this is not true anymore with 7 figure AI salaries). The way to truly win the wager is not carefully crafting the best outcome at the end of the tunnel but through intrinsic value gained in discovery and relationships - learning to be a first principles reasoner, a cogent communicator of complex thought, an adept meta learner, and building authentic connections with those who are invariably the brightest in the world. The best way to do this is to be open-minded, non-transactional and throw out any plans you made on the way in. You have a lot of time, so try a lot of things.

If you play your cards right, you get the chance to live a very interesting life, compound knowledge tremendously, and enjoy the company of the best people along the way

@vin_sachi @y0b1byte It does if your objective has sufficient curvature ;)

@y0b1byte L1 does not explicitly guarantee sparsity, just that \sum_i |x_i| is small. L0 does explicitly encourage sparsity but is not convex. L1 is the closest convex proxy to L0 (you can plot the level sets and look at distance to project between the two)
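A small sketch of both claims, using hypothetical helper names `l0` and `l1` and toy vectors chosen for illustration: a dense vector can have a smaller L1 value than a sparse one, and L0 violates the midpoint convexity inequality where L1 does not.

```python
def l0(x):
    """Number of non-zero entries (the L0 'norm')."""
    return sum(1 for v in x if v != 0)

def l1(x):
    """Sum of absolute values (the L1 norm)."""
    return sum(abs(v) for v in x)

# Small L1 does not imply sparsity: `dense` has no zero entries,
# yet its L1 value is smaller than that of the 1-sparse vector.
dense = [0.01] * 10
sparse = [1.0] + [0.0] * 9

# Convexity check at the midpoint of two 1-sparse vectors:
# a convex f satisfies f(mid) <= 0.5 * f(a) + 0.5 * f(b).
a, b = [1.0, 0.0], [0.0, 1.0]
mid = [0.5 * u + 0.5 * v for u, v in zip(a, b)]

l0_is_convex_here = l0(mid) <= 0.5 * l0(a) + 0.5 * l0(b)  # False
l1_is_convex_here = l1(mid) <= 0.5 * l1(a) + 0.5 * l1(b)  # True
```

This only checks a single pair of points, so it shows that L0 fails convexity here rather than proving anything general about L1.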

@vin_sachi One’s knowledge cutoff was obviously after the paper was posted, and obviously an LM can’t do symbolic differentiation (nor would it ever make sense to use it for that). Kind of a trivial example imo

Ryan Theisen retweeted

@Jayyzaba Yeah, tbf would never have predicted Nelli’s output to fall off so much. Competition for Him + Saka has to be a priority this summer you’d think

@Jayyzaba Idk, he’s a luxury player to support other elite goal scorers, which we don’t have with enough consistency

Ryan Theisen retweeted