Sanjay Kariyappa

14 posts

@sanjayatwork

AI Research @ NVIDIA

Palo Alto, CA · Joined September 2019

206 Following · 83 Followers

Come check out our poster if you're attending the conference in person!

Full paper: openreview.net/pdf?id=Ai1TyAj…

This work was done during my internship at FAIR/@MetaAI with my amazing collaborators: Chuan Guo, @kiwanmaeng, Wenjie Xiong, G. Edward Suh, @mointweets & @hsienhsinlee.

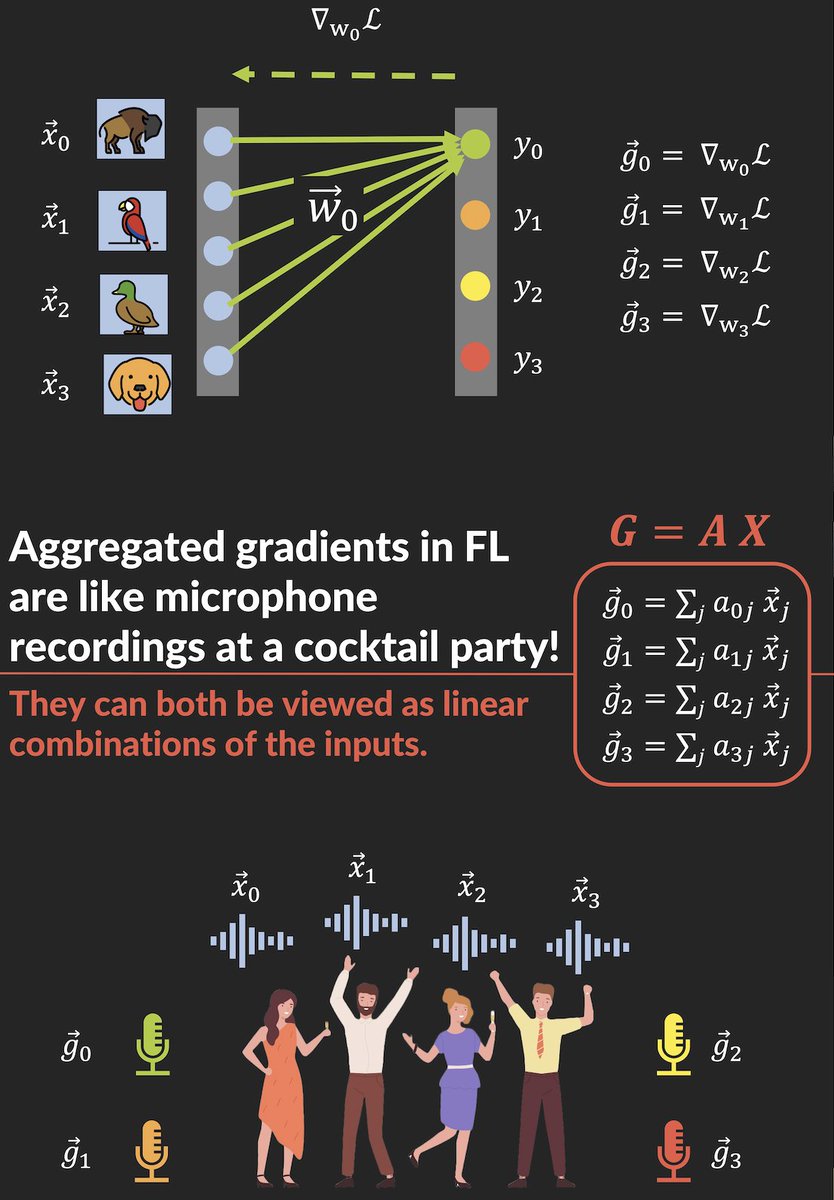

Excited to present our #ICML2023 paper Cocktail Party Attack 🍸 today at 11:00 am in Exhibit Hall 1! We develop a highly scalable attack that leaks private inputs from gradients in federated learning by framing the attack as a blind-source separation problem.

@SupriyaN20 Looks great! Very clean. Need to try this sometime.

Tried the #betterposter at #CHIL23 and definitely had more engaging and insightful discussions! Check out our work here: amazon.science/publications/e…

chilconference @CHILconference

@SiyiTang_ @jdunnmon @vickyqu0 @KhaledSaab11 @TinaBaykaner45 @ChrisLeeMesser @rubinqilab How can physicians' trust in ML models be improved? In their #CHIL23 paper, @AmazonScience researchers & colleagues introduce a method to generate realistic time series counterfactuals to explain a given ML model. chilconference.org/proceeding_P21… @SupriyaN20 @RehgJim @Mashah08 @DocWagz

Sanjay Kariyappa retweeted

Very nice to see this work by @sanjayatwork and @mointweets. It's always great to see attacks against methods that are only "intuitively" privacy preserving, like split learning and federated learning (without differential privacy on top).

I'll be presenting our work "ExPLoit: Extracting Private Labels in Split Learning" at 1PM ET today at @satml_conf. Our work demonstrates that split learning does not protect label privacy by designing a high-accuracy label leakage attack.

How can we steal the functionality of a black-box ML model without using any datasets? Come learn about our data-free attack MAZE being presented at @CVPR between 11:00AM-1:30PM ET. Joint work with @atulprakash and @mointweets.

openaccess.thecvf.com/content/CVPR20…

Sanjay Kariyappa retweeted

Our CVPR'21 paper on two novel model stealing attacks: (1) MAZE for completely data-free settings (no datasets needed) and (2) MAZE-PD when limited in-distribution data is available. With Sanjay Kariyappa @sanjayatwork and Moin Qureshi @mointweets. openaccess.thecvf.com/content/CVPR20…

Excited to present our work on defending against model stealing attacks at #ICLR2021. Stop by poster session 9 at 8 pm ET today for a chat.

Paper: openreview.net/pdf?id=LucJxyS…

Georgia Tech School of Computer Science @gatech_scs

@sanjayatwork and @mointweets created a new defense that prevents model cloning. #ICLR2021 scs.gatech.edu/news/647163/ne…
