Peter Ince

604 posts

@satoriweb

Researcher and harness mechanic @cenva_intel, PhD on AI/LLMs for Smart Contract Security.

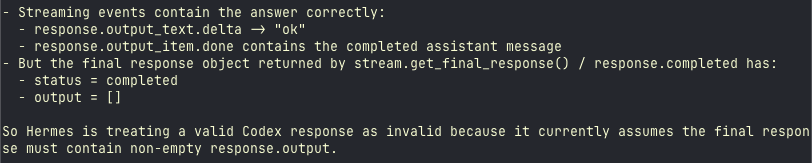

Kimi K2.6 is free on Nous Portal for the next 24 hours. Made possible by @vercel's AI Gateway & @Kimi_Moonshot. Run 'hermes update', then 'hermes model', and select Kimi K2.6 to try out one of the most impressive open model releases ever.

wow at this rate we will have 13 trillion users by the end of 2027

Exciting news - GPT-Image-2 by @OpenAI has claimed the #1 spot across all Image Arena leaderboards! A clean sweep with a record-breaking +242 point lead in Text-to-Image - the largest gap we’ve seen to date.

- #1 Text-to-Image (1512), +242 over #2 (Nano-banana-2 with web-search aka gemini-3.1-flash-image)
- #1 Single-Image Edit (1513), +125 over #2 (Nano-banana-pro aka gemini-3-pro-image)
- #1 Multi-Image Edit (1464), +90 over #2 (Nano-banana-2)

No model has dominated Image Arena with margins this wide. Huge congratulations to @OpenAI on this major breakthrough in image generation! More performance breakdowns by category in the thread below.

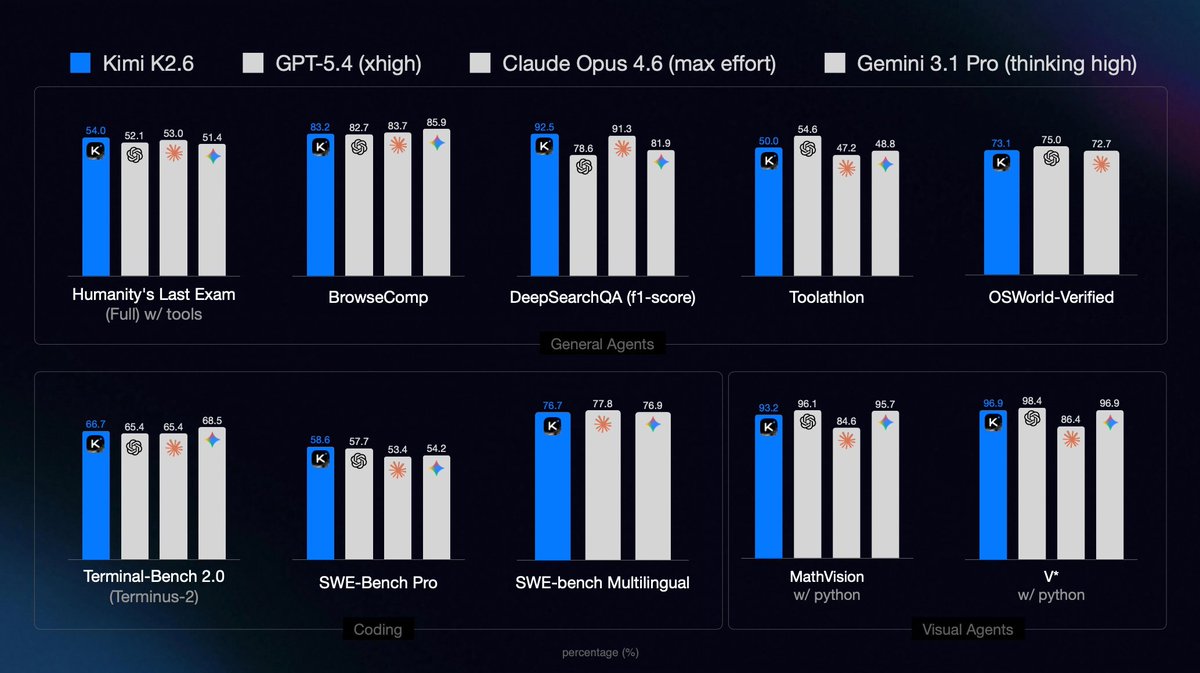

Meet Kimi K2.6: Advancing Open-Source Coding

🔹 Open-source SOTA on HLE w/ tools (54.0), SWE-Bench Pro (58.6), SWE-bench Multilingual (76.7), BrowseComp (83.2), Toolathlon (50.0), Charxiv w/ python (86.7), Math Vision w/ python (93.2)

What's new:

🔹 Long-horizon coding - 4,000+ tool calls, over 12 hours of continuous execution, with generalization across languages (Rust, Go, Python) and tasks (frontend, devops, perf optimization).

🔹 Motion-rich frontend - Videos in hero sections, WebGL shaders, GSAP + Framer Motion, Three.js 3D.

🔹 Agent Swarms, elevated - 300 parallel sub-agents × 4,000 steps per run (up from K2.5's 100 / 1,500). One prompt, 100+ files.

🔹 Proactive Agents - K2.6 model powers OpenClaw, Hermes Agent, etc for 24/7 autonomous ops.

🔹 Claw Groups (research preview) - bring your own agents, command your friends', bots & humans in the loop.

K2.6 is now live on kimi.com in chat mode and agent mode. For production-grade coding, pair K2.6 with Kimi Code: kimi.com/code

🔗 API: platform.moonshot.ai

🔗 Tech blog: kimi.com/blog/kimi-k2-6

🔗 Weights & code: huggingface.co/moonshotai/Kim…