Samy Badreddine

@sbadredd

Research Scientist at SonyAI - PhD student at FBK. Interested in Neurosymbolic AI and Probabilistic ML. https://t.co/pQK2WIBhEd

This week, we were very proud to host three brilliant young researchers: @sbadredd, Christoph Wehner and @olgamshk. They gave super interesting talks and engaged in inspiring discussions with our PhD students. Thank you for visiting! @lasige

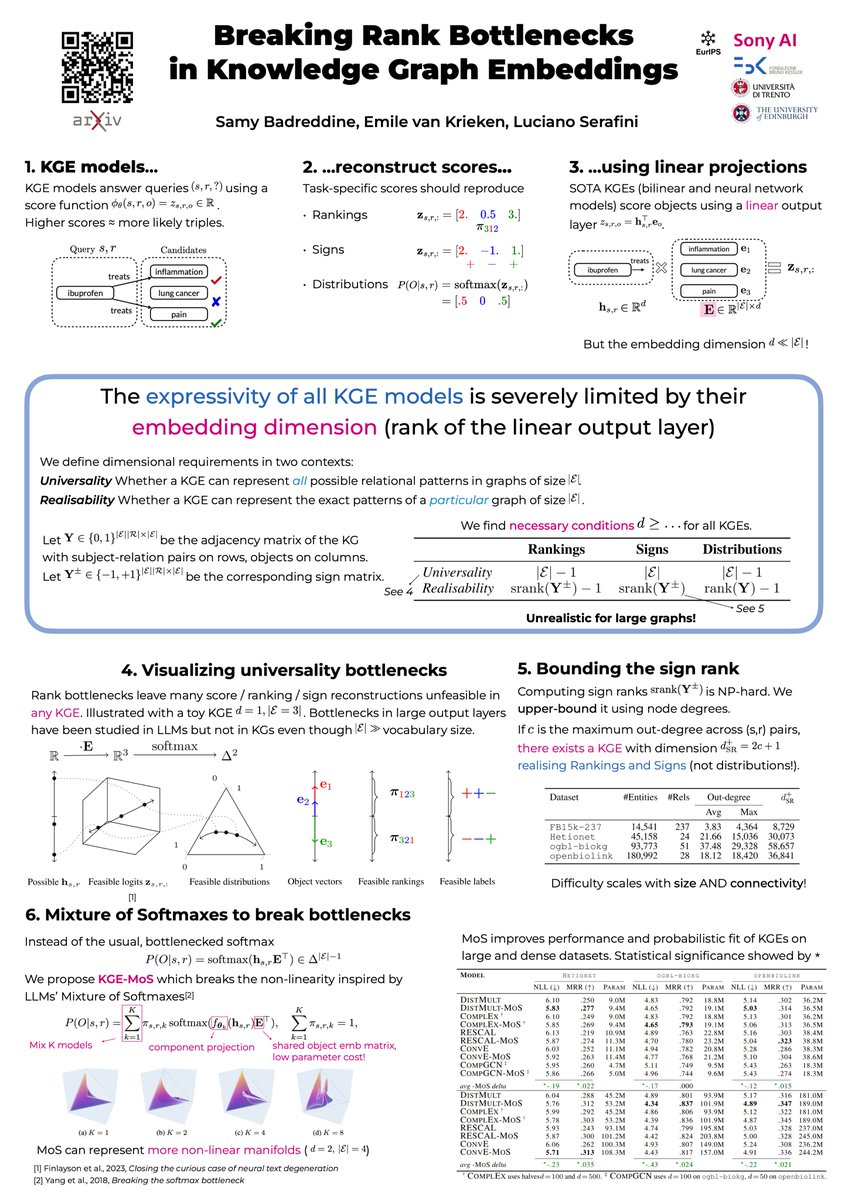

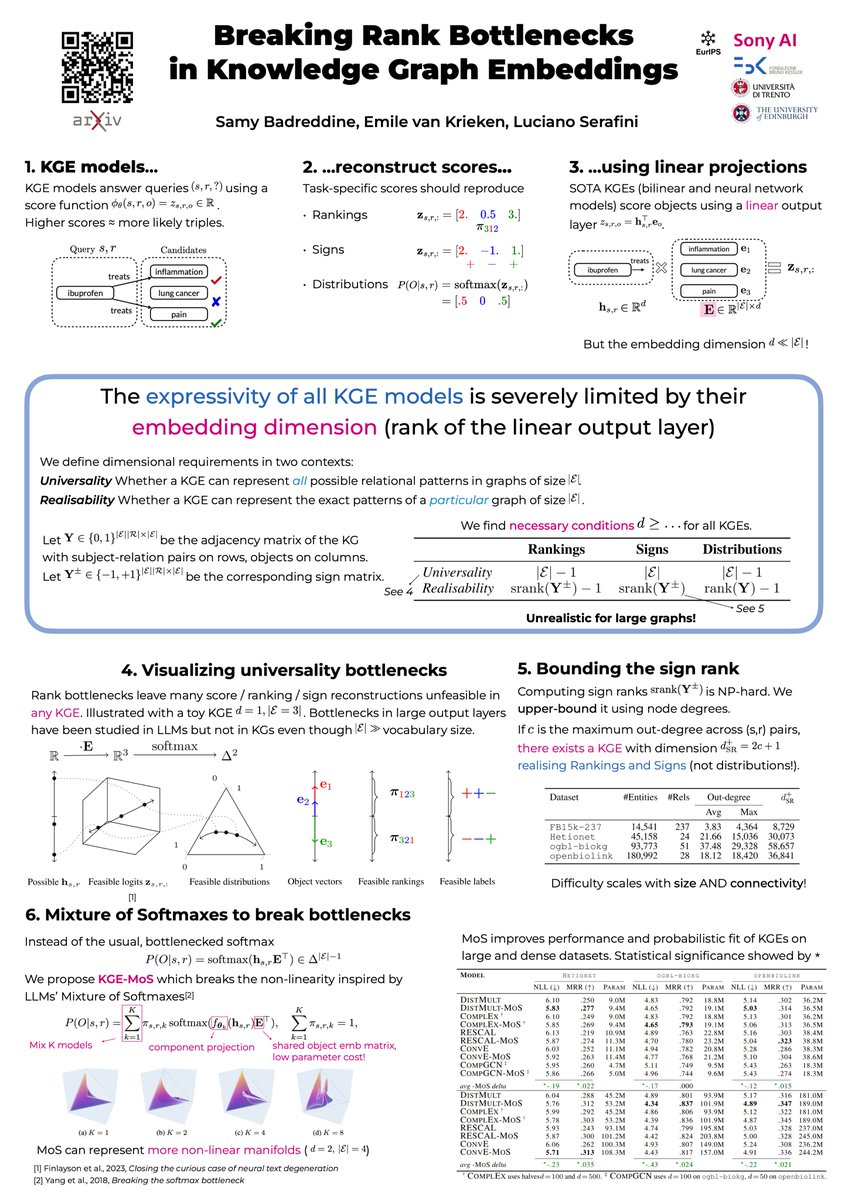

Very excited to be in Barcelona for NeSy 2024! We're presenting two papers:

- Our ICML paper x.com/EmilevanKrieke…
- A preview of our new NeSy library ULLER together with @sbadredd, @e_giunchiglia and Robin Manhaeve.

We will give a special tutorial on Thursday! Hit me up!

NeSy2024 conference on Neurosymbolic AI starting tomorrow: sites.google.com/view/nesy2024