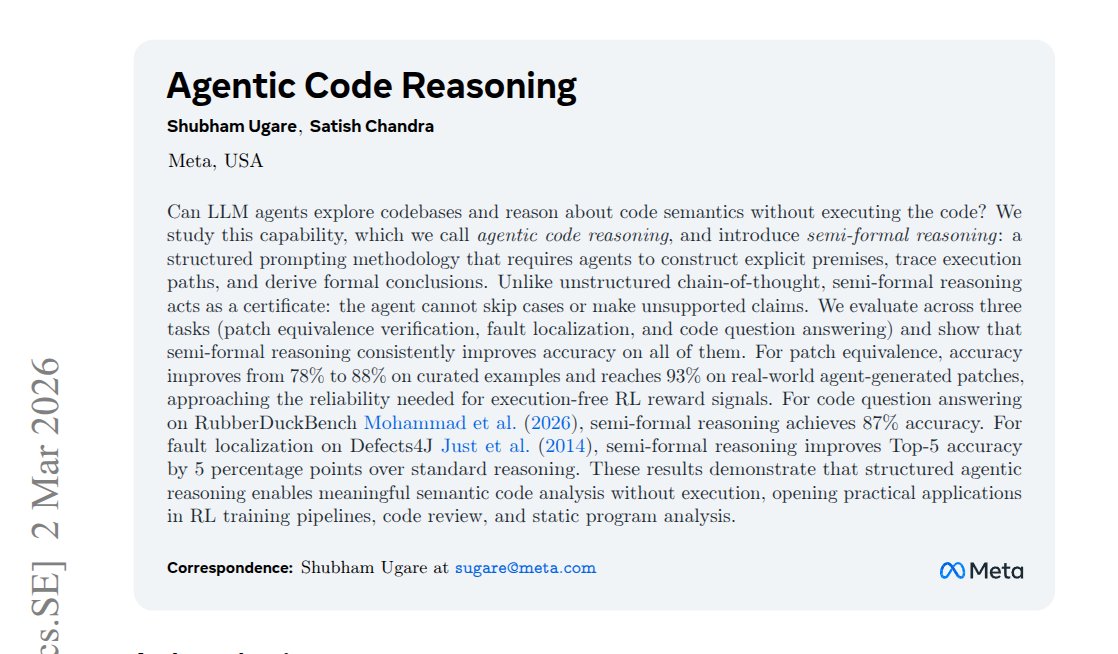

Meta found that forcing an LLM to show its work, step by step, with evidence for every claim, nearly halves its error rate when verifying code patches

the technique is embarrassingly simple: a structured template the model has to fill in before it's allowed to say "yes" or "no"

no fine-tuning. no new architecture. just a checklist that won't let the model skip steps
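
a rough sketch of what that kind of checklist gate could look like in Python. this is not Meta's actual template: the section names (`premises`, `trace`), the `Certificate` and `Claim` types, and the helper functions are all hypothetical, made up here just to show the idea that a "yes" or "no" only counts once every claim has evidence attached

```python
from __future__ import annotations

from dataclasses import dataclass, field


@dataclass
class Claim:
    statement: str
    evidence: str  # hypothetical: e.g. a file:line reference or a quoted snippet


@dataclass
class Certificate:
    # Hypothetical section names; the real template may be organized differently.
    premises: list[Claim] = field(default_factory=list)
    trace: list[Claim] = field(default_factory=list)
    verdict: str = ""  # expected to be "yes" or "no"


def missing_steps(cert: Certificate) -> list[str]:
    """Return a list of reasons the certificate is incomplete (empty if none)."""
    problems: list[str] = []
    for name in ("premises", "trace"):
        claims = getattr(cert, name)
        if not claims:
            problems.append(f"{name}: section is empty")
        for i, claim in enumerate(claims):
            if not claim.evidence.strip():
                problems.append(f"{name}[{i}]: claim has no evidence")
    if cert.verdict not in ("yes", "no"):
        problems.append("verdict: must be 'yes' or 'no'")
    return problems


def accept_verdict(cert: Certificate) -> str:
    """Return the verdict only if every section is filled in with evidence."""
    problems = missing_steps(cert)
    if problems:
        raise ValueError("; ".join(problems))
    return cert.verdict
```

in practice the model's filled-in template would be parsed into something like `Certificate`, and an incomplete one would be sent back for another pass instead of being trusted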
