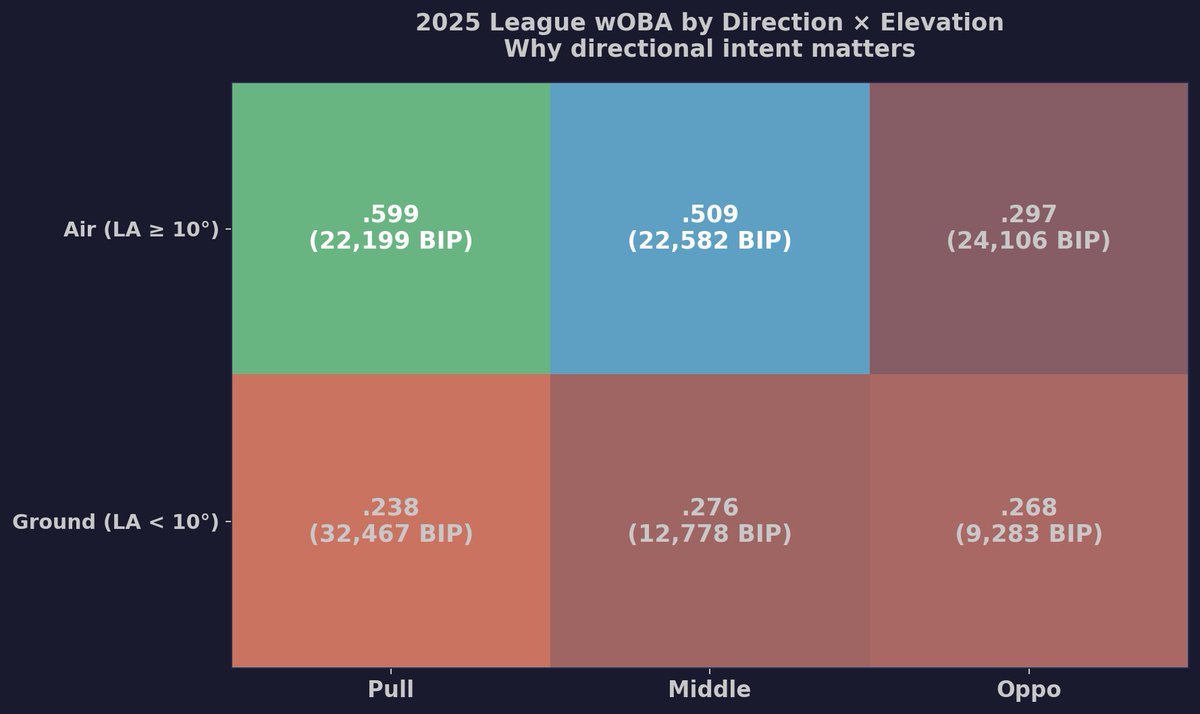

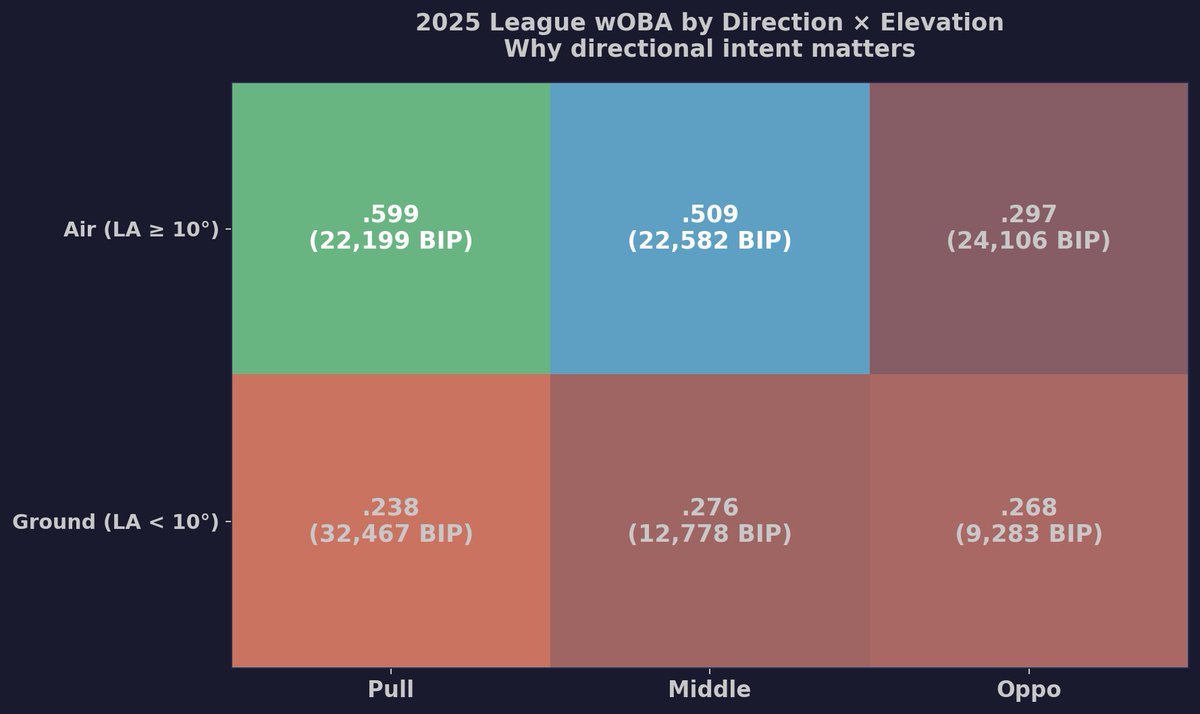

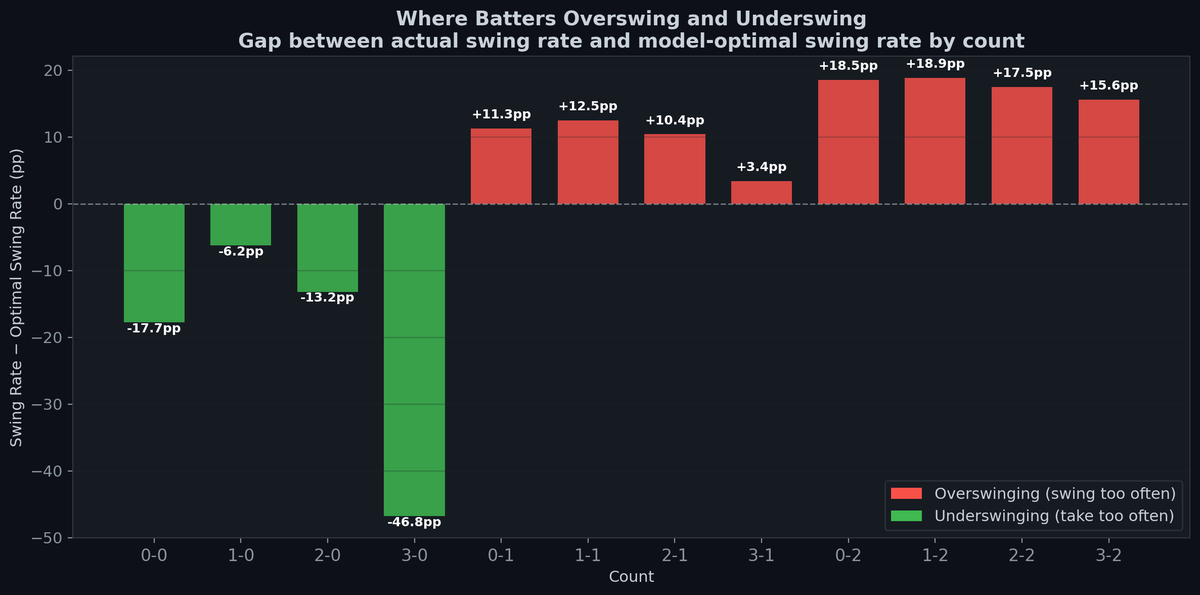

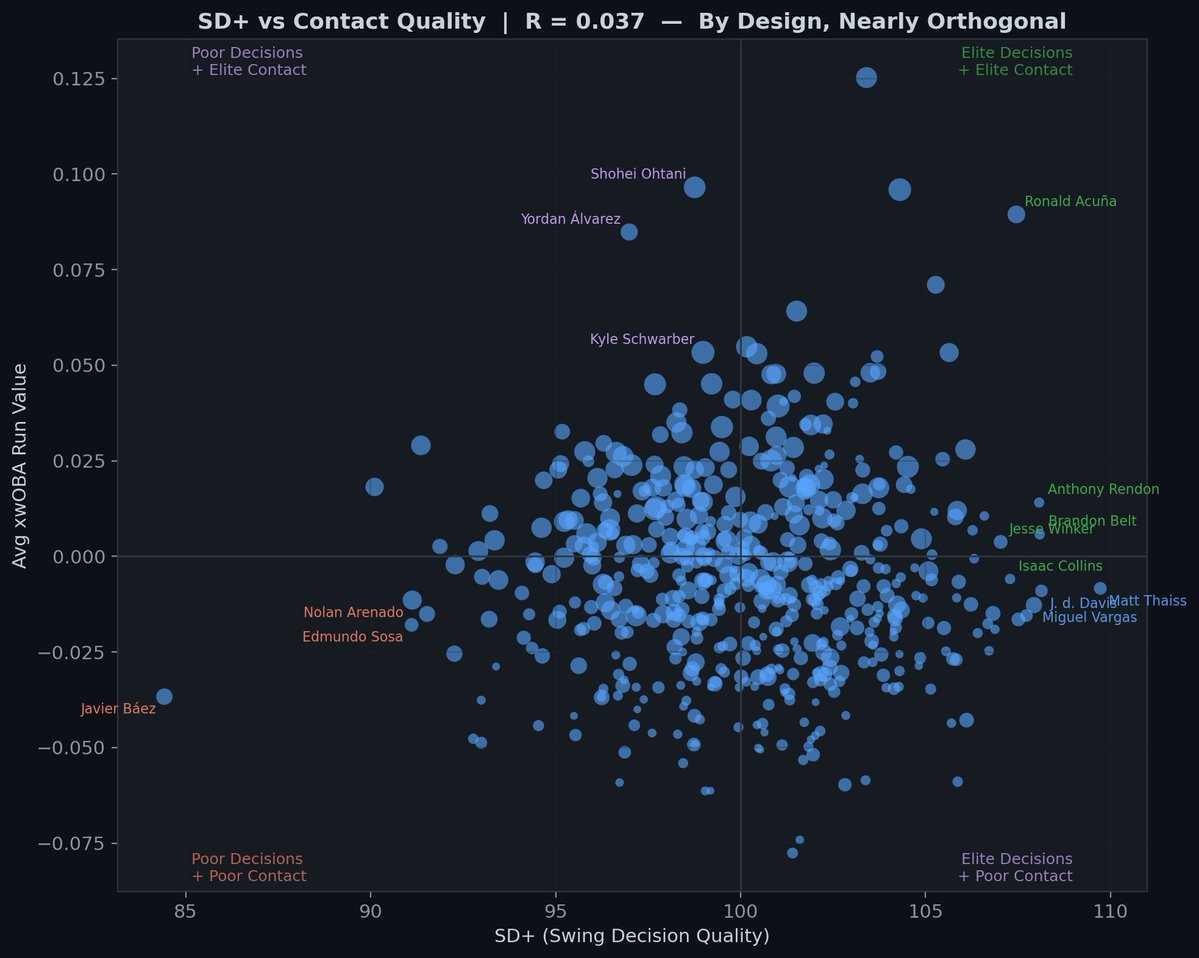

The conventional wisdom is "be aggressive in hitter's counts" but the data says batters aren't aggressive enough. The bars show the gap between what batters actually do vs what the model says is optimal in each count.
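One way to read the chart is as a per-count subtraction: the swing rate the model recommends minus the swing rate batters actually show. Below is a minimal sketch of that calculation. The function name `aggression_gap` and every number in the usage lines are placeholders made up to show the shape of the inputs; none of them come from the chart or the underlying model.

```python
from typing import Dict, Tuple

Count = Tuple[int, int]  # (balls, strikes)


def aggression_gap(
    actual: Dict[Count, float], optimal: Dict[Count, float]
) -> Dict[Count, float]:
    """Return optimal minus actual swing rate for each count found in both inputs.

    A positive value means batters swing less often than the model recommends.
    """
    shared_counts = sorted(actual.keys() & optimal.keys())
    return {count: optimal[count] - actual[count] for count in shared_counts}


# Placeholder rates, invented purely for illustration (not real data).
actual = {(2, 0): 0.40, (3, 1): 0.55}
optimal = {(2, 0): 0.50, (3, 1): 0.70}
gaps = aggression_gap(actual, optimal)
```

With these placeholder inputs both gaps come out positive, which is the pattern the post describes for hitter's counts.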

Scott

@sdmiddlecamp

Wake Forest MS student. @CalPoly alum. Formerly @DrivelineBB, @SLOTribune, Sounders FC.

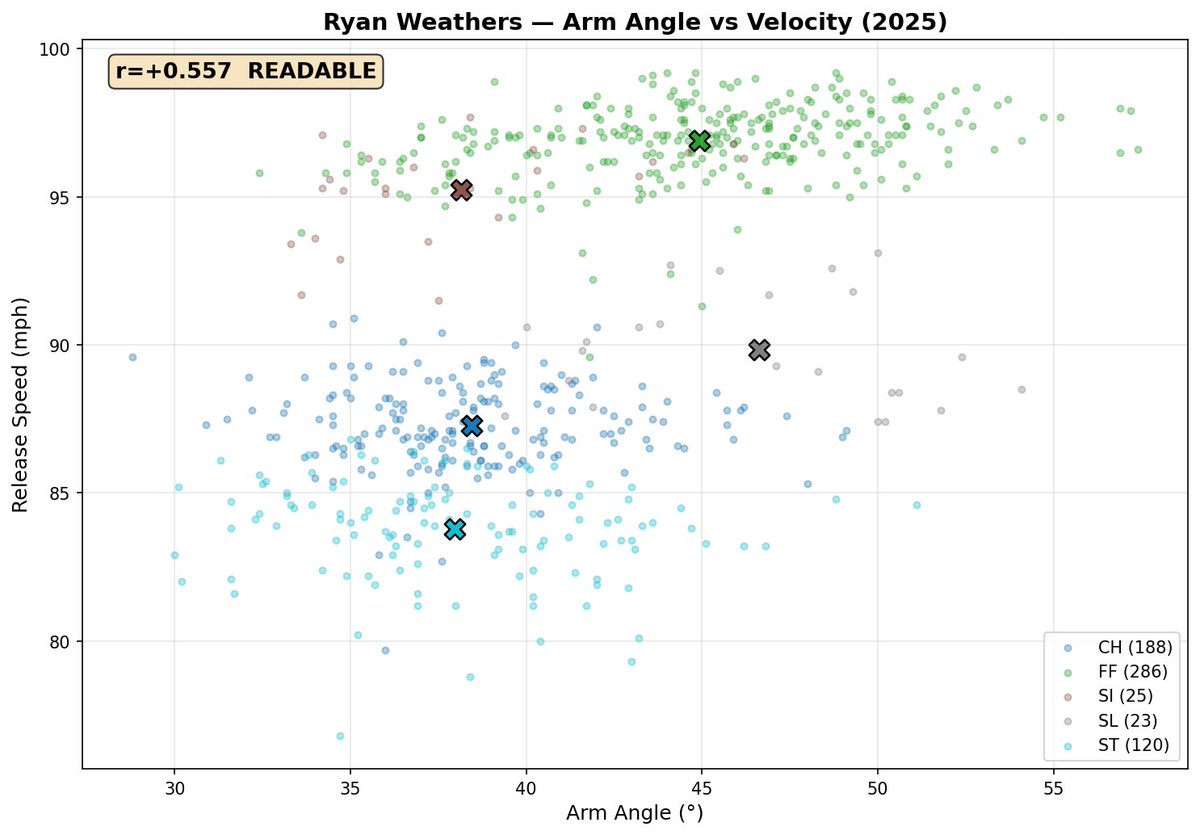

We've come so far in the public world that we've gotten a little repetitive. I have neither the time nor the skills to do the research on it, but I'd welcome it if someone (smarter and less busy) did new research on arm angles affecting pitch grips, swing decisions based on counts, and other topics.

@drivelinekyle @GiuseppePaps I think about this a lot when it comes to vibecoded platforms in general. If the barrier to entry is tokens, what’s the moat? Name recognition? And how long can someone coast purely on name recognition?

If I were (much) younger, and keen on working in baseball, I would build a computer vision program to quantify how much information pitchers leak when they deliver their different pitch types. Batters are computational geniuses masquerading as athletes.

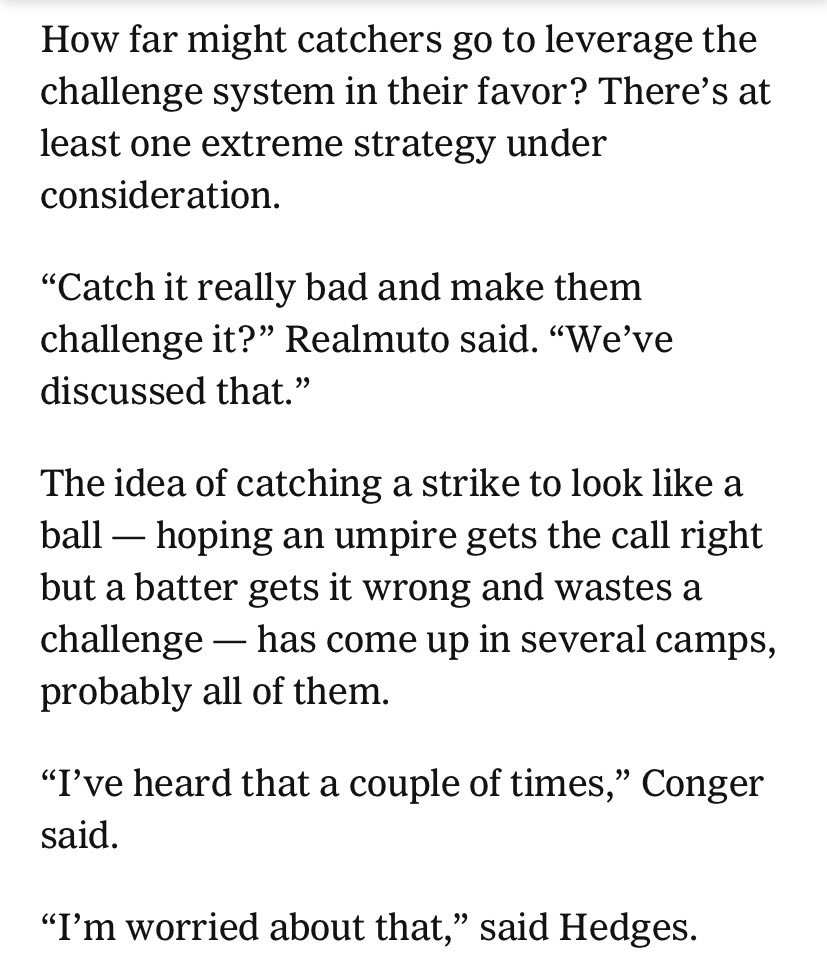

It’s no longer enough to know the strike zone. Big league catchers now have to learn every strike zone and better recognize the difference between, say, the top of 5-foot-6 Jose Altuve’s strike zone and the top of 6-foot-7 Aaron Judge’s.

Three days ago I left autoresearch tuning nanochat for ~2 days on depth=12 model. It found ~20 changes that improved the validation loss. I tested these changes yesterday and all of them were additive and transferred to larger (depth=24) models. Stacking up all of these changes, today I measured that the leaderboard's "Time to GPT-2" drops from 2.02 hours to 1.80 hours (~11% improvement), this will be the new leaderboard entry. So yes, these are real improvements and they make an actual difference. I am mildly surprised that my very first naive attempt already worked this well on top of what I thought was already a fairly manually well-tuned project.

This is a first for me because I am very used to doing the iterative optimization of neural network training manually. You come up with ideas, you implement them, you check if they work (better validation loss), you come up with new ideas based on that, you read some papers for inspiration, etc etc. This is the bread and butter of what I do daily for 2 decades. Seeing the agent do this entire workflow end-to-end and all by itself as it worked through approx. 700 changes autonomously is wild. It really looked at the sequence of results of experiments and used that to plan the next ones. It's not novel, ground-breaking "research" (yet), but all the adjustments are "real", I didn't find them manually previously, and they stack up and actually improved nanochat. Among the bigger things e.g.:

- It noticed an oversight that my parameterless QKnorm didn't have a scalar multiplier attached, so my attention was too diffuse. The agent found multipliers to sharpen it, pointing to future work.
- It found that the Value Embeddings really like regularization and I wasn't applying any (oops).
- It found that my banded attention was too conservative (I forgot to tune it).
- It found that AdamW betas were all messed up.
- It tuned the weight decay schedule.
- It tuned the network initialization.

This is on top of all the tuning I've already done over a good amount of time. The exact commit is here, from this "round 1" of autoresearch. I am going to kick off "round 2", and in parallel I am looking at how multiple agents can collaborate to unlock parallelism. github.com/karpathy/nanoc…

All LLM frontier labs will do this. It's the final boss battle. It's a lot more complex at scale of course - you don't just have a single train.py file to tune. But doing it is "just engineering" and it's going to work. You spin up a swarm of agents, you have them collaborate to tune smaller models, you promote the most promising ideas to increasingly larger scales, and humans (optionally) contribute on the edges. And more generally, *any* metric you care about that is reasonably efficient to evaluate (or that has more efficient proxy metrics such as training a smaller network) can be autoresearched by an agent swarm. It's worth thinking about whether your problem falls into this bucket too.
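The "promote the most promising ideas to increasingly larger scales" step can be sketched as a filter applied once per scale, similar in spirit to successive halving. This is only an illustration under stated assumptions: the function `promote`, the `keep_fraction` parameter, the idea labels, and the toy loss table are all hypothetical, and nothing here is taken from the nanochat or autoresearch code.

```python
from typing import Callable, List, Sequence


def promote(
    ideas: Sequence[str],
    scales: Sequence[int],
    score: Callable[[str, int], float],
    keep_fraction: float = 0.5,
) -> List[str]:
    """Evaluate ideas at each scale in order and pass only the best fraction on.

    `score(idea, scale)` returns a loss (lower is better). In a real setup it
    would launch a training run; here it is whatever the caller provides.
    """
    survivors = list(ideas)
    for scale in scales:
        ranked = sorted(survivors, key=lambda idea: score(idea, scale))
        n_keep = max(1, int(len(ranked) * keep_fraction))  # always keep at least one
        survivors = ranked[:n_keep]
    return survivors


# Hypothetical stand-in for real training runs: a made-up loss per idea,
# scaled down at larger depth so the ranking stays the same across scales.
toy_loss = {"idea_a": 0.3, "idea_b": 0.1, "idea_c": 0.4, "idea_d": 0.2}


def toy_score(idea: str, depth: int) -> float:
    return toy_loss[idea] / depth


winners = promote(list(toy_loss), scales=[12, 24], score=toy_score)
```

The cheap scale prunes most candidates so that the expensive scale only sees the ones that already looked good, which is why the approach depends on improvements transferring from small models to larger ones.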

I packaged up the "autoresearch" project into a new self-contained minimal repo if people would like to play over the weekend. It's basically nanochat LLM training core stripped down to a single-GPU, one file version of ~630 lines of code, then:

- the human iterates on the prompt (.md)
- the AI agent iterates on the training code (.py)

The goal is to engineer your agents to make the fastest research progress indefinitely and without any of your own involvement. In the image, every dot is a complete LLM training run that lasts exactly 5 minutes. The agent works in an autonomous loop on a git feature branch and accumulates git commits to the training script as it finds better settings (of lower validation loss by the end) of the neural network architecture, the optimizer, all the hyperparameters, etc. You can imagine comparing the research progress of different prompts, different agents, etc. github.com/karpathy/autor…

Part code, part sci-fi, and a pinch of psychosis :)
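The accept-if-better loop described above can be reduced to a greedy hill climb: propose a change, run a fixed-budget evaluation, and keep the change only if validation loss drops. The sketch below is an assumption-laden toy, not the repo's code. `autoresearch_loop`, `toy_propose`, and `toy_evaluate` are hypothetical names, a random perturbation stands in for the agent's edit to the training script, and a closed-form function stands in for a 5-minute training run.

```python
import random
from typing import Callable, Dict, List, Tuple

Config = Dict[str, float]


def autoresearch_loop(
    base: Config,
    propose: Callable[[Config, random.Random], Config],
    evaluate: Callable[[Config], float],
    n_trials: int,
    seed: int = 0,
) -> Tuple[Config, float, List[float]]:
    """Greedy hill climb: keep a proposed change only if validation loss drops."""
    rng = random.Random(seed)
    best, best_loss = dict(base), evaluate(base)
    history = [best_loss]
    for _ in range(n_trials):
        candidate = propose(best, rng)
        loss = evaluate(candidate)
        if loss < best_loss:  # analogous to committing the change to the branch
            best, best_loss = candidate, loss
        history.append(best_loss)  # best-so-far after each trial
    return best, best_loss, history


def toy_propose(cfg: Config, rng: random.Random) -> Config:
    """Hypothetical stand-in for the agent: rescale one randomly chosen setting."""
    new_cfg = dict(cfg)
    key = rng.choice(sorted(new_cfg))
    new_cfg[key] *= rng.uniform(0.5, 1.5)
    return new_cfg


def toy_evaluate(cfg: Config) -> float:
    """Hypothetical stand-in for a training run: a made-up bowl-shaped loss."""
    return (cfg["lr"] - 0.02) ** 2 + (cfg["weight_decay"] - 0.1) ** 2


best, best_loss, history = autoresearch_loop(
    {"lr": 0.1, "weight_decay": 0.5}, toy_propose, toy_evaluate, n_trials=200
)
```

Because rejected candidates are discarded, `history` never increases, which matches the staircase shape one would expect when plotting best validation loss against run number.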